STAT946F17/Decoding with Value Networks for Neural Machine Translation

Introduction

Background Knowledge

- NMT

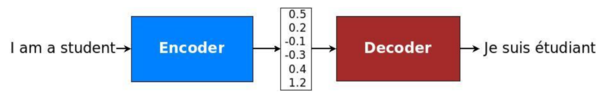

Neural Machine Translation (NMT), which is based on deep neural networks and provides an end-to-end solution to machine translation, uses an RNN-based encoder-decoder architecture to model the entire translation process. Specifically, an NMT system first reads the source sentence with an encoder to build a "thought" vector, a sequence of numbers that represents the sentence meaning; a decoder then processes this "thought" vector to emit a translation (Figure 1) [1].

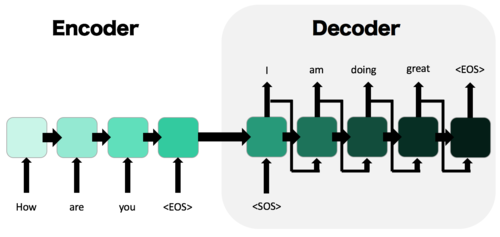

- Sequence-to-Sequence (Seq2Seq) Model

- Two RNNs: an encoder RNN and a decoder RNN.
  1) The input is passed through the encoder, and its final hidden state, the “thought vector”, is passed to the decoder as its initial hidden state.
  2) The decoder is given the start-of-sequence token, <SOS>, and iteratively produces output until it emits the end-of-sequence token, <EOS>.
- Commonly used in text generation, machine translation, and related problems.
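The two steps above can be sketched as a small, untrained toy model. This is only an illustration of the data flow, not the architecture used in the paper: the token ids `SOS` and `EOS`, the sizes `VOCAB` and `HIDDEN`, the randomly initialised `params`, and all helper names are assumptions made for this example.

```python
import math
import random

SOS, EOS = 0, 1        # hypothetical ids for <SOS> and <EOS>
VOCAB, HIDDEN = 6, 4   # toy vocabulary and hidden-state sizes (assumed)

def make_matrix(rows, cols, rng):
    """Random weights; a real system would learn these from data."""
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def rnn_step(w_in, w_rec, token, h):
    """One vanilla RNN step: h' = tanh(W_in[:, token] + W_rec h)."""
    x = [row[token] for row in w_in]   # one-hot input selects a column
    rec = matvec(w_rec, h)
    return [math.tanh(a + b) for a, b in zip(x, rec)]

def encode(src, params):
    """Step 1: run the encoder; its final hidden state is the thought vector."""
    h = [0.0] * HIDDEN
    for tok in src:
        h = rnn_step(params["enc_in"], params["enc_rec"], tok, h)
    return h

def decode_greedy(thought, params, max_len=10):
    """Step 2: start from <SOS> and emit tokens until <EOS> or max_len."""
    h, tok, out = thought, SOS, []
    for _ in range(max_len):
        h = rnn_step(params["dec_in"], params["dec_rec"], tok, h)
        scores = matvec(params["out"], h)
        tok = max(range(VOCAB), key=scores.__getitem__)  # highest-scoring word
        if tok == EOS:
            break
        out.append(tok)
    return out

rng = random.Random(0)
params = {
    "enc_in": make_matrix(HIDDEN, VOCAB, rng),
    "enc_rec": make_matrix(HIDDEN, HIDDEN, rng),
    "dec_in": make_matrix(HIDDEN, VOCAB, rng),
    "dec_rec": make_matrix(HIDDEN, HIDDEN, rng),
    "out": make_matrix(VOCAB, HIDDEN, rng),
}
thought = encode([2, 3, 4], params)          # source sentence as token ids
translation = decode_greedy(thought, params)
```

Because the weights are random, the emitted token ids are meaningless; the point is only that the decoder's sole view of the source sentence is the encoder's final hidden state.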

- Beam Search

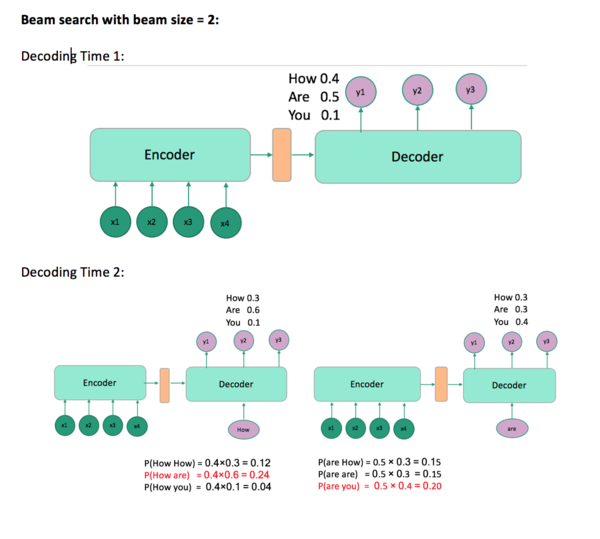

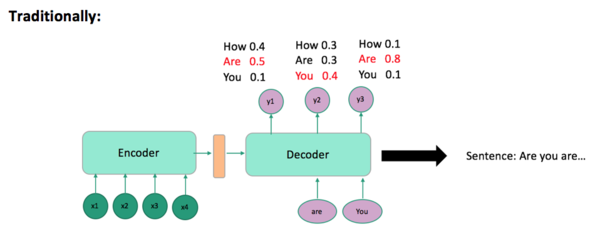

Decoding process:

Problem: choosing the word with the highest score at each time step t does not necessarily yield the sentence with the highest overall probability (Figure 3). Beam search addresses this problem (Figure 4). It has a beam size m: at each time step t, it keeps the top m partial translations (proposals) and continues decoding from each of them. In the end, the output is chosen for its probability at the sentence level rather than the word level, although beam search is still a heuristic and is not guaranteed to find the single most probable sentence.
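A minimal sketch of this behaviour, using a hand-made probability table rather than a trained NMT model. The table `TOY_MODEL`, the words in it, and the fixed sentence length of two are all assumptions chosen so that the greedy choice is visibly suboptimal; greedy decoding is simply beam search with m = 1.

```python
import math

# Hypothetical toy model: P(next word | prefix) for two-word sentences.
TOY_MODEL = {
    (): {"A": 0.6, "B": 0.4},
    ("A",): {"x": 0.55, "y": 0.45},
    ("B",): {"x": 0.9, "y": 0.1},
}

def beam_search(model, beam_size, length=2):
    """Keep the `beam_size` best prefixes at every step, scored by log-probability."""
    beams = [((), 0.0)]  # (prefix, cumulative log-probability)
    for _ in range(length):
        candidates = [
            (prefix + (word,), score + math.log(p))
            for prefix, score in beams
            for word, p in model[prefix].items()
        ]
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = candidates[:beam_size]
    return beams[0]  # best complete sentence among the surviving beams

greedy_sentence, greedy_score = beam_search(TOY_MODEL, beam_size=1)
beam_sentence, beam_score = beam_search(TOY_MODEL, beam_size=2)
```

With m = 1 the search commits to "A" (probability 0.6) and ends with the sentence ("A", "x") at probability 0.6 × 0.55 = 0.33. With m = 2 the prefix "B" survives the first step, and the search returns ("B", "x") at probability 0.4 × 0.9 = 0.36.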