Hierarchical Question-Image Co-Attention for Visual Question Answering

Abstract: A number of recent works have proposed attention models for Visual Question Answering (VQA) that generate spatial maps highlighting image regions relevant to answering the question. In this paper, we argue that in addition to modeling "where to look" or visual attention, it is equally important to model "what words to listen to" or question attention. We present a novel co-attention model for VQA that jointly reasons about image and question attention. In addition, our model reasons about the question (and consequently the image via the co-attention mechanism) in a hierarchical fashion via a novel 1-dimensional convolutional neural network (CNN). Our model improves the state-of-the-art on the VQA dataset from 60.3% to 60.5%, and from 61.6% to 63.3% on the COCO-QA dataset. By using ResNet, the performance is further improved to 62.1% for VQA and 65.4% for COCO-QA.

Introduction

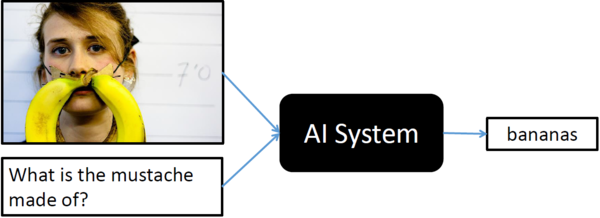

Visual Question Answering (VQA) is a recent problem in computer vision and natural language processing that has garnered a large amount of interest from the deep learning, computer vision, and natural language processing communities. In VQA, an algorithm needs to answer text-based questions about images in natural language as illustrated in Figure 1.

Recently, visual-attention based models have gained traction for VQA tasks, where the attention mechanism typically produces a spatial map highlighting the image regions relevant to answering the question about the image. However, to answer the question correctly, a machine needs to understand or "attend" to not only regions in the image but also parts of the question. In this paper, the authors propose a novel co-attention technique for VQA that combines "where to look" (visual attention) with "what words to listen to" (question attention), allowing their model to jointly reason about the image and the question and thereby improve upon existing state-of-the-art results.

"attention" models

Please feel free to skip this section if you already know about "attention" in the context of deep learning. Since this paper talks about "attention" almost everywhere, I decided to include this section to give a brief and very informal introduction to the concept of the "attention" mechanism, especially visual "attention"; the idea can, however, be extended to any other type of "attention".

Visual attention in CNNs is inspired by the biological visual system. As humans, we have the ability to focus our cognitive processing on the subset of the environment that is most relevant to the given situation. Imagine you witness a bank robbery where the robbers are trying to escape in a car: as a good citizen, you will immediately focus your attention on the number plate and other physical features of the car and the robbers in order to give your testimony later. Such selective visual attention for a given context can also be implemented on traditional CNNs, making them better suited to certain tasks, and it even helps the algorithm designer visualize which localized features were more important than others.

Role of "visual-attention" in VQA

This section is not part of the paper being summarized; however, it gives an overview of how visual attention can be incorporated into the training of a network for VQA tasks, which should help readers absorb and understand the ideas actually proposed in the paper more easily.

Generally speaking, the most common and easiest-to-implement form of the "attention" mechanism is called "soft attention". In soft attention, the network learns a conditional distribution [math]\displaystyle{ P(L_i|c),\ i \in [1,n] }[/math] that represents the importance of the features extracted from each of the [math]\displaystyle{ n }[/math] discrete locations within the image, conditioned on some context vector [math]\displaystyle{ c }[/math]. In other words, given [math]\displaystyle{ n }[/math] features [math]\displaystyle{ L = [L_1, L_2, ..., L_n] }[/math] from [math]\displaystyle{ n }[/math] different regions within the image (top-left, top-middle, top-right, and so on), the "attention" module learns a parametric function [math]\displaystyle{ F(c;\theta) }[/math] that outputs the importance of each individual feature for a given context vector [math]\displaystyle{ c }[/math], that is, a discrete probability distribution of size [math]\displaystyle{ n }[/math], which can be obtained by applying a softmax over the [math]\displaystyle{ n }[/math] scores.

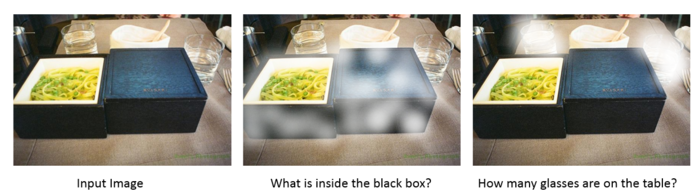

In order to incorporate visual attention into a VQA task, one can define the context vector [math]\displaystyle{ c }[/math] as a representation of the visual question asked by a user (using an RNN, perhaps an LSTM) and generate a localized attention map which can then be used for end-to-end training, as shown in Figure 2. Most existing work in the literature on visual attention for VQA consists of further specializations of similar ideas.
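To make the idea a bit more concrete, here is a minimal NumPy sketch of question-conditioned soft attention. It is only an illustration and not the model from the paper: the additive tanh scoring function, the weight names `W_f`, `W_c` and `w`, and all dimensions are assumptions chosen for the example, and the weights are random rather than learned. In a real system the context vector would come from a question encoder and everything would be trained end-to-end.

```python
import numpy as np


def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    shifted = x - np.max(x)
    exp = np.exp(shifted)
    return exp / exp.sum()


def soft_attention(features, context, W_f, W_c, w):
    """Soft attention over n region features, conditioned on a context vector.

    features: (n, d) array, one row per image region (L_1 ... L_n)
    context:  (k,) array, e.g. a question encoding (the vector c)
    W_f, W_c, w: hypothetical parameters of an additive scoring function
    Returns the attention weights (n,) and the attended feature (d,).
    """
    # Score every region against the context; the context term is
    # broadcast across all n rows.
    hidden = np.tanh(features @ W_f + context @ W_c)  # (n, h)
    scores = hidden @ w                                # (n,)
    # Softmax turns the n scores into a distribution P(L_i | c).
    weights = softmax(scores)
    # The attended feature is the weighted sum of the region features.
    attended = weights @ features                      # (d,)
    return weights, attended


# Toy setup: a 3x3 grid of regions with random features and parameters.
rng = np.random.default_rng(0)
n, d, k, h = 9, 16, 8, 32
features = rng.normal(size=(n, d))
context = rng.normal(size=k)
W_f = rng.normal(size=(d, h)) * 0.1
W_c = rng.normal(size=(k, h)) * 0.1
w = rng.normal(size=h)

weights, attended = soft_attention(features, context, W_f, W_c, w)
```

Reshaping `weights` to the 3x3 grid gives the kind of spatial attention map discussed above, and `attended` is the single feature vector a downstream answer classifier would consume.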

Motivation

So far, all attention models for VQA in the literature have focused on the problem of identifying "where to look", or visual attention. In this paper, the authors argue that the problem of identifying "which words to listen to", or question attention, is equally important. Consider the questions "how many horses are in this image?" and "how many horses can you see in this image?". They have the same meaning, essentially captured by the first three words. A machine that attends to the first three words would arguably be more robust to linguistic variations irrelevant to the meaning and answer of the question. Motivated by this observation, in addition to reasoning about visual attention, the authors also address the problem of question attention.

Contributions

- A novel co-attention mechanism for VQA that jointly performs question-guided visual attention and image-guided question attention.
- A hierarchical architecture to represent the question, and consequently construct image-question co-attention maps at 3 different levels: word level, phrase level and question level.
- A novel convolution-pooling strategy at the phrase level to adaptively select the phrase sizes whose representations are passed to the question-level representation.
- Results on VQA and COCO-QA, and ablation studies to quantify the roles of different components in the model.