stat940W25

Notes on Exercises

Exercises are numbered using a two-part system, where the first number represents the lecture number and the second number represents the exercise number. For example:

- 1.1 refers to the first exercise in Lecture 1.

- 2.3 refers to the third exercise in Lecture 2.

Students are encouraged to complete these exercises as they follow the lecture content to deepen their understanding.

Exercise 1.1

Level: ** (Moderate)

Exercise Types: Novel

Each exercise you contribute should fall into one of the following categories:

- Novel: Preferred – An original exercise created by you.

- Modified: Valued – An exercise adapted or significantly altered from an existing source.

- Copied: Permissible – An exercise reproduced exactly as it appears in the source.

References: Source (e.g., a book or other resource; include the URL if it is a webpage), Chapter, Page Number.

Question

Prove that the Perceptron Learning Algorithm converges in a finite number of steps if the dataset is linearly separable.

Hint: Assume that the dataset [math]\displaystyle{ \{(\mathbf{x}_i, y_i)\}_{i=1}^N }[/math] is linearly separable, where [math]\displaystyle{ \mathbf{x}_i \in \mathbb{R}^d }[/math] are the input vectors, and [math]\displaystyle{ y_i \in \{-1, 1\} }[/math] are their corresponding labels. Then there exists a weight vector [math]\displaystyle{ \mathbf{w}^* }[/math] and a bias [math]\displaystyle{ b^* }[/math] such that [math]\displaystyle{ y_i (\mathbf{w}^* \cdot \mathbf{x}_i + b^*) \gt 0 }[/math] for all [math]\displaystyle{ i }[/math]. Use this fact to bound the number of updates made by the algorithm.

Solution

Step 1: Linear Separability Assumption

If the dataset is linearly separable, there exists a weight vector [math]\displaystyle{ \mathbf{w}^* }[/math] and a bias [math]\displaystyle{ b^* }[/math] such that: [math]\displaystyle{ y_i (\mathbf{w}^* \cdot \mathbf{x}_i + b^*) \gt 0 \quad \forall i = 1, 2, \dots, N. }[/math] Without loss of generality, let [math]\displaystyle{ \| \mathbf{w}^* \| = 1 }[/math] (normalize [math]\displaystyle{ \mathbf{w}^* }[/math]).

Step 2: Perceptron Update Rule

The Perceptron algorithm updates the weight vector [math]\displaystyle{ \mathbf{w} }[/math] and bias [math]\displaystyle{ b }[/math] as follows:

- Initialize [math]\displaystyle{ \mathbf{w}_0 = 0 }[/math] and [math]\displaystyle{ b_0 = 0 }[/math].

- For each misclassified point [math]\displaystyle{ (\mathbf{x}_i, y_i) }[/math], update:

[math]\displaystyle{ \mathbf{w} \leftarrow \mathbf{w} + y_i \mathbf{x}_i, \quad b \leftarrow b + y_i. }[/math]

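Define the margin [math]\displaystyle{ \gamma }[/math] of the dataset as: [math]\displaystyle{ \gamma = \min_{i} y_i (\mathbf{w}^* \cdot \mathbf{x}_i + b^*). }[/math] Since the dataset is linearly separable, [math]\displaystyle{ \gamma \gt 0 }[/math]. To keep the notation light, the bias is absorbed into the weight vector in what follows: each input is augmented with a constant 1, so [math]\displaystyle{ \mathbf{w} }[/math] stands for [math]\displaystyle{ (\mathbf{w}, b) }[/math], [math]\displaystyle{ \mathbf{x}_i }[/math] for [math]\displaystyle{ (\mathbf{x}_i, 1) }[/math], and the normalization in Step 1 applies to [math]\displaystyle{ (\mathbf{w}^*, b^*) }[/math].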

Step 3: Bounding the Number of Updates

Let [math]\displaystyle{ \mathbf{w}_t }[/math] be the weight vector after the [math]\displaystyle{ t }[/math]-th update. Define: [math]\displaystyle{ M = \max_i \| \mathbf{x}_i \|^2, }[/math] the maximum squared norm of any input vector.

Growth of [math]\displaystyle{ \| \mathbf{w}_t \|^2 }[/math]

Suppose the [math]\displaystyle{ (t+1) }[/math]-th update is made on a misclassified point [math]\displaystyle{ (\mathbf{x}_i, y_i) }[/math]. Then: [math]\displaystyle{ \| \mathbf{w}_{t+1} \|^2 = \| \mathbf{w}_t + y_i \mathbf{x}_i \|^2 = \| \mathbf{w}_t \|^2 + 2 y_i (\mathbf{w}_t \cdot \mathbf{x}_i) + \| \mathbf{x}_i \|^2. }[/math] Since the point is misclassified, [math]\displaystyle{ y_i (\mathbf{w}_t \cdot \mathbf{x}_i) \leq 0 }[/math] (equality occurs, for example, at the first update, where [math]\displaystyle{ \mathbf{w}_0 = 0 }[/math]). Thus: [math]\displaystyle{ \| \mathbf{w}_{t+1} \|^2 \leq \| \mathbf{w}_t \|^2 + \| \mathbf{x}_i \|^2 \leq \| \mathbf{w}_t \|^2 + M. }[/math] By induction, starting from [math]\displaystyle{ \mathbf{w}_0 = 0 }[/math], after [math]\displaystyle{ t }[/math] updates: [math]\displaystyle{ \| \mathbf{w}_t \|^2 \leq tM. }[/math]

Lower Bound on [math]\displaystyle{ \mathbf{w}_t \cdot \mathbf{w}^* }[/math]

Each update increases [math]\displaystyle{ \mathbf{w}_t \cdot \mathbf{w}^* }[/math] by at least [math]\displaystyle{ \gamma }[/math]: [math]\displaystyle{ \mathbf{w}_{t+1} \cdot \mathbf{w}^* = (\mathbf{w}_t + y_i \mathbf{x}_i) \cdot \mathbf{w}^* = \mathbf{w}_t \cdot \mathbf{w}^* + y_i (\mathbf{x}_i \cdot \mathbf{w}^*). }[/math] Since [math]\displaystyle{ y_i (\mathbf{x}_i \cdot \mathbf{w}^*) \geq \gamma }[/math] by the definition of the margin, we have: [math]\displaystyle{ \mathbf{w}_{t+1} \cdot \mathbf{w}^* \geq \mathbf{w}_t \cdot \mathbf{w}^* + \gamma. }[/math] By induction, starting from [math]\displaystyle{ \mathbf{w}_0 = 0 }[/math]: [math]\displaystyle{ \mathbf{w}_t \cdot \mathbf{w}^* \geq t \gamma. }[/math]

Combining the Results

The Cauchy-Schwarz inequality gives: [math]\displaystyle{ \mathbf{w}_t \cdot \mathbf{w}^* \leq \| \mathbf{w}_t \| \| \mathbf{w}^* \| = \| \mathbf{w}_t \|. }[/math] Thus: [math]\displaystyle{ t \gamma \leq \| \mathbf{w}_t \| \leq \sqrt{tM}. }[/math] Squaring both sides: [math]\displaystyle{ t^2 \gamma^2 \leq tM. }[/math] Dividing through by [math]\displaystyle{ t }[/math] (assuming [math]\displaystyle{ t \gt 0 }[/math]): [math]\displaystyle{ t \leq \frac{M}{\gamma^2}. }[/math]

Step 4: Conclusion

The Perceptron Learning Algorithm converges after at most [math]\displaystyle{ \frac{M}{\gamma^2} }[/math] updates, which is finite. This proves that the algorithm terminates when the dataset is linearly separable.
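The bound can be checked numerically on a small example. The following is a minimal sketch, not part of the original solution: the toy data and the separator `w_star` are hypothetical values chosen for illustration, and the bias is handled by appending a constant 1 to each input.

```python
import math

def perceptron(points, labels, max_epochs=1000):
    """Run the perceptron on augmented inputs (x, 1); return weights and update count."""
    aug = [list(x) + [1.0] for x in points]  # absorb the bias into the weights
    w = [0.0] * len(aug[0])
    updates = 0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(aug, labels):
            # A point is treated as misclassified if it is on the wrong side
            # of the boundary or exactly on it.
            if y * sum(wi * xi for wi, xi in zip(w, x)) <= 0:
                w = [wi + y * xi for wi, xi in zip(w, x)]
                updates += 1
                mistakes += 1
        if mistakes == 0:
            break
    return w, updates

# Hypothetical toy data, chosen only for illustration.
points = [(2.0, 1.0), (1.0, 3.0), (-1.0, -2.0), (-2.0, -1.0)]
labels = [1, 1, -1, -1]

# A known unit-norm separator in augmented form: (w*, b*) = (1, 1, 0) / sqrt(2).
w_star = [1 / math.sqrt(2), 1 / math.sqrt(2), 0.0]
aug = [list(x) + [1.0] for x in points]
gamma = min(y * sum(a * b for a, b in zip(w_star, x)) for x, y in zip(aug, labels))
M = max(sum(xi * xi for xi in x) for x in aug)

w, updates = perceptron(points, labels)
bound = M / gamma ** 2
print(f"updates = {updates}, bound M / gamma^2 = {bound:.2f}")
```

Any unit-norm separator can be used for `w_star`; a separator with a larger margin simply gives a tighter bound.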

Exercise 1.2

Level: * (Easy)

Exercise Types: Modified

References: Simon J.D. Prince. Understanding Deep Learning. 2024

This problem generalizes Problem 4.10 in this textbook to [math]\displaystyle{ N }[/math] inputs and [math]\displaystyle{ M }[/math] outputs.

Question

(a) Consider a deep neural network with a single input, a single output, and [math]\displaystyle{ K }[/math] hidden layers, each containing [math]\displaystyle{ D }[/math] hidden units. How many parameters does this network have in total?

(b) Now, generalize the problem: if the number of inputs is [math]\displaystyle{ N }[/math] and the number of outputs is [math]\displaystyle{ M }[/math], how many parameters does this network have in total?

Solution

(a) Total number of parameters when there is a single input and output:

For the first layer, the input size is [math]\displaystyle{ 1 }[/math] and the output size is [math]\displaystyle{ D }[/math]. Therefore, the number of weights is [math]\displaystyle{ 1D }[/math], and the number of biases is [math]\displaystyle{ D }[/math].

Number of parameters: [math]\displaystyle{ D + D = 2D }[/math]

For the connections between hidden layers [math]\displaystyle{ i \to i+1 }[/math], [math]\displaystyle{ i \in \{1, \dots, K-1\} }[/math]: Each hidden layer connects [math]\displaystyle{ D }[/math] units to another [math]\displaystyle{ D }[/math] units. Therefore, for each layer, the number of weights is [math]\displaystyle{ D^2 }[/math], and the number of biases is [math]\displaystyle{ D }[/math].

Number of parameters for all [math]\displaystyle{ K-1 }[/math] hidden layers: [math]\displaystyle{ (K-1)(D^2 + D) }[/math]

For the output layer, the number of weights is [math]\displaystyle{ D }[/math], and the number of biases is [math]\displaystyle{ 1 }[/math].

Number of parameters: [math]\displaystyle{ D + 1 }[/math]

Therefore, the total number of parameters is [math]\displaystyle{ 2D + (K-1)(D^2 + D) + D + 1 }[/math].

(b) Total number of parameters for [math]\displaystyle{ N }[/math] inputs and [math]\displaystyle{ M }[/math] outputs:

In this case, the number of parameters for the first layer becomes [math]\displaystyle{ ND+D }[/math], while the number of parameters for the output layer becomes [math]\displaystyle{ DM+M }[/math].

Therefore, in total, the number of parameters is [math]\displaystyle{ ND+D+(K-1)(D^2+D)+MD+M }[/math]
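The counting argument can be sketched as a small function. The function name and the example sizes below are illustrative assumptions, not taken from the textbook; the sketch assumes at least one hidden layer.

```python
def count_parameters(n_inputs, n_outputs, n_hidden_layers, width):
    """Count weights and biases of a fully connected network
    with K = n_hidden_layers hidden layers of D = width units each."""
    first = n_inputs * width + width                          # N*D weights + D biases
    hidden = (n_hidden_layers - 1) * (width * width + width)  # K-1 blocks of D^2 + D
    last = width * n_outputs + n_outputs                      # D*M weights + M biases
    return first + hidden + last

# Part (a): single input and output, with K = 3 and D = 4.
print(count_parameters(1, 1, 3, 4))  # 2*4 + 2*(16 + 4) + 4 + 1 = 53
# Part (b): N = 5 inputs and M = 2 outputs, same K and D.
print(count_parameters(5, 2, 3, 4))  # 24 + 40 + 10 = 74
```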

Exercise 1.3

Level: * (Easy)

Exercise Types: Modified

References: Simon J.D. Prince. Understanding Deep Learning. MIT Press, 2023

This problem is modified from the background mathematics problem, Chapter 1, Question 1.

Question

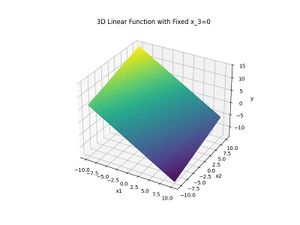

A single linear equation with three inputs associates a value [math]\displaystyle{ y }[/math] with each point in a 3D space [math]\displaystyle{ (x_1,x_2,x_3) }[/math]. Is it possible to visualize this? What value is at position [math]\displaystyle{ (0,0,0) }[/math]?

We add an inverse problem: If [math]\displaystyle{ y, \omega_1, \omega_2, \omega_3 }[/math] and [math]\displaystyle{ \beta }[/math] are known, derive a system of equations to solve for the input values [math]\displaystyle{ x_1, x_2, x_3 }[/math] that produce a specific output value of [math]\displaystyle{ y }[/math]. Under what conditions is this problem solvable?

Solution

A single linear equation with three inputs is of the form:

[math]\displaystyle{ y = \beta + \omega_1 x_1 + \omega_2 x_2 + \omega_3 x_3 }[/math]

where [math]\displaystyle{ \beta }[/math] is the offset, and [math]\displaystyle{ \omega_1, \omega_2, \omega_3 }[/math] are weights for the inputs [math]\displaystyle{ x_1, x_2, x_3 }[/math].

We can define this function in code as follows:

    def linear_function_3D(x1, x2, x3, beta, omega1, omega2, omega3):
        # Offset plus the weighted sum of the three inputs
        y = beta + omega1 * x1 + omega2 * x2 + omega3 * x3
        return y

Given [math]\displaystyle{ \beta = 0.5, \omega_1 = -1.0, \omega_2 = 0.4 }[/math] and [math]\displaystyle{ \omega_3 = -0.3 }[/math],

[math]\displaystyle{ y = \beta + \omega_1 \cdot 0 + \omega_2 \cdot 0 + \omega_3 \cdot 0 }[/math]

Thus, [math]\displaystyle{ y(0, 0, 0) = 0.5. }[/math]

To visualize, we can fix [math]\displaystyle{ x_3 = 0 }[/math] and let [math]\displaystyle{ x_1, x_2 }[/math] vary, and generate the [math]\displaystyle{ y }[/math]-values using the equation.

Here is the code:

    import numpy as np
    import matplotlib.pyplot as plt

    # Generate grid for x1 and x2, fix x3 = 0
    x1 = np.linspace(-10, 10, 100)
    x2 = np.linspace(-10, 10, 100)
    x1, x2 = np.meshgrid(x1, x2)
    x3 = 0

    # Define coefficients
    beta = 0.5
    omega1 = -1.0
    omega2 = 0.4
    omega3 = -0.3

    # Compute y-values (uses linear_function_3D defined above)
    y = linear_function_3D(x1, x2, x3, beta, omega1, omega2, omega3)

    # Visualization
    fig = plt.figure(figsize=(8, 6))
    ax = fig.add_subplot(111, projection='3d')
    ax.plot_surface(x1, x2, y, cmap='viridis')
    ax.set_xlabel('x1')
    ax.set_ylabel('x2')
    ax.set_zlabel('y')
    plt.title('3D Linear Function with Fixed x3=0')
    plt.show()

The plot is shown below:

For the inverse problem, given [math]\displaystyle{ y, \beta, \omega_1, \omega_2, \omega_3, }[/math] we can solve for [math]\displaystyle{ x_1, x_2, x_3 }[/math] as follows:

[math]\displaystyle{ \begin{bmatrix} \omega_1 & \omega_2 & \omega_3 \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix} = y - \beta }[/math]

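The problem is solvable if [math]\displaystyle{ \omega }[/math] is not the zero vector, that is, if at least one weight contributes to the equation. The solution is then not unique: one equation in three unknowns defines a whole plane of solutions, and a least-squares solver returns the one with the smallest norm. If [math]\displaystyle{ \omega }[/math] is the zero vector, a solution exists only when [math]\displaystyle{ y = \beta }[/math].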

    import numpy as np

    y = 10.0
    beta = 1.0
    omega = [2, -1, 0.5]
    rhs = y - beta

    # Solve the underdetermined system using least squares (minimum-norm solution)
    x_vec = np.linalg.lstsq(np.array([omega]), np.array([rhs]), rcond=None)[0]
    print(f"Solution for x: {x_vec}")

Exercise 1.4

Level: * (Easy)

Exercise Types: Novel

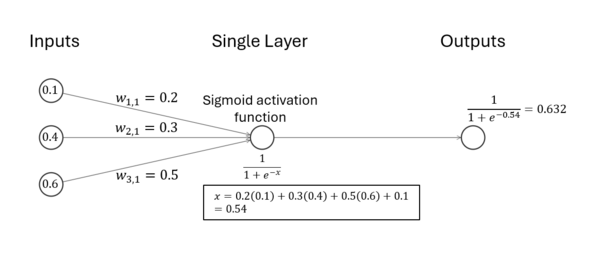

Question

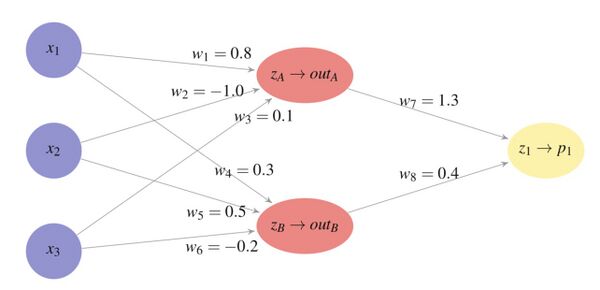

Consider a feedforward model: compute the output of a single-layer neural network with 3 inputs and 1 output for each of the activation functions listed below.

Assuming:

- Input vector: [math]\displaystyle{ x = (0.1, 0.4, 0.6) }[/math]

- Weights: [math]\displaystyle{ w = (0.2, 0.3, 0.5) }[/math]

- Bias: [math]\displaystyle{ b = 0.1 }[/math]

- a). Sigmoid activation function: [math]\displaystyle{ f(z) = \frac{1}{1 + e^{-z}} }[/math]

- b). ReLU activation function: [math]\displaystyle{ f(z) = \max(0, z) }[/math]

- c). Tanh activation function: [math]\displaystyle{ f(z) = \tanh(z) = \frac{e^z - e^{-z}}{e^z + e^{-z}} }[/math]

Solution

1. Compute the weighted sum: [math]\displaystyle{ z = w \cdot x + b = (0.2)(0.1) + (0.3)(0.4) + (0.5)(0.6) + 0.1 }[/math]

Breaking this down step-by-step: [math]\displaystyle{ z = 0.02 + 0.12 + 0.3 + 0.1 = 0.54 }[/math]

2. a). Apply the sigmoid activation function: [math]\displaystyle{ f(z) = \frac{1}{1 + e^{-z}} }[/math]

Substituting [math]\displaystyle{ z = 0.54 }[/math]: [math]\displaystyle{ f(z) = \frac{1}{1 + e^{-0.54}} \approx \frac{1}{1 + 0.583} \approx 0.632 }[/math]

Thus, the final output is 0.632.

b). Similarly, apply the ReLU activation function: [math]\displaystyle{ f(z) = \max(0, z) }[/math]

Substituting [math]\displaystyle{ z = 0.54 }[/math]: [math]\displaystyle{ f(z) = \max(0, 0.54) = 0.54 }[/math]

c). Finally, apply the Tanh activation function: [math]\displaystyle{ f(z) = \frac{e^z - e^{-z}}{e^z + e^{-z}} }[/math]

Substituting [math]\displaystyle{ z = 0.54 }[/math]: [math]\displaystyle{ f(z) = \frac{e^{0.54} - e^{-0.54}}{e^{0.54} + e^{-0.54}} \approx \frac{1.716 - 0.583}{1.716 + 0.583} \approx \frac{1.133}{2.299} \approx 0.493 }[/math]
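The three results can be reproduced with a few lines of standard-library Python. This is a small check of the arithmetic above, using the inputs, weights, and bias given in the question; the variable names are illustrative.

```python
import math

x = [0.1, 0.4, 0.6]
w = [0.2, 0.3, 0.5]
b = 0.1

# Weighted sum z = w . x + b
z = sum(wi * xi for wi, xi in zip(w, x)) + b

sigmoid_out = 1.0 / (1.0 + math.exp(-z))
relu_out = max(0.0, z)
tanh_out = math.tanh(z)

print(round(z, 2), round(sigmoid_out, 3), round(relu_out, 2), round(tanh_out, 3))
```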

Exercise 1.5

Level: * (Easy)

Exercise Types: Novel

Question

1.2012: ________'s ImageNet victory brings mainstream attention.

2.2016: Google's ________ uses deep learning to defeat a Go world champion.

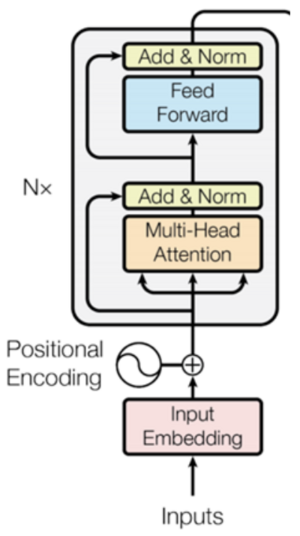

3.2017: ________ architecture revolutionizes Natural Language Processing.

Solution

1. AlexNet

2. AlphaGo

3. Transformer

Key Milestones in Deep Learning

- 2006: Deep Belief Networks. The modern era of deep learning begins.

- 2012: AlexNet's ImageNet victory brings mainstream attention.

- 2014-2015: Introduction of Generative Adversarial Networks (GANs).

- 2016: Google's AlphaGo uses deep learning to defeat a Go world champion.

- 2017: Transformer architecture revolutionizes Natural Language Processing.

- 2018-2019: BERT and GPT-2 set new benchmarks in NLP.

- 2020: GPT-3 demonstrates advanced language understanding and generation.

- 2021: AlphaFold 2 achieves breakthroughs in protein structure prediction.

- 2021-2022: Diffusion Models (e.g., DALL-E 2, Stable Diffusion) achieve state-of-the-art in image and video generation.

- 2022: ChatGPT popularizes conversational AI and large language models (LLMs).

Exercise 1.6

Level: * (Easy)

Exercise Type: Novel

Question

a) What are some common examples of first-order search strategies in neural network optimization, and why are first-order methods generally preferred over second-order methods?

b) What is the difference between a deep neural network and a shallow neural network, and how many hidden layers does each typically have?

c) Prove that a perceptron cannot converge for the XOR problem.

Solution

a)

Common examples of first-order search strategies in neural network optimization include Gradient Descent (GD), Stochastic Gradient Descent (SGD), Momentum, and Adam. These methods rely on gradients (first derivatives) of the loss function to update model parameters, making them computationally efficient and scalable. First-order methods are preferred due to their efficiency, scalability to large datasets, and lower memory requirements compared to second-order methods. While second-order methods can converge faster, first-order methods like Adam balance performance and resource usage well, especially in large-scale networks.
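As a minimal sketch of a first-order method, plain gradient descent on a simple quadratic is shown below. The objective, learning rate, and step count are hypothetical choices for illustration; the point is that each update needs only the gradient, which has one entry per parameter, whereas a second-order method would also need the Hessian, which has one entry per pair of parameters.

```python
def grad(w):
    """Gradient of f(w) = (w0 - 1)^2 + 2 * (w1 + 2)^2."""
    return [2.0 * (w[0] - 1.0), 4.0 * (w[1] + 2.0)]

w = [0.0, 0.0]        # initial parameters
learning_rate = 0.1   # illustrative step size

for _ in range(200):
    g = grad(w)
    # First-order update: move against the gradient.
    w = [wi - learning_rate * gi for wi, gi in zip(w, g)]

print([round(wi, 4) for wi in w])  # approaches the minimizer (1, -2)
```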

b)

A deep neural network typically has more than 2 hidden layers, allowing it to learn complex, abstract features at each layer. A shallow neural network usually has 1 or 2 hidden layers. Therefore, networks with more than 2 hidden layers are considered deep, while those with fewer layers are considered shallow.

c)

Step 1: XOR Dataset

The XOR problem has the following data points and labels:

| x₁ | x₂ | y |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

Step 2: Perceptron Decision Boundary

The perceptron decision boundary is defined as:

[math]\displaystyle{ z = w_1 x_1 + w_2 x_2 + b }[/math]

A point is classified as:

- y = 1 if z > 0

- y = 0 if z < 0

For the XOR dataset, we derive inequalities for each data point.

Step 3: Derive Inequalities

1. For (x₁, x₂) = (0, 0), y = 0:

b < 0

2. For (x₁, x₂) = (0, 1), y = 1:

w₂ + b > 0

3. For (x₁, x₂) = (1, 0), y = 1:

w₁ + b > 0

4. For (x₁, x₂) = (1, 1), y = 0:

w₁ + w₂ + b < 0

Step 4: Attempt to Solve

From the inequalities:

1. [math]\displaystyle{ b \lt 0 }[/math]

2. [math]\displaystyle{ w_2 + b \gt 0 \Rightarrow w_2 \gt -b }[/math]

3. [math]\displaystyle{ w_1 + b \gt 0 \Rightarrow w_1 \gt -b }[/math]

4. [math]\displaystyle{ w_1 + w_2 + b \lt 0 \Rightarrow w_1 + w_2 \lt -b }[/math]

Now, add inequalities (2) and (3):

[math]\displaystyle{ w_1 + w_2 \gt -2b }[/math]

But compare this with inequality (4):

[math]\displaystyle{ w_1 + w_2 \lt -b }[/math]

Together these require [math]\displaystyle{ -2b \lt w_1 + w_2 \lt -b }[/math], hence [math]\displaystyle{ -2b \lt -b }[/math], which means [math]\displaystyle{ b \gt 0 }[/math]. This contradicts inequality (1), [math]\displaystyle{ b \lt 0 }[/math].

Therefore, the XOR dataset is not linearly separable, and the perceptron cannot converge for the XOR problem.
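The conclusion can also be observed empirically. The sketch below is an illustration rather than a proof: it runs a perceptron for a fixed number of passes (the `max_epochs` cap is an arbitrary assumption) on XOR and, for comparison, on the linearly separable AND function. Labels 0 are mapped to -1 so that the usual update rule applies.

```python
def train_perceptron(points, labels, max_epochs=1000):
    """Return (weights, bias, converged) after at most max_epochs passes over the data."""
    w = [0.0] * len(points[0])
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(points, labels):
            # Update on every point that is misclassified or on the boundary.
            if y * (sum(wi * xi for wi, xi in zip(w, x)) + b) <= 0:
                w = [wi + y * xi for wi, xi in zip(w, x)]
                b += y
                mistakes += 1
        if mistakes == 0:
            return w, b, True   # a full pass with no mistakes: converged
    return w, b, False

points = [(0, 0), (0, 1), (1, 0), (1, 1)]
xor_labels = [-1, 1, 1, -1]    # XOR, with label 0 mapped to -1
and_labels = [-1, -1, -1, 1]   # AND, a linearly separable comparison case

_, _, xor_converged = train_perceptron(points, xor_labels)
_, _, and_converged = train_perceptron(points, and_labels)
print(xor_converged, and_converged)  # False True
```

Raising `max_epochs` does not change the XOR result, since a pass with zero mistakes would require a separating line, which the argument above rules out.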

Exercise 1.7

Level: * (Easy)

Exercise Type: Novel

Question

The sigmoid activation function is defined as: [math]\displaystyle{ \sigma(x) = \frac{1}{1 + e^{-x}}. }[/math]

(a) Derive the derivative of [math]\displaystyle{ \sigma(x) }[/math] with respect to [math]\displaystyle{ x }[/math], and show that: [math]\displaystyle{ \sigma'(x) = \sigma(x)(1 - \sigma(x)). }[/math]

(b) Use this property to explain why sigmoid activation is suitable for modeling probabilities in binary classification tasks.

Solution

(a) Derivative: Starting with [math]\displaystyle{ \sigma(x) = \frac{1}{1 + e^{-x}} }[/math]: [math]\displaystyle{ \sigma'(x) = \frac{d}{dx} \left( \frac{1}{1 + e^{-x}} \right) = \frac{e^{-x}}{(1 + e^{-x})^2}. }[/math] Simplifying using [math]\displaystyle{ \sigma(x) = \frac{1}{1 + e^{-x}} }[/math] and [math]\displaystyle{ 1 - \sigma(x) = \frac{e^{-x}}{1 + e^{-x}} }[/math], we find: [math]\displaystyle{ \sigma'(x) = \sigma(x)(1 - \sigma(x)). }[/math]

(b) The sigmoid function outputs values in the range [math]\displaystyle{ (0, 1) }[/math], making it ideal for modeling probabilities. The derivative [math]\displaystyle{ \sigma'(x) = \sigma(x)(1 - \sigma(x)) }[/math] is largest when [math]\displaystyle{ \sigma(x) = 0.5 }[/math] and shrinks toward 0 as the output approaches 0 or 1, so gradient updates during optimization are largest for uncertain predictions and small for very confident ones, which prevents drastic updates for very confident predictions. Note that the softmax function is also suitable for binary classification problems; with two classes it reduces to the sigmoid.
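The identity from part (a) can be sanity-checked against a central finite difference. This is a minimal sketch; the step size `h` and the test points are arbitrary choices.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x):
    """Closed-form derivative: sigma(x) * (1 - sigma(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)

h = 1e-6  # finite-difference step, an illustrative choice
for x in (-4.0, 0.0, 4.0):
    numeric = (sigmoid(x + h) - sigmoid(x - h)) / (2 * h)
    print(x, round(sigmoid_grad(x), 6), round(numeric, 6))
```

The derivative peaks at `x = 0`, where it equals 0.25, and is much smaller at `x = -4` and `x = 4`.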

Exercise 1.8

Level: * (Easy)

Exercise Types: Novel

Question

In classification, it is possible to minimize the number of misclassifications directly by using:

[math]\displaystyle{ \sum_{i=1}^n \mathbf{1}\Bigl(\text{sign}(\boldsymbol{\beta}^T \mathbf{x}_i + \beta_0) \neq y_i\Bigr) }[/math]

where [math]\displaystyle{ \mathbf{1}(\cdot) }[/math] is the indicator function, [math]\displaystyle{ \boldsymbol{\beta} }[/math] is the weight vector, and [math]\displaystyle{ \beta_0 }[/math] is the bias term. So, the loss function gives 1 for each incorrect response and 0 for each correct one.

(a) Why is this approach not commonly used in practice?

(b) Name and give formulas for two differentiable loss functions commonly employed in practice for binary classification tasks, explaining why they are more popular.

Solution

(a): The expression [math]\displaystyle{ \mathbf{1}\left(\text{sign}(\boldsymbol{\beta}^T \mathbf{x}_i + \beta_0) \neq y_i \right) }[/math] takes only the values 0 and 1. A small change in [math]\displaystyle{ \boldsymbol{\beta} }[/math] or [math]\displaystyle{ \beta_0 }[/math] either leaves the loss unchanged or makes it jump from 0 to 1 (or vice versa) for some sample. Because the loss is piecewise constant, its gradient with respect to [math]\displaystyle{ \boldsymbol{\beta} }[/math] or [math]\displaystyle{ \beta_0 }[/math] is zero wherever it exists and undefined at the jumps, so it gives no direction for improvement. Standard optimization techniques like gradient descent rely on continuous, differentiable loss functions whose partial derivatives can be used to update the parameters.

(b): Two alternative loss functions:

Hinge Loss: [math]\displaystyle{ \sum_{i=1}^n \max(0, 1 - y_i (\boldsymbol{\beta}^T \mathbf{x}_i + \beta_0)) }[/math]

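The hinge loss is often used in Support Vector Machines (SVMs) and works well when the data is linearly separable. It is differentiable everywhere except at the kink where [math]\displaystyle{ y_i (\boldsymbol{\beta}^T \mathbf{x}_i + \beta_0) = 1 }[/math], where a subgradient is used in practice.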

Logistic (Cross-Entropy) Loss: [math]\displaystyle{ \sum_{i=1}^n \log\left( 1 + \exp(-y_i (\boldsymbol{\beta}^T \mathbf{x}_i + \beta_0)) \right) }[/math]

The logistic loss (or cross-entropy loss) is commonly used in logistic regression and neural networks. It is differentiable so it works well for gradient-based optimization methods.
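To compare the three losses side by side, the sketch below evaluates them on a handful of scores. The scores and labels are hypothetical values standing in for [math]\displaystyle{ \boldsymbol{\beta}^T \mathbf{x}_i + \beta_0 }[/math] and [math]\displaystyle{ y_i \in \{-1, 1\} }[/math]; a score of exactly 0 is counted as an error, which is one possible convention.

```python
import math

def zero_one_loss(scores, labels):
    """Number of misclassified samples (a score of exactly 0 counts as an error)."""
    return sum(1 for s, y in zip(scores, labels) if y * s <= 0)

def hinge_loss(scores, labels):
    return sum(max(0.0, 1.0 - y * s) for s, y in zip(scores, labels))

def logistic_loss(scores, labels):
    return sum(math.log(1.0 + math.exp(-y * s)) for s, y in zip(scores, labels))

# Hypothetical scores and labels, chosen only for illustration.
scores = [2.0, 0.5, -0.3, -1.5]
labels = [1, 1, 1, -1]

print(zero_one_loss(scores, labels))
print(round(hinge_loss(scores, labels), 3))
print(round(logistic_loss(scores, labels), 3))
```

Only the third sample is misclassified, yet the hinge and logistic losses also penalize the second sample for being correct with a small margin, which is what gives gradient-based methods a useful signal.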

Exercise 1.9

Level: * (Easy)

Exercise Types: Novel

Question

How are neural networks modeled?

Solution

Neural networks are modeled on biological neurons. A neural network consists of layers of interconnected neurons, where each connection has an associated weight. The input layer receives the data features, with each input neuron corresponding to one feature of the dataset. The hidden layer consists of multiple neurons that transform the input data into intermediate representations using a combination of weights, biases, and activation functions, such as the sigmoid function [math]\displaystyle{ S(x) = \frac {1}{1+e^{-x}} }[/math]; the nonlinearity of the activation function is what allows the network to learn complex patterns. The output layer generates the final prediction, such as probabilities for classification or continuous values for regression. Neural networks learn to map inputs to outputs by adjusting weights during training to minimize the error between predicted and actual outputs.

For example, in the lecture notes, the inputs [math]\displaystyle{ x_1 = 0.5 , x_2 = 0.9, x_3 = -0.3 }[/math] are passed through a hidden layer with the following weights:

[math]\displaystyle{ H_1 \text{ weight} = (1.0, -2.0, 2.0) }[/math],

[math]\displaystyle{ H_2 \text{ weight} = (2.0, 1.0, -4.0) }[/math],

[math]\displaystyle{ H_3 \text{ weight} = (1.0, -1.0, 0.0) }[/math].

Applying the sigmoid function [math]\displaystyle{ S }[/math] to each weighted sum yields hidden neuron values of

[math]\displaystyle{ H_1 = S(0.5\times 1.0 + 0.9\times (-2.0) + (-0.3) \times 2.0) = S(-1.9) \approx 0.13 }[/math],

[math]\displaystyle{ H_2 = S(0.5\times 2.0 + 0.9\times 1.0 + (-0.3)\times (-4.0)) = S(3.1) \approx 0.96 }[/math],

[math]\displaystyle{ H_3 = S(0.5\times 1.0 + 0.9\times (-1.0) + (-0.3)\times 0.0) = S(-0.4) \approx 0.40 }[/math],

which are then processed by the output layer to produce predictions.
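The hidden-layer values in this example can be reproduced in a few lines. The sketch assumes a sigmoid activation and no bias term; this is inferred from the quoted numbers, which match the sigmoid of each weighted sum, and is not stated explicitly in the example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

x = [0.5, 0.9, -0.3]
hidden_weights = [
    [1.0, -2.0, 2.0],   # weights into H1
    [2.0, 1.0, -4.0],   # weights into H2
    [1.0, -1.0, 0.0],   # weights into H3
]

# Weighted sums (pre-activations), then the assumed sigmoid activation.
pre = [sum(w * xi for w, xi in zip(row, x)) for row in hidden_weights]
hidden = [sigmoid(z) for z in pre]

print([round(z, 2) for z in pre])     # [-1.9, 3.1, -0.4]
print([round(h, 2) for h in hidden])  # [0.13, 0.96, 0.4]
```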

Exercise 1.10

Level: ** (Moderate)

Exercise Types: Novel

Question

Biological neurons in the human brain have the following characteristics:

1. A neuron fires an electrical signal only when its membrane potential exceeds a certain threshold. Otherwise, it remains inactive.

2. Neurons are connected to one another through dendrites (input) and axons (outputs), forming a highly interconnected network.

3. The intensity of the signal passed between neurons depends on the strength of the connection, which can change over time due to learning and adaptation.

Considering the above points, answer the following question:

Explain how these biological properties of neurons might inspire the design and functionality of nodes in artificial neural networks.

Solution

1. Threshold Behavior: The concept of a neuron firing only when its membrane potential exceeds a threshold is mirrored in neural networks through activation functions. These functions decide whether a node "fires" by producing a significant output.

2. Connectivity: The connections between biological neurons via dendrites and axons inspire the weighted connections in artificial neural networks. Each node receives inputs, processes them, and sends weighted outputs to subsequent nodes, similar to how signals propagate in the brain.

3. Learning and Adaptation: Biological neurons strengthen or weaken their connections based on experience (neuroplasticity). This is similar to how artificial networks adjust weights during training using backpropagation and optimization algorithms. The dynamic modification of weights allows artificial networks to learn from data.

Exercise 1.11

Level: * (Easy)

Exercise Type: Novel

Question

If the pre-activation is 20, what are the outputs of the following activation functions: ReLU, Leaky ReLU, logistic (sigmoid), and hyperbolic tangent (tanh)?

Choose the correct answer:

a) 20, 20, 1, 1

b) 20, 0, 1, 1

c) 20, -20, 1, 1

d) 20, 20, -1, 1

e) 20, -20, 1, -1

Solution

The correct answer is a): 20, 20, 1, 1.

Calculation

[math]\displaystyle{ \text{ReLU}(20) = \max(0, 20) = 20 }[/math]

[math]\displaystyle{ \text{LeakyReLU}(20) = \begin{cases} 20 & \text{if } 20 \geq 0 \\ \alpha \cdot 20 & \text{if } 20 \lt 0 \end{cases} = 20 }[/math] where [math]\displaystyle{ \alpha }[/math] is a small constant (typically [math]\displaystyle{ 0.01 }[/math]).

[math]\displaystyle{ \sigma(20) = \frac{1}{1 + e^{-20}} \approx 1 }[/math]

[math]\displaystyle{ \tanh(20) = \frac{e^{20} - e^{-20}}{e^{20} + e^{-20}} \approx 1 }[/math]
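A short sketch of the four activations, evaluated at 20 and, for contrast, Leaky ReLU at -20. The function names and the default `alpha = 0.01` follow the typical value mentioned above and are otherwise illustrative.

```python
import math

def relu(z):
    return max(0.0, z)

def leaky_relu(z, alpha=0.01):
    # Identity for non-negative inputs, small slope alpha for negative inputs.
    return z if z >= 0 else alpha * z

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

z = 20.0
print(relu(z), leaky_relu(z), sigmoid(z), math.tanh(z))
print(leaky_relu(-20.0))  # the two ReLU variants differ only for negative inputs
```

For a positive pre-activation, ReLU and Leaky ReLU agree, and both saturating functions are within rounding distance of 1.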

Exercise 1.12

Level: * (Easy)

Exercise Type: Novel

Question

Imagine a simple feedforward neural network with a single hidden layer. The network structure is as follows:

- All neurons use a linear activation function.

- The input layer has 2 neurons.

- The hidden layer has 2 neurons.

- The output layer has 1 neuron.

- There are no biases in the network.

If the weights from the input layer to the hidden layer are given by: [math]\displaystyle{ W^{(1)} = \begin{bmatrix} 0.5 & -0.6 \\ 0.1 & 0.8 \end{bmatrix} }[/math] and the weights from the hidden layer to the output layer are given by: [math]\displaystyle{ W^{(2)} = \begin{bmatrix} 0.3 \\ -0.2 \end{bmatrix} }[/math]

Calculate the output of the network for the input vector [math]\displaystyle{ \mathbf{x} = \begin{bmatrix} 1 \\ 0 \end{bmatrix} }[/math] using a linear activation function for all neurons.

Hint

- The output of each layer is calculated by multiplying the input of that layer by the layer's weight matrix.

- Use matrix multiplication to compute the outputs step-by-step.

Solution

**Step 1: Calculate Hidden Layer Output**

The input to the hidden layer is the initial input [math]\displaystyle{ \mathbf{x} }[/math]: [math]\displaystyle{ h^{(1)} = W^{(1)} \times \mathbf{x} = \begin{bmatrix} 0.5 & -0.6 \\ 0.1 & 0.8 \end{bmatrix} \begin{bmatrix} 1 \\ 0 \end{bmatrix} = \begin{bmatrix} 0.5 \\ 0.1 \end{bmatrix} }[/math]

**Step 2: Calculate Output Layer Output**

The input to the output layer is the output from the hidden layer. Since [math]\displaystyle{ W^{(2)} }[/math] is given as a column vector, it is transposed so that the product is a scalar: [math]\displaystyle{ y = (W^{(2)})^T h^{(1)} = \begin{bmatrix} 0.3 & -0.2 \end{bmatrix} \begin{bmatrix} 0.5 \\ 0.1 \end{bmatrix} = 0.3 \times 0.5 + (-0.2) \times 0.1 = 0.15 - 0.02 = 0.13 }[/math]

Thus, the output of the network for the input vector [math]\displaystyle{ \mathbf{x} = \begin{bmatrix} 1 \\ 0 \end{bmatrix} }[/math] is [math]\displaystyle{ 0.13 }[/math].
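The forward pass can be sketched in plain Python using the weights and input from the question. The `collapsed` vector at the end is an added illustration: with linear activations and no biases, the two layers are equivalent to a single linear map.

```python
W1 = [[0.5, -0.6],
      [0.1, 0.8]]   # input -> hidden weights
W2 = [0.3, -0.2]    # hidden -> output weights
x = [1.0, 0.0]

# Step 1: hidden layer output h = W1 x (linear activation, no bias)
h = [sum(w * xi for w, xi in zip(row, x)) for row in W1]

# Step 2: output y = W2^T h
y = sum(w * hi for w, hi in zip(W2, h))
print(h, round(y, 2))

# Equivalent single linear map: W2^T W1
collapsed = [sum(W2[k] * W1[k][j] for k in range(2)) for j in range(2)]
y_collapsed = sum(c * xi for c, xi in zip(collapsed, x))
print([round(c, 2) for c in collapsed], round(y_collapsed, 2))
```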

Exercise 1.13

Level: * (Easy)

Exercise Types: Novel

Question

For each scenario below, explain whether it is a classification, regression, or clustering task. If the task is either classification or regression, also comment on whether the focus is prediction or explanation.

1. **Stock Market Trends:**

A financial analyst wants to predict the future stock prices of a company based on historical trends, economic indicators, and company performance metrics.

2. **Customer Segmentation:**

A retail company wants to group its customers based on their purchasing behaviour, including transaction frequency, product categories, and total spending, to design targeted marketing campaigns.

3. **Medical Diagnosis:**

A hospital wants to develop a model to determine whether a patient has a specific disease based on symptoms, medical history, and lab test results.

4. **Predicting Car Fuel Efficiency:**

An automotive researcher wants to understand how engine size, weight, and aerodynamics affect a car's fuel efficiency (miles per gallon).

Solution

**1. Stock Market Trends**

- **Task Type:** Regression

- **Focus:** Prediction

- **Reasoning:** Stock prices are continuous numerical values, making this a regression task. The goal is to predict future prices rather than explain past fluctuations.

**2. Customer Segmentation**

- **Task Type:** Clustering

- **Focus:** N/A

- **Reasoning:** Customers are grouped based on their purchasing behaviour without predefined labels, making this a clustering task.

**3. Medical Diagnosis**

- **Task Type:** Classification

- **Focus:** Prediction

- **Reasoning:** The disease status is a categorical outcome (Has disease: Yes/No), making this a classification problem. The goal is to predict a diagnosis for future patients.

**4. Predicting Car Fuel Efficiency**

- **Task Type:** Regression

- **Focus:** Explanation

- **Reasoning:** Fuel efficiency (miles per gallon) is a continuous variable. The researcher is interested in understanding how different factors influence efficiency, so the focus is on explanation.

Summary

| Task | Type | Focus | Reasoning |
|---|---|---|---|
| Stock Market Trends | Regression | Prediction | Predict future stock prices (continuous variable). |
| Customer Segmentation | Clustering | N/A | Group customers based on purchasing behaviour. |
| Medical Diagnosis | Classification | Prediction | Determine if a patient has a disease (Yes/No). |
| Predicting Car Fuel Efficiency | Regression | Explanation | Understand how factors affect fuel efficiency. |

Exercise 1.14

Level: * (Easy)

Exercise Types: Novel

Question

You are given a set of real-world scenarios. Your task is to identify the most suitable fundamental machine learning approach for each scenario and justify your choice.

**Scenarios:**

1. **Loan Default Prediction:**

A bank wants to predict whether a loan applicant will default on their loan based on their credit history, income, and employment status.

2. **House Price Estimation:**

A real estate company wants to estimate the price of a house based on features such as location, size, and number of bedrooms.

3. **User Grouping for Advertising:**

A social media platform wants to group users with similar interests and online behavior for targeted advertising.

4. **Dimensionality Reduction in Medical Data:**

A medical researcher wants to reduce the number of variables in a dataset containing hundreds of patient health indicators while retaining the most important information.

**Tasks:**

- For each scenario, classify the problem into one of the four fundamental categories: Classification, Regression, Clustering, or Dimensionality Reduction.

- Explain why you selected that category for each scenario.

- Suggest a possible algorithm that could be used to solve each problem.

Solution

**1. Loan Default Prediction**

- **Task Type:** Classification

- **Reasoning:** The target variable (loan default) is categorical (Yes/No), making this a classification problem. The goal is to predict whether an applicant will default based on their financial history.

- **Possible Algorithm:** Logistic Regression, Random Forest, or Gradient Boosting.

**2. House Price Estimation**

- **Task Type:** Regression

- **Reasoning:** House prices are continuous numerical values, making this a regression task. The goal is to estimate a house's price based on features like location and size.

- **Possible Algorithm:** Linear Regression, Decision Trees, or XGBoost.

**3. User Grouping for Advertising**

- **Task Type:** Clustering

- **Reasoning:** The goal is to group users based on their behavior without predefined labels, making this a clustering task.

- **Possible Algorithm:** K-Means, DBSCAN, or Hierarchical Clustering.

**4. Dimensionality Reduction in Medical Data**

- **Task Type:** Dimensionality Reduction

- **Reasoning:** The goal is to reduce the number of variables while preserving essential information, making this a dimensionality reduction task.

- **Possible Algorithm:** Principal Component Analysis (PCA), t-SNE, or Autoencoders.

Exercise 1.15

Level: * (Easy)

Exercise Types: Novel

Question

Define what machine learning is and how it is different from classical statistics. Provide the three learning methods used in machine learning, briefly define each and give an example of where each of them can be used. Include some common algorithms for each of the learning methods.

Solution

**Machine Learning Definition**

- Machine learning is the ability of a computer to learn a task without being explicitly programmed for it.

- Examples are used to train computers to perform tasks that would be difficult to program by hand.

One difference between classical statistics and machine learning is the size of the data from which they infer information. Classical statistics typically works with small datasets (too little data), while machine learning typically works with large datasets (too much data).

Supervised Learning

Supervised learning is a type of machine learning where the model is trained on a labeled dataset, meaning each training example has input features and a corresponding correct output. The algorithm learns the relationship between inputs and outputs to make predictions on new, unseen data.

Examples: Predicting house prices based on location, size, and other features (Regression). Identifying whether an email is spam or not (Classification).

Common Algorithms: Linear Regression, Logistic Regression, Decision Trees, Random Forest, Support Vector Machines (SVM), Neural Networks.

Unsupervised Learning

Unsupervised learning involves training a model on data without labeled outputs. The algorithm attempts to discover patterns, structures, or relationships within the data.

Examples: Grouping customers with similar purchasing behaviors for targeted marketing (Clustering). Identifying important features in a high-dimensional dataset (Dimensionality Reduction).

Common Algorithms: K-Means, Hierarchical Clustering, DBSCAN (Clustering). Principal Component Analysis (PCA), t-SNE, Autoencoders (Dimensionality Reduction).

Reinforcement Learning

Reinforcement learning (RL) is a type of machine learning where an agent learns to make decisions by performing actions in an environment to maximize cumulative rewards. The agent interacts with the environment, receives feedback in the form of rewards or penalties, and improves its strategy over time.

Examples: Training a robot to walk by rewarding successful movements. Teaching an AI to play chess or video games by rewarding wins and penalizing losses.

Common Algorithms: Q-Learning, Deep Q Networks (DQN), Policy Gradient Methods, Proximal Policy Optimization (PPO).

Summary

| Aspect | Supervised Learning | Unsupervised Learning | Reinforcement Learning |
|---|---|---|---|
| Definition | Learning from labeled data where inputs are paired with outputs. | Learning patterns or structures from unlabeled data. | Learning by interacting with an environment to maximize cumulative rewards. |
| Key Characteristics | Trains on known inputs and outputs to predict outcomes for unseen data. | No predefined labels; discovers hidden structures in the data. | Agent learns through trial and error by receiving rewards or penalties for its actions. |
| Examples | Predicting house prices (Regression); classifying emails as spam or not (Classification). | Grouping customers by behavior (Clustering); reducing variables in large datasets (Dimensionality Reduction). | Training robots to walk; teaching AI to play chess or video games. |
| Common Algorithms | Linear Regression, Logistic Regression, Decision Trees, Random Forest, SVM, Neural Networks | K-Means, Hierarchical Clustering, PCA, t-SNE, Autoencoders | Q-Learning, Deep Q Networks (DQN), Policy Gradient Methods, Proximal Policy Optimization (PPO) |

Exercise 1.16

Level: * (Easy)

Exercise Types: Novel

Question

Categorize each of these machine learning scenarios into supervised learning, unsupervised learning, or reinforcement learning. Justify your reasoning for each case.

(a) A neural network is trained to classify handwritten digits using the MNIST dataset, which contains 60 000 images of handwritten digits, along with the correct answer for each image.

(b) A robot is programmed to learn how to play a video game. It does not have access to the game’s rules, but it can observe its current score after each action. Over time, it learns to play better by maximizing its score.

(c) A deep learning model is designed to segment medical images into different sections corresponding to specific organs. The training data consists of medical scans that have been annotated by experts to mark the boundaries of the organs.

(d) A machine learning model is given 100 000 astronomical images of unknown stars and galaxies. Using dimensionality reduction techniques, it groups similar-looking objects based on their features, such as size and shape.

Solution

(a) Supervised learning: The model is trained with labeled data, where each image has a corresponding digit label.

(b) Reinforcement learning: The model learns by interacting with an environment and receiving feedback in the form of rewards or penalties. It explores different actions to maximize cumulative rewards over time.

(c) Supervised learning: The model uses labeled data where professionals annotated each region of the image.

(d) Unsupervised learning: The model works with unlabeled data to find patterns and group similar objects.

Exercise 1.17

Level: * (Easy)

Exercise Types: Novel

Question

How does the introduction of ReLU as an activation function address the vanishing gradient problem observed in early deep learning models using sigmoid or tanh functions?

Solution

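The vanishing gradient problem occurs because activation functions like sigmoid and tanh saturate: they compress their inputs into a small output range, so their derivatives are close to zero for inputs of large magnitude (the derivative of the sigmoid never exceeds 0.25). During backpropagation these small derivatives are multiplied layer by layer, so the gradients reaching the early layers become very small. This hinders learning, particularly in deeper networks.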

The ReLU (Rectified Linear Unit), defined as [math]\displaystyle{ f(x) = \max(0, x) }[/math], addresses this issue effectively:

(a) Non-Saturating Gradients: For positive input values, ReLU's gradient remains constant (equal to 1), preventing gradients from vanishing.

(b) Efficient Computation: The simplicity of the ReLU function makes it computationally faster than the sigmoid or tanh functions, which involve more complex exponential calculations.

(c) Sparse Activations: ReLU outputs zero for negative inputs, leading to sparse activations, which can improve computational efficiency and reduce overfitting.

However, ReLU can experience the "dying ReLU" problem, where neurons output zero for all inputs and effectively become inactive. Variants like Leaky ReLU and Parametric ReLU address this by allowing small, non-zero gradients for negative inputs, ensuring neurons remain active.
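The effect described above can be illustrated numerically. The following is a minimal sketch, not a full network: it ignores the weight matrices and only multiplies the activation derivatives along a chain of layers. The depth of 20 and the pre-activation value of 0.5 are arbitrary assumptions chosen for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_grad(z):
    # Derivative of the sigmoid; its maximum value is 0.25 (at z = 0)
    s = sigmoid(z)
    return s * (1.0 - s)

def relu_grad(z):
    # Derivative of ReLU: 1 for positive inputs, 0 otherwise
    return (z > 0).astype(float)

depth = 20                 # assumed number of layers
z = np.full(depth, 0.5)    # assumed (positive) pre-activation at every layer

# Backpropagation multiplies one activation derivative per layer
sigmoid_factor = np.prod(sigmoid_grad(z))
relu_factor = np.prod(relu_grad(z))

print("product of sigmoid derivatives:", sigmoid_factor)
print("product of ReLU derivatives:   ", relu_factor)
```

With sigmoid, the product shrinks geometrically with depth, while for positive pre-activations the ReLU product stays at 1.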

Exercise 2.1

Level: * (Easy)

Exercise Types: Novel

References: Calin, Ovidiu. Deep learning architectures: A mathematical approach. Springer, 2020

This problem is coincidentally similar to Exercise 5.10.1 (page 163) in this textbook, although that exercise was not used as the basis for this question.

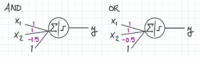

Question

This problem is about using perceptrons to implement logic functions. Assume a dataset of the form [math]\displaystyle{ x_1, x_2 \in \{0, 1\} }[/math], and a perceptron defined as: [math]\displaystyle{ y = H(\beta_0 + \beta_1 x_1 + \beta_2 x_2), }[/math] where [math]\displaystyle{ H }[/math] is the Heaviside step function, defined as: [math]\displaystyle{ H(z) = \begin{cases} 1, & \text{if } z \geq 0, \\ 0, & \text{if } z \lt 0. \end{cases} }[/math]

(a)* Find weights [math]\displaystyle{ \beta_1, \beta_2 }[/math] and bias [math]\displaystyle{ \beta_0 }[/math] for a single perceptron that implements the AND function.

(b)* Find the weights [math]\displaystyle{ \beta_1, \beta_2 }[/math] and bias [math]\displaystyle{ \beta_0 }[/math] for a single perceptron that implements the OR function.

(c)** Given the truth table for the XOR function:

[math]\displaystyle{ \begin{array}{|c|c|c|} \hline x_1 & x_2 & x_1 \oplus x_2 \\ \hline 0 & 0 & 0 \\ 0 & 1 & 1 \\ 1 & 0 & 1 \\ 1 & 1 & 0 \\ \hline \end{array} }[/math]

Show that it cannot be learned by a single perceptron. Find a small neural network of multiple perceptrons that can implement the XOR function. (Hint: a hidden layer with 2 perceptrons).

Solution

(a) A perceptron that implements the AND function:

[math]\displaystyle{ y = H(-1.5 + x_1 + x_2). }[/math]

Here:

[math]\displaystyle{ \beta_0 = -1.5, \quad \beta_1 = 1, \quad \beta_2 = 1. }[/math] This works because the AND function returns 1 only when both inputs are 1. For a perceptron, the condition for activation is: [math]\displaystyle{ \beta_0 + \beta_1 x_1 + \beta_2 x_2 \geq 0. }[/math] This must hold for (1, 1) but fail for all other combinations. With [math]\displaystyle{ \beta_0 = -1.5 }[/math] and [math]\displaystyle{ \beta_1 = \beta_2 = 1 }[/math], the input (0, 0) gives -1.5, the inputs (0, 1) and (1, 0) give -0.5, and (1, 1) gives 0.5, so only (1, 1) activates the perceptron.

(b) A perceptron that implements the OR function:

[math]\displaystyle{ y = H(-0.5 + x_1 + x_2). }[/math]

Here:

[math]\displaystyle{ \beta_0 = -0.5, \quad \beta_1 = 1, \quad \beta_2 = 1. }[/math] This works because the OR function returns 1 if either or both inputs are 1. Using similar logic to the AND case, the input (0, 0) gives -0.5, the inputs (0, 1) and (1, 0) give 0.5, and (1, 1) gives 1.5, so every input except (0, 0) activates the perceptron.

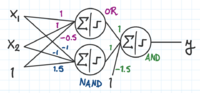

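(c) XOR is not linearly separable, so it cannot be implemented by a single perceptron. To see this, suppose some [math]\displaystyle{ \beta_0, \beta_1, \beta_2 }[/math] reproduced the truth table. The four rows would require [math]\displaystyle{ \beta_0 \lt 0 }[/math], [math]\displaystyle{ \beta_0 + \beta_2 \geq 0 }[/math], [math]\displaystyle{ \beta_0 + \beta_1 \geq 0 }[/math], and [math]\displaystyle{ \beta_0 + \beta_1 + \beta_2 \lt 0 }[/math]. Adding the two middle inequalities gives [math]\displaystyle{ 2\beta_0 + \beta_1 + \beta_2 \geq 0 }[/math], so [math]\displaystyle{ \beta_0 + \beta_1 + \beta_2 \geq -\beta_0 \gt 0 }[/math], which contradicts the last requirement.

The XOR function returns 1 when the following are true:

- At least one of [math]\displaystyle{ x_1 }[/math] and [math]\displaystyle{ x_2 }[/math] is 1. In other words, the expression [math]\displaystyle{ x_1 }[/math] OR [math]\displaystyle{ x_2 }[/math] returns 1.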

- [math]\displaystyle{ x_1 }[/math] and [math]\displaystyle{ x_2 }[/math] are not both 1. In other words, the expression [math]\displaystyle{ x_1 }[/math] NAND [math]\displaystyle{ x_2 }[/math] returns 1.

To implement this, the outputs of an OR and a NAND perceptron can be taken as inputs to an AND perceptron. (The NAND perceptron was derived by multiplying the weights and bias of the AND perceptron by -1.)
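The construction above can be checked with a short sketch. The function names `heaviside`, `perceptron`, and `xor_network` are hypothetical helpers introduced here for illustration; the weights are the ones given in parts (a) and (b), with the NAND weights obtained by negating the AND weights.

```python
def heaviside(z):
    # H(z) = 1 if z >= 0, else 0, as defined in the question
    return 1 if z >= 0 else 0

def perceptron(b0, b1, b2, x1, x2):
    return heaviside(b0 + b1 * x1 + b2 * x2)

def xor_network(x1, x2):
    # Hidden layer: one OR perceptron and one NAND perceptron
    h_or = perceptron(-0.5, 1, 1, x1, x2)
    h_nand = perceptron(1.5, -1, -1, x1, x2)
    # Output layer: AND of the two hidden outputs
    return perceptron(-1.5, 1, 1, h_or, h_nand)

truth_table = {(x1, x2): xor_network(x1, x2) for x1 in (0, 1) for x2 in (0, 1)}
for inputs, output in truth_table.items():
    print(inputs, "->", output)
```

The printed table matches the XOR truth table given in the question.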

Why can't the perceptron converge in the case of linear non-separability?

In linearly separable data, there exists a weight vector [math]\displaystyle{ w }[/math] and bias [math]\displaystyle{ b }[/math] such that:

[math]\displaystyle{ y_i(w \cdot x_i + b) \gt 0 \quad \forall i }[/math]

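But in the case of linear non-separability, no [math]\displaystyle{ w }[/math] and [math]\displaystyle{ b }[/math] satisfying this condition exist. Every choice of weights therefore misclassifies at least one point, so each pass over the data triggers at least one more update and the perceptron never reaches the state with zero misclassifications at which it would stop.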

Exercise 2.2

Level: * (Easy)

Exercise Types: Novel

Question

1. How do feedforward neural networks utilize backpropagation to adjust weights and improve the accuracy of predictions during training?

2. How would the training process be affected if the learning rate in the optimization algorithm were too high or too low?

Solution

1. After a forward pass where inputs are processed to generate an output, the error between the prediction and actual values is calculated. This error is then propagated backward through the network, and the gradients of the loss function with respect to the weights are computed. Using these gradients, the weights are updated with an optimization algorithm like stochastic gradient descent, gradually minimizing the error and improving the network's performance.

2. If the learning rate is too high, the weights might overshoot the optimal values, leading to oscillations or divergence. If it is too low, the training process might become very slow and may get stuck in a local minimum.

Calculations

Step 1: Forward Propagation

Each neuron computes:

[math]\displaystyle{ z = W \cdot x + b }[/math]

[math]\displaystyle{ a = f(z) }[/math]

where:

- W = weights, b = bias

- f(z) = activation function (e.g., sigmoid, ReLU)

- a = neuron’s output

Step 2: Compute Loss

The error between predicted [math]\displaystyle{ \hat{y} }[/math] and actual [math]\displaystyle{ y }[/math] is calculated using a loss function, such as **Mean Squared Error (MSE)**:

[math]\displaystyle{ L = \frac{1}{n} \sum (y - \hat{y})^2 }[/math]

For classification, **Cross-Entropy Loss** is commonly used.

Step 3: Backward Propagation

Using the **chain rule**, gradients are computed:

[math]\displaystyle{ \frac{\partial L}{\partial W} = \frac{\partial L}{\partial a} \cdot \frac{\partial a}{\partial z} \cdot \frac{\partial z}{\partial W} }[/math]

These gradients guide weight updates to minimize loss.

Step 4: Weight Update using Gradient Descent

Weights are updated using:

[math]\displaystyle{ W = W - \alpha \frac{\partial L}{\partial W} }[/math]

where [math]\displaystyle{ \alpha }[/math] is the **learning rate**.
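The four steps above can be sketched for a single sigmoid neuron and a single training sample. The input, target, initial weights, and learning rate below are made-up values for illustration, and the loss is the squared error for one sample (the MSE formula with n = 1).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Assumed toy values, not taken from the exercise
x = np.array([0.5, -1.0])   # input
y = 1.0                     # target
W = np.array([0.1, 0.2])    # weights
b = 0.0                     # bias
alpha = 0.5                 # learning rate

# Step 1: forward propagation
z = W @ x + b
a = sigmoid(z)

# Step 2: compute loss (squared error for one sample)
loss_before = (y - a) ** 2

# Step 3: backward propagation via the chain rule
dL_da = -2.0 * (y - a)
da_dz = a * (1.0 - a)       # derivative of the sigmoid
grad_W = dL_da * da_dz * x  # dz/dW = x
grad_b = dL_da * da_dz      # dz/db = 1

# Step 4: gradient descent update
W_new = W - alpha * grad_W
b_new = b - alpha * grad_b

loss_after = (y - sigmoid(W_new @ x + b_new)) ** 2
print("loss before:", loss_before, "loss after:", loss_after)
```

For these values, one update reduces the loss, as expected for a sufficiently small step along the negative gradient.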

Exercise 2.3

Level: * (Easy)

Exercise Types: Modified

References: Simon J.D. Prince. Understanding Deep Learning. 2024

This problem comes from Problem 3.5 in this textbook. In addition to the proof, I explained why this property is important to learning neural networks.

Question

Prove that the following property holds for [math]\displaystyle{ \alpha \in \mathbb{R}^+ }[/math]:

[math]\displaystyle{ \text{ReLU}[\alpha \cdot z] = \alpha \cdot \text{ReLU}[z] }[/math]

Explain why this property is important in neural networks.

Solution

This is known as the non-negative homogeneity property of the ReLU function.

Recall the definition of the ReLU function:

[math]\displaystyle{ \text{ReLU}(z) = \begin{cases} z & \text{if } z \geq 0, \\ 0 & \text{if } z \lt 0. \end{cases} }[/math]

We prove the property by considering the two possible cases for [math]\displaystyle{ z }[/math].

Case 1: [math]\displaystyle{ z \geq 0 }[/math]

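If [math]\displaystyle{ z \geq 0 }[/math], then [math]\displaystyle{ \alpha \cdot z \geq 0 }[/math] as well, because [math]\displaystyle{ \alpha \gt 0 }[/math]. By the definition of the ReLU function: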

[math]\displaystyle{ \text{ReLU}(z) = z }[/math]

Therefore:

[math]\displaystyle{ \text{ReLU}(\alpha \cdot z) = \alpha \cdot z }[/math]

and:

[math]\displaystyle{ \alpha \cdot \text{ReLU}(z) = \alpha \cdot z }[/math]

Hence, in this case:

[math]\displaystyle{ \text{ReLU}(\alpha \cdot z) = \alpha \cdot \text{ReLU}(z) }[/math]

Case 2: [math]\displaystyle{ z\lt 0 }[/math]

If [math]\displaystyle{ z \lt 0 }[/math], then [math]\displaystyle{ \alpha \cdot z \lt 0 }[/math], because [math]\displaystyle{ \alpha \gt 0 }[/math].

Therefore:

[math]\displaystyle{ \text{ReLU}(\alpha \cdot z) = \text{ReLU}(z) = 0 }[/math]

and:

[math]\displaystyle{ \alpha \cdot \text{ReLU}(z) = \alpha \cdot 0 = 0 }[/math]

Hence, in this case:

[math]\displaystyle{ \text{ReLU}(\alpha \cdot z) = \alpha \cdot \text{ReLU}(z) }[/math]

Since the property holds in both cases, this completes the proof.
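As a quick numerical sanity check of the proof, the sketch below compares both sides of the identity on random inputs. The sample values of [math]\displaystyle{ \alpha }[/math] are arbitrary assumptions. It also shows that the identity fails for a negative scale factor, which is why the property requires [math]\displaystyle{ \alpha \gt 0 }[/math].

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

rng = np.random.default_rng(0)
z = rng.normal(size=1000)          # random inputs of both signs
alphas = [0.1, 1.0, 3.5, 100.0]    # assumed positive scale factors

# ReLU(alpha * z) == alpha * ReLU(z) for every positive alpha
holds_for_positive = all(np.allclose(relu(a * z), a * relu(z)) for a in alphas)

# For a negative factor the two sides differ wherever z != 0
fails_for_negative = not np.allclose(relu(-2.0 * z), -2.0 * relu(z))

print("holds for positive alpha:", holds_for_positive)
print("fails for negative alpha:", fails_for_negative)
```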

Why is this property important in neural networks?

In a neural network, the input to a neuron is often a linear combination of the weights and inputs.

When training neural networks, scaling the inputs or weights affects the activations of neurons. Because ReLU satisfies the homogeneity property, its output scales proportionally with its input: scaling the pre-activation by a positive constant (such as a normalization factor) does not change which neurons are active, only the magnitude of their outputs. This makes the effect of rescaling predictable, so scaling transformations do not break the network's functionality.

Additionally, the derivative of ReLU (1 for positive inputs, 0 for negative inputs) is unchanged when its input is multiplied by a positive constant. The scale of the gradients therefore changes proportionally with the input scale instead of being distorted by saturation, which helps keep the optimization process predictable.

The homogeneity property of ReLU also helps the network to perform well on different types of data. By keeping the scaling of activations consistent, it helps maintain the connection between inputs and outputs during training, even when the data is adjusted or scaled. This makes ReLU useful when input values vary a lot, and it simplifies the network's response to changes in input distributions, which is especially valuable when transferring a trained model to new data or domains.

Exercise 2.4

Level: * (Easy)

Exercise Types: Novel

Question

Train a perceptron on the given dataset using the following initial settings, and ensure it classifies all data points correctly.

- Initial weights: [math]\displaystyle{ w_0 = 0, w_1 = 0, w_2 = 0 }[/math]

- Learning rate: [math]\displaystyle{ \eta = 0.1 }[/math]

- Training dataset:

(x₁ = 1, x₂ = 2, y = 1) (x₁ = -1, x₂ = -1, y = -1) (x₁ = 2, x₂ = 1, y = 1)

[math]\displaystyle{ y = 1 }[/math] if the output [math]\displaystyle{ z = w_1 \cdot x_1 + w_2 \cdot x_2 + w_0 \geq 0 }[/math], otherwise [math]\displaystyle{ y = -1 }[/math].

Solution

Iteration 1

1. First data point (x₁ = 1, x₂ = 2) with label 1:

- Weighted sum: [math]\displaystyle{ z = w_0 + w_1 x_1 + w_2 x_2 = 0 + 0(1) + 0(2) = 0 }[/math]

- Predicted label: [math]\displaystyle{ \hat{y} = 1 }[/math]

- Actual label: 1 → No misclassification

2. Second data point (x₁ = -1, x₂ = -1) with label -1:

- Weighted sum: [math]\displaystyle{ z = w_0 + w_1 x_1 + w_2 x_2 = 0 + 0(-1) + 0(-1) = 0 }[/math]

- Predicted label: [math]\displaystyle{ \hat{y} = 1 }[/math]

- Actual label: -1 → Misclassified

3. Third data point (x₁ = 2, x₂ = 1) with label 1:

- Weighted sum: [math]\displaystyle{ z = w_0 + w_1 x_1 + w_2 x_2 = 0 + 0(2) + 0(1) = 0 }[/math]

- Predicted label: [math]\displaystyle{ \hat{y} = 1 }[/math]

- Actual label: 1 → No misclassification

Update Weights (using the Perceptron rule with the cost as the distance of all misclassified points)

For the misclassified point (x₁ = -1, x₂ = -1):

- Updated weights:

- [math]\displaystyle{ w_0 = w_0 + \eta y = 0 + 0.1(-1) = -0.1 }[/math]

- [math]\displaystyle{ w_1 = w_1 + \eta y x_1 = 0 + 0.1(-1)(-1) = 0.1 }[/math]

- [math]\displaystyle{ w_2 = w_2 + \eta y x_2 = 0 + 0.1(-1)(-1) = 0.1 }[/math]

Updated weights after first iteration: [math]\displaystyle{ w_0 = -0.1, w_1 = 0.1, w_2 = 0.1 }[/math]

Iteration 2

1. First data point (x₁ = 1, x₂ = 2) with label 1:

- Weighted sum: [math]\displaystyle{ z = -0.1 + 0.1(1) + 0.1(2) = -0.1 + 0.1 + 0.2 = 0.2 }[/math]

- Predicted label: [math]\displaystyle{ \hat{y} = 1 }[/math]

- Actual label: 1 → No misclassification

2. Second data point (x₁ = -1, x₂ = -1) with label -1:

- Weighted sum: [math]\displaystyle{ z = -0.1 + 0.1(-1) + 0.1(-1) = -0.1 - 0.1 - 0.1 = -0.3 }[/math]

- Predicted label: [math]\displaystyle{ \hat{y} = -1 }[/math]

- Actual label: -1 → No misclassification

3. Third data point (x₁ = 2, x₂ = 1) with label 1:

- Weighted sum: [math]\displaystyle{ z = -0.1 + 0.1(2) + 0.1(1) = -0.1 + 0.2 + 0.1 = 0.2 }[/math]

- Predicted label: [math]\displaystyle{ \hat{y} = 1 }[/math]

- Actual label: 1 → No misclassification

Since there are no misclassifications in the second iteration, the perceptron has converged!

Final Result

- Weights after convergence: [math]\displaystyle{ w_0 = -0.1, w_1 = 0.1, w_2 = 0.1 }[/math]

- Total cost after convergence: [math]\displaystyle{ Cost = 0 }[/math], since no misclassified points.
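The hand calculation can be reproduced with a short script. This is a minimal sketch that applies the update as soon as a point is misclassified (the usual online form of the perceptron rule); on this dataset it reaches the same weights as the calculation above. The cap of 100 epochs is an arbitrary safety limit, not part of the exercise.

```python
import numpy as np

X = np.array([[1.0, 2.0], [-1.0, -1.0], [2.0, 1.0]])
y = np.array([1, -1, 1])
w = np.zeros(3)   # [w0, w1, w2], all initialized to 0
eta = 0.1         # learning rate

def predict(w, x):
    z = w[0] + w[1] * x[0] + w[2] * x[1]
    return 1 if z >= 0 else -1

for epoch in range(1, 101):        # assumed upper bound on the number of passes
    mistakes = 0
    for x_i, y_i in zip(X, y):
        if predict(w, x_i) != y_i:
            w[0] += eta * y_i          # bias update
            w[1:] += eta * y_i * x_i   # weight update
            mistakes += 1
    if mistakes == 0:                  # a full pass with no errors: converged
        break

print("converged in pass", epoch, "with weights", w)
```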

Exercise 2.5

Level: * (Moderate)

Exercise Types: Novel

Question

Consider a Feed-Forward Neural Network (FFN) with one or more hidden layers. Answer the following questions:

(a) Describe how a Feed-Forward Neural Network (FFN) works in general. Describe the components of the network.

(b) How does the forward pass work? Provide the relevant formulas for each step.

(c) How does the backward pass (backpropagation) work? Explain and provide the formulas for each step.

Solution

(a): A Feed-Forward Neural Network (FFN) consists of an input layer, one or more hidden layers, and one output layer. Each neuron in a layer is a perceptron, a basic computational unit that contains weights, a bias, and an activation function. The perceptron computes a weighted sum of its inputs, adds a bias term, and passes the result through an activation function. Each layer transforms the data as it passes through, with each neuron's output being fed to the next layer as input. The final output is the network’s prediction; the loss function then uses the prediction and the true label of the data to calculate the loss. The backward pass computes the gradients of the loss with respect to each weight and bias in the network, and these gradients are used to update the weights and biases to minimize the loss. This process is repeated for each sample (or mini-batch) of data until the loss converges.

(b): The forward pass involves computing the output for each layer in the network. For each layer, the algorithm performs the following steps:

1. Compute the weighted sum of inputs to the layer:

[math]\displaystyle{ z^{(l)} = W^{(l)} a^{(l-1)} + b^{(l)} }[/math]

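2. Apply the activation function to calculate the output of the layer:

[math]\displaystyle{ a^{(l)} = \sigma(z^{(l)}) }[/math]

3. Repeat these steps for each layer [math]\displaystyle{ l = 1, \dots, L }[/math], starting from [math]\displaystyle{ a^{(0)} = x }[/math], until reaching the output layer, whose activation is the prediction: [math]\displaystyle{ \hat{y} = a^{(L)} = \sigma(z^{(L)}) }[/math]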

(c): The backward pass (backpropagation) computes the gradients of the loss function with respect to each weight and bias, and then uses gradient descent to update the weights and biases. Below, [math]\displaystyle{ \odot }[/math] denotes element-wise multiplication.

1. Calculate the error term for each layer:

Error at the output layer:

[math]\displaystyle{ \delta^{(L)} = \frac{\partial \mathcal{L}}{\partial a^{(L)}} \odot \sigma'(z^{(L)}) }[/math]

Error for the hidden layers, for [math]\displaystyle{ l = L-1, \dots, 1 }[/math]:

[math]\displaystyle{ \delta^{(l)} = \left( \left( W^{(l+1)} \right)^T \delta^{(l+1)} \right) \odot \sigma'(z^{(l)}) }[/math]

2. Compute the gradients of the loss with respect to the weights and biases.

The gradient for the weights, which has the same shape as [math]\displaystyle{ W^{(l)} }[/math] because [math]\displaystyle{ z^{(l)} = W^{(l)} a^{(l-1)} + b^{(l)} }[/math], is:

[math]\displaystyle{ \frac{\partial \mathcal{L}}{\partial W^{(l)}} = \delta^{(l)} \left( a^{(l-1)} \right)^T }[/math]

The gradient for the biases is:

[math]\displaystyle{ \frac{\partial \mathcal{L}}{\partial b^{(l)}} = \delta^{(l)} }[/math]

3. Update the weights and biases using gradient descent:

[math]\displaystyle{ W^{(l)} \leftarrow W^{(l)} - \rho \cdot \frac{\partial \mathcal{L}}{\partial W^{(l)}} }[/math]

[math]\displaystyle{ b^{(l)} \leftarrow b^{(l)} - \rho \cdot \frac{\partial \mathcal{L}}{\partial b^{(l)}} }[/math]

where [math]\displaystyle{ \rho }[/math] is the learning rate.

Repeat these steps for each layer, from the output layer back toward the input layer, to update all the weights and biases.
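The forward and backward passes can be sketched in NumPy and checked against a finite-difference estimate of one gradient entry. This is an illustrative sketch under several assumptions not stated in the exercise: a 2-3-1 architecture, sigmoid activations in every layer, the loss [math]\displaystyle{ \mathcal{L} = \tfrac{1}{2}\|a^{(L)} - y\|^2 }[/math], and made-up input and target values. The helper names are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(params, x):
    """Return the list of activations; activations[0] is the input."""
    activations = [x]
    for W, b in params:
        z = W @ activations[-1] + b
        activations.append(sigmoid(z))
    return activations

def loss(params, x, y):
    # Assumed loss: half the squared error
    return 0.5 * np.sum((forward(params, x)[-1] - y) ** 2)

def backward(params, x, y):
    """Return a list of (dL/dW, dL/db) pairs, one per layer."""
    acts = forward(params, x)
    grads = [None] * len(params)
    # Output error: dL/da = a - y, and sigma'(z) = a * (1 - a)
    delta = (acts[-1] - y) * acts[-1] * (1 - acts[-1])
    for l in reversed(range(len(params))):
        grads[l] = (np.outer(delta, acts[l]), delta)
        if l > 0:
            W, _ = params[l]
            # Propagate the error to the previous layer
            delta = (W.T @ delta) * acts[l] * (1 - acts[l])
    return grads

rng = np.random.default_rng(0)
sizes = [2, 3, 1]   # assumed layer sizes
params = [(rng.normal(size=(n_out, n_in)), rng.normal(size=n_out))
          for n_in, n_out in zip(sizes[:-1], sizes[1:])]
x, y = np.array([0.5, -1.0]), np.array([1.0])

grads = backward(params, x, y)

# Finite-difference check on one entry of the first weight matrix
eps = 1e-6
W0, b0 = params[0]
W_plus, W_minus = W0.copy(), W0.copy()
W_plus[0, 0] += eps
W_minus[0, 0] -= eps
numeric = (loss([(W_plus, b0)] + params[1:], x, y)
           - loss([(W_minus, b0)] + params[1:], x, y)) / (2 * eps)
analytic = grads[0][0][0, 0]
print("analytic:", analytic, "numeric:", numeric)

# One gradient descent step with an assumed learning rate rho
rho = 0.5
params = [(W - rho * gW, b - rho * gb) for (W, b), (gW, gb) in zip(params, grads)]
```

The analytic and numeric values should agree to several decimal places, which is a common way to test a backpropagation implementation.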

Exercise 2.6

Level: * (Easy)

Exercise Types: Novel

Question

A single neuron takes an input vector [math]\displaystyle{ x=[2,-3] }[/math], with weights [math]\displaystyle{ w=[0.4,-0.6] }[/math]. The target output is [math]\displaystyle{ y_{\text{true}}=1 }[/math].

1. Calculate the weighted sum [math]\displaystyle{ z = w \cdot x }[/math].

2. Compute the squared error loss: [math]\displaystyle{ L = 0.5 \cdot (z - y_{\text{true}})^2 }[/math]

3. Find the gradient of the loss with respect to the weights [math]\displaystyle{ w }[/math] and perform one step of gradient descent with a learning rate [math]\displaystyle{ \eta = 0.01 }[/math].

4. Provide the updated weights and the error after the update.

5. Compare the result of the previous step with the case of a learning rate of [math]\displaystyle{ \eta = 0.1 }[/math]

Solution

1. [math]\displaystyle{ z = w \cdot x = (0.4 \cdot 2) + (-0.6 \cdot -3) = 0.8 + 1.8 = 2.6 }[/math]

2. [math]\displaystyle{ L = 0.5 \cdot (z - y_{\text{true}})^2 = 0.5 \cdot (2.6 - 1)^2 = 0.5 \cdot (1.6)^2 = 0.5 \cdot 2.56 = 1.28 }[/math]

3. The gradient of the loss with respect to [math]\displaystyle{ w_i }[/math] is: [math]\displaystyle{ \frac{\partial L}{\partial w_i} = (z - y_{\text{true}}) \cdot x_i }[/math]

For [math]\displaystyle{ w_1 }[/math] (associated with [math]\displaystyle{ x_1 = 2 }[/math]): [math]\displaystyle{ \frac{\partial L}{\partial w_1} = (2.6 - 1) \cdot 2 = 1.6 \cdot 2 = 3.2 }[/math]

For [math]\displaystyle{ w_2 }[/math] (associated with [math]\displaystyle{ x_2 = -3 }[/math]): [math]\displaystyle{ \frac{\partial L}{\partial w_2} = (2.6 - 1) \cdot (-3) = 1.6 \cdot -3 = -4.8 }[/math] The updated weights are: [math]\displaystyle{ w_i = w_i - \eta \cdot \frac{\partial L}{\partial w_i} }[/math]

For [math]\displaystyle{ w_1 }[/math]: [math]\displaystyle{ w_1 = 0.4 - 0.01 \cdot 3.2 = 0.4 - 0.032 = 0.368 }[/math]

For [math]\displaystyle{ w_2 }[/math]: [math]\displaystyle{ w_2 = -0.6 - 0.01 \cdot (-4.8) = -0.6 + 0.048 = -0.552 }[/math]

4. Recalculate [math]\displaystyle{ z }[/math] with updated weights: [math]\displaystyle{ z = (0.368 \cdot 2) + (-0.552 \cdot -3) = 0.736 + 1.656 = 2.392 }[/math]

Recalculate the error: [math]\displaystyle{ L = 0.5 \cdot (z - y_{\text{true}})^2 = 0.5 \cdot (2.392 - 1)^2 = 0.5 \cdot (1.392)^2 = 0.5 \cdot 1.937 = 0.968 }[/math]

5. Compare the result of the previous step with the case of a learning rate of [math]\displaystyle{ \eta = 0.1 }[/math]:

For [math]\displaystyle{ w_1 }[/math]: [math]\displaystyle{ w_1 = 0.4 - 0.1 \cdot 3.2 = 0.4 - 0.32 = 0.08 }[/math]

For [math]\displaystyle{ w_2 }[/math]: [math]\displaystyle{ w_2 = -0.6 - 0.1 \cdot (-4.8) = -0.6 + 0.48 = -0.12 }[/math]

Recalculate [math]\displaystyle{ z }[/math] with the updated weights: [math]\displaystyle{ z = (0.08 \cdot 2) + (-0.12 \cdot -3) = 0.16 + 0.36 = 0.52 }[/math]

Recalculate the error: [math]\displaystyle{ L = 0.5 \cdot (z - y_{\text{true}})^2 = 0.5 \cdot (0.52 - 1)^2 = 0.5 \cdot (-0.48)^2 = 0.5 \cdot 0.2304 = 0.1152 }[/math]

Comparison:

- With a learning rate of [math]\displaystyle{ \eta = 0.01 }[/math], the error after one update is [math]\displaystyle{ L = 0.968 }[/math].

- With [math]\displaystyle{ \eta = 0.1 }[/math], the error after one update is [math]\displaystyle{ L = 0.1152 }[/math].

The error is much lower when using a larger learning rate [math]\displaystyle{ \eta = 0.1 }[/math] compared to a smaller learning rate [math]\displaystyle{ \eta = 0.01 }[/math]. However, large learning rates can sometimes cause overshooting of the optimal solution, so care must be taken when selecting a learning rate.
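The two updates can be recomputed with a few lines of NumPy. This is a minimal sketch; the helper names `loss` and `gd_step` are hypothetical.

```python
import numpy as np

x = np.array([2.0, -3.0])
w = np.array([0.4, -0.6])
y_true = 1.0

def loss(w):
    return 0.5 * (w @ x - y_true) ** 2

def gd_step(w, eta):
    grad = (w @ x - y_true) * x   # dL/dw_i = (z - y_true) * x_i
    return w - eta * grad

results = {}
for eta in (0.01, 0.1):
    w_new = gd_step(w, eta)
    results[eta] = (w_new, w_new @ x, loss(w_new))
    print(f"eta={eta}: w={w_new}, z={w_new @ x:.4f}, L={loss(w_new):.4f}")
```

For this single-sample problem, one step multiplies the residual [math]\displaystyle{ z - y_{\text{true}} }[/math] by [math]\displaystyle{ 1 - \eta \|x\|^2 = 1 - 13\eta }[/math]. With [math]\displaystyle{ \eta = 0.1 }[/math] the factor is -0.3, so the update already overshoots the target (z moves from 2.6 to 0.52, past 1), and any [math]\displaystyle{ \eta \gt 2/13 }[/math] would increase the error.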

Exercise 2.7

Level: * (Easy)

Exercise Types: Copied

This problem comes from Exercise 2 : Perceptron Learning.

Question

Given two single perceptrons [math]\displaystyle{ a }[/math] and [math]\displaystyle{ b }[/math], each of which is defined by the inequality [math]\displaystyle{ w_0 + w_1x_1 + w_2x_2 \geq 0 }[/math], perceptron [math]\displaystyle{ a }[/math] has the weights [math]\displaystyle{ w_0 = 1 }[/math], [math]\displaystyle{ w_1 = 2 }[/math], [math]\displaystyle{ w_2 = 1 }[/math], and perceptron [math]\displaystyle{ b }[/math] has the weights [math]\displaystyle{ w_0 = 0 }[/math], [math]\displaystyle{ w_1 = 2 }[/math], [math]\displaystyle{ w_2 = 1 }[/math]. Is perceptron [math]\displaystyle{ a }[/math] more general than perceptron [math]\displaystyle{ b }[/math]?

Solution

To determine whether perceptron [math]\displaystyle{ a }[/math] is more general than perceptron [math]\displaystyle{ b }[/math], we need to examine their decision boundaries and the regions they classify as "positive" (where [math]\displaystyle{ w_0 + w_1x_1 + w_2x_2 \geq 0 }[/math]).

The decision boundaries for both perceptrons are defined by: [math]\displaystyle{ w_0 + w_1x_1 + w_2x_2 = 0 }[/math].

For Perceptron [math]\displaystyle{ a }[/math]: [math]\displaystyle{ 1 + 2x_1 + x_2 \geq 0 }[/math]; the decision boundary is: [math]\displaystyle{ x_2 = -2x_1 - 1 }[/math].

For Perceptron [math]\displaystyle{ b }[/math]: [math]\displaystyle{ 2x_1 + x_2 \geq 0 }[/math]; the decision boundary is: [math]\displaystyle{ x_2 = -2x_1 }[/math].

First, we clarify what "more general" means. If perceptron [math]\displaystyle{ a }[/math] classifies every point classified positively by perceptron [math]\displaystyle{ b }[/math] as positive, then [math]\displaystyle{ a }[/math] is at least as general as [math]\displaystyle{ b }[/math]. If, in addition, [math]\displaystyle{ a }[/math] classifies points as positive that [math]\displaystyle{ b }[/math] does not, then [math]\displaystyle{ a }[/math] is strictly more general.

Perceptron [math]\displaystyle{ a }[/math]'s positive region includes all points that satisfy [math]\displaystyle{ x_2 \geq -2x_1 - 1 }[/math], and perceptron [math]\displaystyle{ b }[/math]'s positive region includes all points that satisfy [math]\displaystyle{ x_2 \geq -2x_1 }[/math]. Because [math]\displaystyle{ -2x_1 - 1 \lt -2x_1 }[/math] for all [math]\displaystyle{ x_1 }[/math], every point in [math]\displaystyle{ b }[/math]'s region also lies in [math]\displaystyle{ a }[/math]'s region, so [math]\displaystyle{ b }[/math]'s region is a strict subset of [math]\displaystyle{ a }[/math]'s region.

Therefore, perceptron [math]\displaystyle{ a }[/math] is more general than perceptron [math]\displaystyle{ b }[/math] because all points classified as positive by [math]\displaystyle{ b }[/math] are also classified as positive by [math]\displaystyle{ a }[/math], and [math]\displaystyle{ a }[/math] classifies additional points (where [math]\displaystyle{ -2x_1 - 1 \leq x_2 \lt -2x_1 }[/math]) as positive that [math]\displaystyle{ b }[/math] does not.
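The subset relation can also be checked empirically by sampling points. This sketch is only a sanity check, not a proof; the sampling box [-5, 5] × [-5, 5] and the number of samples are arbitrary assumptions, and `positive` is a hypothetical helper.

```python
import numpy as np

def positive(w, pts):
    # True where w0 + w1*x1 + w2*x2 >= 0
    return w[0] + w[1] * pts[:, 0] + w[2] * pts[:, 1] >= 0

rng = np.random.default_rng(0)
pts = rng.uniform(-5, 5, size=(10000, 2))   # assumed sampling region

pos_a = positive((1, 2, 1), pts)   # perceptron a
pos_b = positive((0, 2, 1), pts)   # perceptron b

# Every point that b labels positive is also labeled positive by a
b_subset_of_a = bool(np.all(pos_a[pos_b]))
# Some points are positive for a but not for b (the strip between the lines)
a_has_extra = bool(np.any(pos_a & ~pos_b))

print("b's positive points are all positive for a:", b_subset_of_a)
print("a has additional positive points:", a_has_extra)
```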

Exercise 2.10

Level: * (Easy)

Exercise Type: Novel

Question

Consider a simple linear regression model [math]\displaystyle{ \hat{Y} = X\theta }[/math].

a). Derive the vectorized form of the SSE (loss function) in terms of [math]\displaystyle{ Y }[/math], [math]\displaystyle{ X }[/math] and [math]\displaystyle{ \theta }[/math].

b). Find the value of [math]\displaystyle{ \theta }[/math] that minimizes the SSE (recall that [math]\displaystyle{ \theta }[/math] contains the weights of the linear regression).

Solution

a).

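[math]\displaystyle{ \begin{align*} SSE &= \sum_i (y_i - \hat{y}_i)^2 \\ &= (Y - \hat{Y})^T (Y - \hat{Y}) \\ &= (Y - X\theta)^T (Y - X\theta) \\ &= Y^TY - Y^TX\theta - (X\theta)^T Y + (X\theta)^T(X\theta) \\ &= Y^TY - 2Y^TX\theta + (X\theta)^T(X\theta) \end{align*} }[/math]

The last step uses the fact that [math]\displaystyle{ Y^TX\theta }[/math] is a scalar and therefore equals its transpose [math]\displaystyle{ (X\theta)^TY }[/math].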

b).

[math]\displaystyle{ \begin{align*} 0 &= \frac{\partial SSE}{\partial \theta} \\ 0 &= \frac{\partial}{\partial \theta} \Big[ Y^TY - 2Y^TX\theta + (X\theta)^T(X\theta)\Big] \\ 0 &= 0 - 2X^TY + 2X^TX\theta \\ 2X^TX\theta &= 2X^TY \\ \theta &= (X^TX)^{-1}X^TY \end{align*} }[/math]

The last line assumes that [math]\displaystyle{ X^TX }[/math] is invertible.
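The closed-form solution [math]\displaystyle{ \theta = (X^TX)^{-1}X^TY }[/math] can be checked numerically. The sketch below uses synthetic data with made-up true coefficients and noise level, solves the normal equations, and compares the result with NumPy's least-squares solver.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 50
x = rng.uniform(0, 10, size=n)
X = np.column_stack([np.ones(n), x])   # design matrix with an intercept column
theta_true = np.array([2.0, 0.5])      # assumed coefficients for the synthetic data
Y = X @ theta_true + rng.normal(scale=0.1, size=n)

# Solve X^T X theta = X^T Y (numerically preferable to forming the inverse)
theta_hat = np.linalg.solve(X.T @ X, X.T @ Y)

# Reference solution from NumPy's least-squares routine
theta_lstsq, *_ = np.linalg.lstsq(X, Y, rcond=None)

# The SSE gradient -2 X^T Y + 2 X^T X theta should vanish at the minimizer
gradient = -2 * X.T @ Y + 2 * X.T @ X @ theta_hat

print("normal equations:", theta_hat)
print("lstsq:           ", theta_lstsq)
```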

Exercise 2.11

Level: Moderate

Exercise Types: Novel

Question

Deep learning models often face challenges during training due to the vanishing gradient problem, especially when using sigmoid or tanh activation functions.

(a) Describe the vanishing gradient problem and its impact on the training of deep networks.

(b) Explain how the introduction of ReLU (Rectified Linear Unit) activation function mitigates this problem.

(c) Discuss one potential downside of using ReLU and propose an alternative activation function that addresses this limitation.

Solution

(a) Vanishing Gradient Problem: The vanishing gradient problem occurs when the gradients of the loss function become extremely small as they are propagated back through the layers of a deep network. This leads to:

- Slow or stagnant weight updates in early layers.

- Difficulty in effectively training deep models.

This issue is particularly pronounced with activation functions like sigmoid and tanh, where gradients approach zero as inputs saturate.

(b) Role of ReLU in Mitigation: ReLU, defined as [math]\displaystyle{ f(x) = \max(0, x) }[/math], mitigates the vanishing gradient problem by:

- Producing a constant gradient of 1 for positive inputs, which maintains effective weight updates.

- Introducing sparsity, as neurons deactivate (output zero) for negative inputs, which improves model efficiency.

(c) Downside of ReLU and Alternatives: One downside of ReLU is the "dying ReLU" problem, where neurons output zero for all inputs, effectively becoming inactive. This can happen when weights are poorly initialized or during training.

Alternative: Leaky ReLU allows a small gradient for negative inputs, defined as [math]\displaystyle{ f(x) = x }[/math] for [math]\displaystyle{ x \gt 0 }[/math] and [math]\displaystyle{ f(x) = \alpha x }[/math] for [math]\displaystyle{ x \leq 0 }[/math], where [math]\displaystyle{ \alpha }[/math] is a small positive constant. This prevents neurons from dying, ensuring all neurons contribute to learning.
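The difference between the two gradients can be seen in a small sketch. The value [math]\displaystyle{ \alpha = 0.01 }[/math] and the sample pre-activations are assumptions chosen for illustration.

```python
import numpy as np

def relu_grad(x):
    # ReLU passes no gradient for non-positive inputs
    return np.where(x > 0, 1.0, 0.0)

def leaky_relu(x, alpha=0.01):
    return np.where(x > 0, x, alpha * x)

def leaky_relu_grad(x, alpha=0.01):
    # Leaky ReLU keeps a small gradient alpha for non-positive inputs
    return np.where(x > 0, 1.0, alpha)

# A neuron whose pre-activation is negative for every sample ("dead" under ReLU)
pre_activations = np.array([-3.0, -1.0, -0.2])

print("ReLU gradients:      ", relu_grad(pre_activations))
print("Leaky ReLU gradients:", leaky_relu_grad(pre_activations))
```

Under ReLU such a neuron receives zero gradient and cannot recover, while under Leaky ReLU it still receives a small gradient.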

Exercise 3.1

Level: ** (Moderate)

Exercise Type: Novel

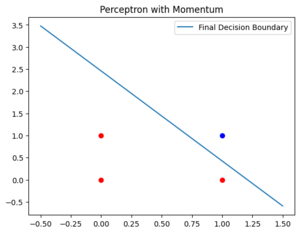

Question

Implement the perceptron learning algorithm with momentum for the AND function. Plot the decision boundary after 10 epochs of training with a learning rate of 0.1 and a momentum of 0.9.

Solution

import numpy as np

import matplotlib.pyplot as plt

# Dataset

data = np.array([

    [0, 0, -1],

    [0, 1, -1],

    [1, 0, -1],

    [1, 1, 1]

])

# Initialize weights, learning rate, and momentum

weights = np.random.rand(3)

learning_rate = 0.1

momentum = 0.9

epochs = 10

previous_update = np.zeros(3)

# Add bias term to data

X = np.hstack((np.ones((data.shape[0], 1)), data[:, :2]))

y = data[:, 2]

# Training loop

for epoch in range(epochs):

    for i in range(len(X)):

        prediction = np.sign(np.dot(weights, X[i]))

        if prediction != y[i]:

            update = learning_rate * y[i] * X[i] + momentum * previous_update

            weights += update

            previous_update = update

# Plot final decision boundary

x_vals = np.linspace(-0.5, 1.5, 100)

y_vals = -(weights[1] * x_vals + weights[0]) / weights[2]

plt.plot(x_vals, y_vals, label='Final Decision Boundary')

# Plot dataset

for point in data:

    color = 'blue' if point[2] == 1 else 'red'

    plt.scatter(point[0], point[1], color=color)

plt.title('Perceptron with Momentum')

plt.legend()

plt.show()

Exercise 3.2

Level: ** (Moderate)

Exercise Types: Novel

Question

Write a Python program showing how the backpropagation algorithm works for a neural network with 2 inputs, one hidden layer of 2 neurons, and 1 output neuron. Train the network on the XOR problem:

- Input [0,0] -> Output 0

- Input [0,1] -> Output 1

- Input [1,0] -> Output 1

- Input [1,1] -> Output 0

Use the sigmoid activation function. Use Mean Square Error as the loss function.

Solution

import numpy as np

# -------------------------

# 1. Define Activation Functions

# -------------------------

def sigmoid(x):

    """

    Sigmoid activation function.

    """

    return 1 / (1 + np.exp(-x))

def sigmoid_derivative(x):

    """

    Derivative of the sigmoid function.

    Here, 'x' is assumed to be sigmoid(x),

    meaning x is already the output of the sigmoid.

    """

    return x * (1 - x)

# -------------------------

# 2. Prepare the Training Data (XOR)

# -------------------------

# Input data (4 samples, each with 2 features)

X = np.array([

    [0, 0],

    [0, 1],

    [1, 0],

    [1, 1]

])

# Target labels (4 samples, each is a single output)

y = np.array([

    [0],

    [1],

    [1],

    [0]

])

# -------------------------

# 3. Initialize Network Parameters

# -------------------------

# Weights for input -> hidden (shape: 2x2)

W1 = np.random.randn(2, 2)

# Bias for hidden layer (shape: 1x2)

b1 = np.random.randn(1, 2)

# Weights for hidden -> output (shape: 2x1)

W2 = np.random.randn(2, 1)

# Bias for output layer (shape: 1x1)

b2 = np.random.randn(1, 1)

# Hyperparameters

learning_rate = 0.1

num_epochs = 10000

# -------------------------

# 4. Training Loop

# -------------------------

for epoch in range(num_epochs):

    # 4.1. Forward Pass

    # - Compute hidden layer output

    hidden_input = np.dot(X, W1) + b1 # shape: (4, 2)

    hidden_output = sigmoid(hidden_input)

    # - Compute final output

    final_input = np.dot(hidden_output, W2) + b2 # shape: (4, 1)

    final_output = sigmoid(final_input)

    # 4.2. Compute Loss (Mean Squared Error)

    error = y - final_output # shape: (4, 1)

    loss = np.mean(error**2)

    # 4.3. Backpropagation

    # - Output delta: error times the sigmoid derivative. Since error = y - final_output,

    #   this is the negative loss gradient w.r.t. final_input, up to a constant factor

    d_final_output = error * sigmoid_derivative(final_output) # shape: (4, 1)

    # - Propagate error back to hidden layer

    error_hidden_layer = np.dot(d_final_output, W2.T) # shape: (4, 2)

    d_hidden_output = error_hidden_layer * sigmoid_derivative(hidden_output) # shape: (4, 2)

    # 4.4. Gradient Descent Updates (added, because the deltas already carry the minus sign)

    # - Update W2, b2

    W2 += learning_rate * np.dot(hidden_output.T, d_final_output) # shape: (2, 1)

    b2 += learning_rate * np.sum(d_final_output, axis=0, keepdims=True) # shape: (1, 1)

    # - Update W1, b1

    W1 += learning_rate * np.dot(X.T, d_hidden_output) # shape: (2, 2)

    b1 += learning_rate * np.sum(d_hidden_output, axis=0, keepdims=True) # shape: (1, 2)

    # Print loss every 1000 epochs

    if epoch % 1000 == 0:

        print(f"Epoch {epoch}, Loss: {loss:.6f}")

# -------------------------

# 5. Testing / Final Outputs

# -------------------------

print("\nTraining complete.")

print("Final loss:", loss)

# Feedforward one last time to see predictions

hidden_output = sigmoid(np.dot(X, W1) + b1)

final_output = sigmoid(np.dot(hidden_output, W2) + b2)

print("\nOutput after training:")

for i, inp in enumerate(X):

    print(f"Input: {inp} -> Predicted: {final_output[i][0]:.4f} (Target: {y[i][0]})")

Output:

Epoch 0, Loss: 0.257193

Epoch 1000, Loss: 0.247720

Epoch 2000, Loss: 0.226962

Epoch 3000, Loss: 0.191367

Epoch 4000, Loss: 0.162169

Epoch 5000, Loss: 0.034894

Epoch 6000, Loss: 0.012459

Epoch 7000, Loss: 0.007127

Epoch 8000, Loss: 0.004890

Epoch 9000, Loss: 0.003687

Training complete.

Final loss: 0.0029435579049382756

Output after training:

Input: [0 0] -> Predicted: 0.0598 (Target: 0)

Input: [0 1] -> Predicted: 0.9461 (Target: 1)

Input: [1 0] -> Predicted: 0.9506 (Target: 1)

Input: [1 1] -> Predicted: 0.0534 (Target: 0)

Exercise 3.3

Level: * (Easy)

Exercise Types: Novel

Question

Implement 4 iterations of gradient descent with and without momentum for the function [math]\displaystyle{ f(x) = x^2 + 2 }[/math] with learning rate [math]\displaystyle{ \eta=0.1 }[/math], momentum [math]\displaystyle{ \gamma=0.9 }[/math], starting value of [math]\displaystyle{ x_0=2 }[/math], starting velocity of [math]\displaystyle{ v_0=0 }[/math]. Comment on the differences.

Solution

Note that [math]\displaystyle{ f'(x) = 2x }[/math]

Without momentum:

Iteration 1: [math]\displaystyle{ x_1 = x_0 - \eta* f'(x_0) = 2 - 0.1*2*2 = 1.6 }[/math]

Iteration 2: [math]\displaystyle{ x_2 = x_1 - \eta* f'(x_1) = 1.6 - 0.1*2*1.6 = 1.28 }[/math]

Iteration 3: [math]\displaystyle{ x_3 = x_2 - \eta* f'(x_2) = 1.28 - 0.1*2*1.28 = 1.024 }[/math]

Iteration 4: [math]\displaystyle{ x_4 = x_3 - \eta* f'(x_3) =1.024 - 0.1*2*1.024 = 0.8192 }[/math]

With momentum:

Iteration 1: [math]\displaystyle{ v_1 = \gamma*v_0 + \eta * f'(x_0) = 0.9*0 + 0.1*2*2 = 0.4, x_1 = x_0-v_1 = 2-0.4 = 1.6 }[/math]

Iteration 2: [math]\displaystyle{ v_2 = \gamma*v_1 + \eta * f'(x_1) = 0.9*0.4+0.1*2*1.6 = 0.68, x_2 = x_1-v_2 = 1.6-0.68 = 0.92 }[/math]

Iteration 3: [math]\displaystyle{ v_3 = \gamma*v_2 + \eta * f'(x_2) = 0.9*0.68 + 0.1*2*0.92 = 0.796, x_3 = x_2-v_3 = 0.92-0.796 = 0.124 }[/math]

Iteration 4: [math]\displaystyle{ v_4 = \gamma*v_3 + \eta * f'(x_3) = 0.9*0.796 + 0.1*2*0.124 = 0.7412, x_4 = x_3-v_4 = 0.124 - 0.7412 = -0.6172 }[/math]

By observation, we know that the minimum of [math]\displaystyle{ f(x)=x^2+2 }[/math] occurs at [math]\displaystyle{ x=0 }[/math]. We can see that with momentum, the algorithm moves towards the minimum much faster than without momentum as past gradients are accumulated, leading to larger steps. However, we also can see that momentum can cause the algorithm to overshoot the minimum since we are taking larger steps.

Benefits of momentum: Momentum is a technique used in optimization to accelerate convergence. Inspired by physical momentum, it helps in navigating the optimization landscape.

By remembering the direction of previous gradients, which are accumulated into a running average (the velocity), momentum helps guide the updates more smoothly, leading to faster progress. This running average allows the optimizer to maintain a consistent direction even if individual gradients fluctuate. Additionally, momentum can help the algorithm escape from shallow local minima by carrying the updates through flat regions. This prevents the optimizer from getting stuck in small, unimportant minima and helps it continue moving toward a better local minimum.

Additional Comment: The running-average description is only an aid to intuition. At time t, the velocity is a weighted sum of all previous gradients, and the weight on a gradient shrinks geometrically the further back in time it was computed. With the update [math]\displaystyle{ v_t = \gamma v_{t-1} + \eta f'(x_{t-1}) }[/math] used above, the [math]\displaystyle{ f'(x_0) }[/math] term enters [math]\displaystyle{ v_t }[/math] with coefficient [math]\displaystyle{ \eta \gamma^{t-1} }[/math].
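The hand calculations above can be reproduced with a short script. This is a minimal sketch: the helper names `grad`, `gradient_descent`, and `momentum_descent` are chosen here for illustration and do not come from the lecture.

```python
def grad(x):
    """Derivative of f(x) = x^2 + 2."""
    return 2.0 * x

def gradient_descent(x0, eta, steps):
    """Plain gradient descent: x_{t+1} = x_t - eta * f'(x_t)."""
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] - eta * grad(xs[-1]))
    return xs

def momentum_descent(x0, v0, eta, gamma, steps):
    """Momentum: v_{t+1} = gamma * v_t + eta * f'(x_t), x_{t+1} = x_t - v_{t+1}."""
    xs, v = [x0], v0
    for _ in range(steps):
        v = gamma * v + eta * grad(xs[-1])
        xs.append(xs[-1] - v)
    return xs

plain = gradient_descent(x0=2.0, eta=0.1, steps=4)
with_momentum = momentum_descent(x0=2.0, v0=0.0, eta=0.1, gamma=0.9, steps=4)

print([round(x, 4) for x in plain])          # [2.0, 1.6, 1.28, 1.024, 0.8192]
print([round(x, 4) for x in with_momentum])  # [2.0, 1.6, 0.92, 0.124, -0.6172]
```

Increasing `steps` shows the momentum iterate oscillating around [math]\displaystyle{ x=0 }[/math] before it settles, while plain gradient descent approaches the minimum monotonically from one side.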

Exercise 3.4

Level: ** (Moderate)

Exercise Types: Novel

Question

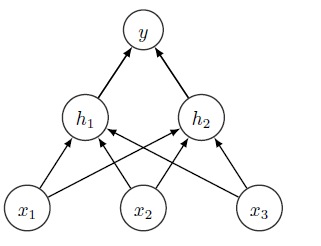

Perform one iteration of forward pass and backward propagation for the following network:

- Input layer: 2 neurons (x₁, x₂)

- Hidden layer: 2 neurons (h₁, h₂)

- Output layer: 1 neuron (ŷ)

- Input-to-Hidden Layer:

w₁₁¹ = 0.15, w₂₁¹ = 0.20, w₁₂¹ = 0.25, w₂₂¹ = 0.30 Bias: b¹ = 0.35 Activation function: sigmoid

- Hidden-to-Output Layer:

w₁₁² = 0.40, w₂₁² = 0.45 Bias: b² = 0.60 Activation function: sigmoid

Input:

- x₁ = 0.05, x₂ = 0.10

- Target output: y = 0.01

- Learning rate: η = 0.5

Solution

Step 1: Forward Pass

1. Hidden Layer Outputs

For neuron h₁:

- [math]\displaystyle{ z₁¹ = w₁₁¹ \cdot x₁ + w₂₁¹ \cdot x₂ + b¹ = 0.15(0.05) + 0.20(0.10) + 0.35 = 0.3775 }[/math]

- [math]\displaystyle{ h₁ = \sigma(z₁¹) = \frac{1}{1 + e^{-0.3775}} \approx 0.5933 }[/math]

For neuron h₂:

- [math]\displaystyle{ z₂¹ = w₁₂¹ \cdot x₁ + w₂₂¹ \cdot x₂ + b¹ = 0.25(0.05) + 0.30(0.10) + 0.35 = 0.3925 }[/math]

- [math]\displaystyle{ h₂ = \sigma(z₂¹) = \frac{1}{1 + e^{-0.3925}} \approx 0.5968 }[/math]

2. Output Layer

- [math]\displaystyle{ z² = w₁₁² \cdot h₁ + w₂₁² \cdot h₂ + b² = 0.40(0.5933) + 0.45(0.5968) + 0.60 \approx 1.1059 }[/math]

- [math]\displaystyle{ \hat{y} = \sigma(z²) = \frac{1}{1 + e^{-1.1059}} \approx 0.7514 }[/math]

Step 2: Compute Error

- [math]\displaystyle{ E = \frac{1}{2} (\hat{y} - y)^2 = \frac{1}{2} (0.7514 - 0.01)^2 \approx 0.2748 }[/math]

Step 3: Backpropagation

3.1: Gradients for Output Layer

1. Gradient w.r.t. output neuron:

- [math]\displaystyle{ \delta² = (\hat{y} - y) \cdot \hat{y} \cdot (1 - \hat{y}) }[/math]

- [math]\displaystyle{ \delta² = (0.7514 - 0.01) \cdot 0.7514 \cdot (1 - 0.7514) \approx 0.1385 }[/math]

2. Update weights and bias for hidden-to-output layer:

- [math]\displaystyle{ w₁₁² = w₁₁² - \eta \cdot \delta² \cdot h₁ = 0.40 - 0.5 \cdot 0.1385 \cdot 0.5933 \approx 0.3589 }[/math]

- [math]\displaystyle{ w₂₁² = w₂₁² - \eta \cdot \delta² \cdot h₂ = 0.45 - 0.5 \cdot 0.1385 \cdot 0.5968 \approx 0.4087 }[/math]

- [math]\displaystyle{ b² = b² - \eta \cdot \delta² = 0.60 - 0.5 \cdot 0.1385 \approx 0.5308 }[/math]

3.2: Gradients for Hidden Layer

1. Gradients for hidden layer neurons (computed with the original hidden-to-output weights 0.40 and 0.45, not the updated ones):

For h₁:

- [math]\displaystyle{ \delta₁ = \delta² \cdot w₁₁² \cdot h₁ \cdot (1 - h₁) }[/math]

- [math]\displaystyle{ \delta₁ = 0.1385 \cdot 0.40 \cdot 0.5933 \cdot (1 - 0.5933) \approx 0.0134 }[/math]

For h₂:

- [math]\displaystyle{ \delta₂ = \delta² \cdot w₂₁² \cdot h₂ \cdot (1 - h₂) }[/math]

- [math]\displaystyle{ \delta₂ = 0.1385 \cdot 0.45 \cdot 0.5968 \cdot (1 - 0.5968) \approx 0.0150 }[/math]

2. Update weights and bias for input-to-hidden layer:

For w₁₁¹:

- [math]\displaystyle{ w₁₁¹ = w₁₁¹ - \eta \cdot \delta₁ \cdot x₁ = 0.15 - 0.5 \cdot 0.0134 \cdot 0.05 \approx 0.1497 }[/math]

For w₂₁¹:

- [math]\displaystyle{ w₂₁¹ = w₂₁¹ - \eta \cdot \delta₁ \cdot x₂ = 0.20 - 0.5 \cdot 0.0134 \cdot 0.10 \approx 0.1993 }[/math]

For w₁₂¹:

- [math]\displaystyle{ w₁₂¹ = w₁₂¹ - \eta \cdot \delta₂ \cdot x₁ = 0.25 - 0.5 \cdot 0.0150 \cdot 0.05 \approx 0.2496 }[/math]

For w₂₂¹:

- [math]\displaystyle{ w₂₂¹ = w₂₂¹ - \eta \cdot \delta₂ \cdot x₂ = 0.30 - 0.5 \cdot 0.0150 \cdot 0.10 \approx 0.2993 }[/math]

For b¹ (shared by both hidden neurons, so both gradients contribute):

- [math]\displaystyle{ b¹ = b¹ - \eta \cdot (\delta₁ + \delta₂) = 0.35 - 0.5 \cdot (0.0134 + 0.0150) \approx 0.3358 }[/math]

This completes one iteration of forward and backward propagation.
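The iteration can be checked numerically with a few lines of Python. This is a sketch under the exercise's setup (sigmoid activations, squared-error loss with the factor 1/2, one bias shared by both hidden neurons). The variable names are chosen here for illustration. It works in full floating-point precision, so the printed values may differ from hand-rounded figures in the last digit.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Inputs, target, learning rate (from the exercise statement)
x1, x2, y, eta = 0.05, 0.10, 0.01, 0.5
# Input-to-hidden weights and shared bias
w11_1, w21_1, w12_1, w22_1, b_1 = 0.15, 0.20, 0.25, 0.30, 0.35
# Hidden-to-output weights and bias
w11_2, w21_2, b_2 = 0.40, 0.45, 0.60

# Forward pass
h1 = sigmoid(w11_1 * x1 + w21_1 * x2 + b_1)
h2 = sigmoid(w12_1 * x1 + w22_1 * x2 + b_1)
y_hat = sigmoid(w11_2 * h1 + w21_2 * h2 + b_2)
E = 0.5 * (y_hat - y) ** 2

# Backward pass: deltas use the weights from before the update
delta_out = (y_hat - y) * y_hat * (1.0 - y_hat)
delta_h1 = delta_out * w11_2 * h1 * (1.0 - h1)
delta_h2 = delta_out * w21_2 * h2 * (1.0 - h2)

# Gradient descent updates
updated = {
    "w11_2": w11_2 - eta * delta_out * h1,
    "w21_2": w21_2 - eta * delta_out * h2,
    "b_2": b_2 - eta * delta_out,
    "w11_1": w11_1 - eta * delta_h1 * x1,
    "w21_1": w21_1 - eta * delta_h1 * x2,
    "w12_1": w12_1 - eta * delta_h2 * x1,
    "w22_1": w22_1 - eta * delta_h2 * x2,
    "b_1": b_1 - eta * (delta_h1 + delta_h2),
}

print(f"h1={h1:.4f} h2={h2:.4f} y_hat={y_hat:.4f} E={E:.4f}")
print(f"delta_out={delta_out:.4f} delta_h1={delta_h1:.4f} delta_h2={delta_h2:.4f}")
for name, value in updated.items():
    print(f"{name} = {value:.4f}")
```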

Exercise 3.5

Level: * (Easy)

Exercise Types: Novel

Question

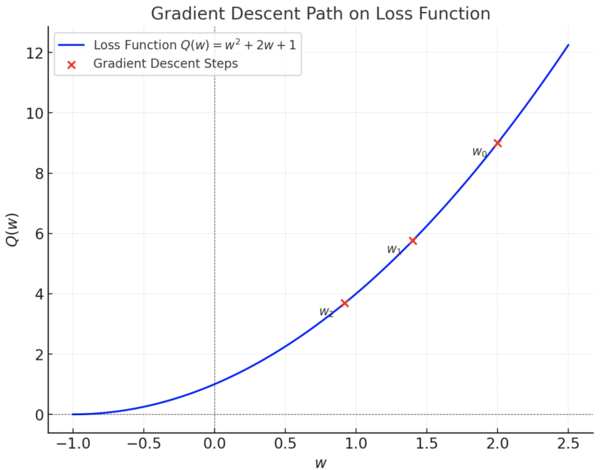

Consider the loss function [math]\displaystyle{ Q(w) = w^2 + 2w + 1 }[/math]. Compute the gradient of [math]\displaystyle{ Q(w) }[/math]. Starting from [math]\displaystyle{ w_0 = 2 }[/math], perform two iterations of stochastic gradient descent using a learning rate [math]\displaystyle{ \rho = 0.1 }[/math].

Solution

Compute the gradient at [math]\displaystyle{ w_0 }[/math]: [math]\displaystyle{ \nabla Q(w_0) = \frac{d}{dw_0}(w_0^2 + 2w_0 + 1) = 2w_0 + 2 = 2(2) + 2 = 4 + 2 = 6 }[/math]

Update the weight using SGD: [math]\displaystyle{ w_1 = w_0 - \rho \cdot \nabla Q(w_0) = 2 - 0.1 \cdot 6 = 2 - 0.6 = 1.4 }[/math]