stat940F24

Regularization in Deep Learning

Introduction

Regularization is a fundamental concept in machine learning, particularly in deep learning, where models with a high number of parameters are prone to overfitting. Overfitting occurs when a model learns the noise in the training data rather than the underlying distribution, leading to poor generalization on unseen data. Regularization techniques aim to constrain the model’s capacity, thus preventing overfitting and improving generalization. This chapter will explore various regularization methods in detail, complete with mathematical formulations, intuitive explanations, and practical implementations.

Classical Regularization: Parameter Norm Penalties

L2 Regularization (Weight Decay)

Overview

L2 parameter regularization, commonly known as weight decay, is a technique used to prevent overfitting in machine learning models by penalizing large weights. This penalty helps in constraining the model's complexity.

The regularization term is given by:

[math]\displaystyle{ \mathcal{R}(w) = \frac{\lambda}{2} \|w\|_2^2 }[/math]

where:

- [math]\displaystyle{ \lambda }[/math] is the regularization strength (a hyperparameter),

- [math]\displaystyle{ w }[/math] represents the model weights,

- [math]\displaystyle{ \|w\|_2 }[/math] denotes the L2 norm of the weight vector.

Gradient of the Total Objective Function

The gradient of the total objective function, which includes both the loss and the regularization term, is given by:

[math]\displaystyle{ \nabla_w \mathcal{L}_{\text{total}}(w; X, y) = \lambda w + \nabla_w \mathcal{L}(w; X, y) }[/math]

The weight update rule with L2 regularization using gradient descent is:

[math]\displaystyle{ w := w - \eta (\lambda w + \nabla_w \mathcal{L}(w; X, y)) }[/math]

where [math]\displaystyle{ \eta }[/math] is the learning rate.
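The update rule above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the least-squares data, the function names `sgd_step_l2` and `mse_grad`, and the values of the learning rate and of the regularization strength are all assumptions chosen for the example.

```python
import numpy as np

def sgd_step_l2(w, grad_loss, lr=0.1, lam=0.01):
    """One gradient-descent step on loss + (lam / 2) * ||w||^2."""
    return w - lr * (lam * w + grad_loss)

# Toy least-squares problem (hypothetical data, for illustration only).
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = X @ np.array([1.0, -2.0, 0.5])

def mse_grad(w):
    # Gradient of (1 / 2n) * ||Xw - y||^2 with respect to w.
    return X.T @ (X @ w - y) / len(y)

w_plain = np.zeros(3)
w_decay = np.zeros(3)
for _ in range(500):
    w_plain = sgd_step_l2(w_plain, mse_grad(w_plain), lam=0.0)
    w_decay = sgd_step_l2(w_decay, mse_grad(w_decay), lam=0.5)

# The decayed solution has a smaller norm than the unregularized one.
print(np.linalg.norm(w_plain), np.linalg.norm(w_decay))
```

With `lam=0.0` the iteration recovers the generating weights, while a positive `lam` pulls the solution toward the origin.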

Quadratic Approximation to the Objective Function

Consider a quadratic approximation to the objective function:

[math]\displaystyle{ \mathcal{L}(w) \approx \mathcal{L}(w^*) + \frac{1}{2} (w - w^*)^\top H (w - w^*) }[/math]

where:

- [math]\displaystyle{ w^* }[/math] is the optimum weight vector,

- [math]\displaystyle{ H }[/math] is the Hessian matrix of second derivatives.

The modified gradient equation becomes:

[math]\displaystyle{ \lambda w + H (w - w^*) = 0 }[/math]

Solving for [math]\displaystyle{ w }[/math], we get:

[math]\displaystyle{ w = (H + \lambda I)^{-1} H w^* }[/math]

where [math]\displaystyle{ I }[/math] is the identity matrix.

Eigenvalue Decomposition

Assume [math]\displaystyle{ H = Q \Lambda Q^\top }[/math] where [math]\displaystyle{ Q }[/math] is the orthogonal matrix of eigenvectors and [math]\displaystyle{ \Lambda }[/math] is the diagonal matrix of eigenvalues.

Then the weight vector can be expressed as:

[math]\displaystyle{ w = Q(\Lambda + \lambda I)^{-1} \Lambda Q^\top w^* }[/math]

The effect of weight decay is to rescale the coefficients of the eigenvectors. The [math]\displaystyle{ i }[/math]-th component is rescaled by a factor of [math]\displaystyle{ \frac{\lambda_i}{\lambda_i + \lambda} }[/math], where [math]\displaystyle{ \lambda_i }[/math] is the [math]\displaystyle{ i }[/math]-th eigenvalue.

- If [math]\displaystyle{ \lambda_i \gg \lambda }[/math], the factor is close to 1 and the effect of regularization is relatively small.

- If [math]\displaystyle{ \lambda_i \ll \lambda }[/math], the factor is close to 0 and the component is shrunk to nearly zero magnitude.
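As a quick numerical check of the rescaling, the sketch below builds a random symmetric positive definite matrix to stand in for the Hessian and compares the closed-form solution with the eigenvector form. The matrix, the optimum `w_star`, and the value of `lam` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(4, 4))
H = A @ A.T + 0.1 * np.eye(4)      # symmetric positive definite "Hessian" (hypothetical)
w_star = rng.normal(size=4)        # unregularized optimum (hypothetical)
lam = 0.5                          # regularization strength

# Closed form: w = (H + lam I)^{-1} H w*
w_reg = np.linalg.solve(H + lam * np.eye(4), H @ w_star)

# Same solution through the eigendecomposition H = Q diag(eigvals) Q^T
eigvals, Q = np.linalg.eigh(H)
shrink = eigvals / (eigvals + lam)           # per-direction rescaling factors
w_eig = Q @ (shrink * (Q.T @ w_star))

print(shrink)                      # factors near 1 for large eigenvalues, near 0 for small ones
print(np.allclose(w_reg, w_eig))
```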

Effective Number of Parameters

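Directions along which the parameters contribute significantly to reducing the objective function are preserved relatively intact. In directions that do not contribute much, a small eigenvalue of the Hessian indicates that movement in this direction will not significantly increase the gradient, so the corresponding components of the weight vector are decayed away.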

The effective number of parameters can be defined as:

[math]\displaystyle{ \text{Effective Number of Parameters} = \sum_i \frac{\lambda_i}{\lambda_i + \lambda} }[/math]

As [math]\displaystyle{ \lambda }[/math] increases, the effective number of parameters decreases, which reduces the model's complexity.
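The sum can be evaluated directly for a given spectrum. The eigenvalues below are made up for illustration; the function name `effective_num_params` is likewise an assumption.

```python
import numpy as np

def effective_num_params(eigvals, lam):
    """Sum of lambda_i / (lambda_i + lam) over the Hessian eigenvalues."""
    eigvals = np.asarray(eigvals, dtype=float)
    return float(np.sum(eigvals / (eigvals + lam)))

eigvals = [10.0, 1.0, 0.1, 0.01]   # hypothetical Hessian eigenvalues
for lam in [0.0, 0.1, 1.0, 10.0]:
    print(lam, effective_num_params(eigvals, lam))
```

With no regularization every direction counts fully, so the sum equals the number of parameters; as the regularization strength grows, directions with small eigenvalues stop contributing first.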

Dataset Augmentation

Overview

Dataset augmentation is a technique used to improve the generalization ability of machine learning models by artificially increasing the size of the training dataset. This is particularly useful when the amount of available data is limited. The idea is to create new, synthetic data by applying various transformations to the original dataset.

- Key Idea: The best way to make a machine learning model generalize better is to train it on more data. When the amount of available data is limited, creating synthetic data (e.g., by applying transformations like rotation, translation, and noise addition) and adding it to the training set can be effective.

- Practical Example: Operations like translating training images a few pixels in each direction can greatly improve generalization. Another approach is to train neural networks with random noise applied to their inputs, which also serves as a form of dataset augmentation. This technique can be applied not only to the input layer but also to hidden layers, effectively performing dataset augmentation at multiple levels of abstraction.
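A minimal sketch of the two operations mentioned above, translation by a few pixels and additive input noise, is given below. The helper names, the zero fill at the borders, and the default shift and noise levels are assumptions made for the example.

```python
import numpy as np

def _shift_slices(n, d):
    # Source and destination slices for shifting an axis of length n by d pixels.
    return (slice(0, n - d), slice(d, n)) if d >= 0 else (slice(-d, n), slice(0, n + d))

def translate(img, dy, dx):
    """Shift a 2-D image by (dy, dx) pixels, filling exposed borders with zeros."""
    h, w = img.shape
    out = np.zeros_like(img)
    ys, yd = _shift_slices(h, dy)
    xs, xd = _shift_slices(w, dx)
    out[yd, xd] = img[ys, xs]
    return out

def augment(img, rng, max_shift=2, noise_std=0.05):
    """Random small translation followed by additive Gaussian noise."""
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    shifted = translate(img, int(dy), int(dx))
    return shifted + rng.normal(scale=noise_std, size=img.shape)

rng = np.random.default_rng(0)
img = np.arange(16.0).reshape(4, 4)
print(augment(img, rng).shape)
```

Each call to `augment` yields a different synthetic example carrying the same label as the original image.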

---

Noise Injection

Overview

Noise injection is a regularization strategy that can be applied in two main ways:

1. Adding Noise to the Input: This method can be interpreted as a form of dataset augmentation and also has a direct connection to traditional regularization methods.

2. Adding Noise to the Weights: This method is primarily used in the context of recurrent neural networks and can be viewed as a stochastic implementation of Bayesian inference over the weights.

Mathematical Proof for Injecting Noise at the Input

Consider a regression setting where we have an input-output pair \( (x, y) \) and the goal is to minimize the expected loss function:

[math]\displaystyle{ J = \mathbb{E}_{x, y} \left[(f(x) - y)^2\right] }[/math]

Now, suppose we inject noise into the input \( x \), where the noise \( \epsilon \) is drawn from a distribution with mean zero (e.g., Gaussian noise \( \epsilon \sim \mathcal{N}(0, \sigma^2) \)). The modified objective function with noise-injected inputs becomes:

[math]\displaystyle{ J_{\text{noise}} = \mathbb{E}_{x, y, \epsilon} \left[(f(x + \epsilon) - y)^2\right] }[/math]

To understand the effect of noise injection, we can expand the function \( f(x + \epsilon) \) around \( x \) using a Taylor series:

[math]\displaystyle{ f(x + \epsilon) = f(x) + \epsilon^\top \nabla_x f(x) + \frac{1}{2} \epsilon^\top \nabla_x^2 f(x) \epsilon + \mathcal{O}(\|\epsilon\|^3) }[/math]

Since the expectation of the noise \( \epsilon \) is zero:

[math]\displaystyle{ \mathbb{E}[\epsilon] = 0 }[/math]

and assuming that the noise is isotropic with covariance matrix \( \sigma^2 I \), the expectation of the second-order term becomes:

[math]\displaystyle{ \mathbb{E}[\epsilon \epsilon^\top] = \sigma^2 I }[/math]

Substituting the Taylor expansion into the objective function, expanding the square, and taking the expectation over \( \epsilon \) (the terms linear in \( \epsilon \) vanish because \( \mathbb{E}[\epsilon] = 0 \)) gives:

[math]\displaystyle{ J_{\text{noise}} = \mathbb{E}_{x, y} \left[(f(x) - y)^2\right] + \sigma^2 \mathbb{E}_{x, y} \left[\|\nabla_x f(x)\|^2\right] + \sigma^2 \mathbb{E}_{x, y} \left[(f(x) - y) \operatorname{tr}\left(\nabla_x^2 f(x)\right)\right] + \mathcal{O}(\sigma^4) }[/math]

The gradient term comes from \( \mathbb{E}_\epsilon[(\epsilon^\top \nabla_x f(x))^2] = \sigma^2 \|\nabla_x f(x)\|^2 \). The Hessian term is usually dropped: near a minimum of \( J \), \( f(x) \) is close to the conditional mean of \( y \) given \( x \), so the residual \( f(x) - y \) averages to approximately zero. What remains is the original objective function plus a regularization term that penalizes large gradients of the function \( f(x) \). Specifically, the added term [math]\displaystyle{ \sigma^2 \mathbb{E}_{x, y} \left[\|\nabla_x f(x)\|^2\right] }[/math] reduces the sensitivity of the network's output to small variations in its input.

Key Result:

For small noise variance \( \sigma^2 \), minimizing the loss function with noise-injected input is, to leading order, equivalent to minimizing the original loss function with an additional regularization term that penalizes large gradients:

[math]\displaystyle{ J_{\text{noise}} \approx J + \sigma^2 \mathbb{E}_{x, y} \left[\|\nabla_x f(x)\|^2\right] }[/math]

This regularization term effectively reduces the sensitivity of the output with respect to small changes in the input \( x \), which is beneficial in avoiding overfitting.

Connection to Weight Decay:

In linear models, where \( f(x) = w^\top x \), the gradient \( \nabla_x f(x) \) is simply the weight vector \( w \) and the Hessian is zero, so the expansion is exact. Therefore, the regularization term becomes:

[math]\displaystyle{ \sigma^2 \|w\|^2 }[/math]

which is equivalent to L2 regularization or weight decay.
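The link to weight decay can be checked numerically for a linear model. The sketch below uses a Monte Carlo estimate with synthetic data; the weights, the data-generating process, and the noise level are hypothetical. It compares the measured gap between the noisy and clean losses with \( \sigma^2 \|w\|^2 \), the value obtained by expanding the square directly for this loss, which carries no factor of 1/2.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d, sigma = 200_000, 3, 0.3
w = np.array([1.0, -2.0, 0.5])                 # fixed linear model f(x) = w^T x (hypothetical)
X = rng.normal(size=(n, d))
y = X @ np.array([0.8, -1.5, 0.0]) + 0.1 * rng.normal(size=n)

eps = rng.normal(scale=sigma, size=(n, d))     # isotropic input noise, covariance sigma^2 I
J = np.mean((X @ w - y) ** 2)                  # clean objective
J_noise = np.mean(((X + eps) @ w - y) ** 2)    # objective with noise-injected inputs

gap = J_noise - J
penalty = sigma**2 * np.sum(w**2)              # weight-decay-style term
print(gap, penalty)                            # the two agree up to Monte Carlo error
```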

Manifold Tangent Classifier

Overview

The Manifold Tangent Classifier (MTC) is a classification technique that leverages the idea that data often lies on a lower-dimensional manifold within the high-dimensional input space. The key assumption is that examples on the same manifold share the same category, and the classifier should be invariant to local factors of variation that correspond to movements on the manifold.

- Key Idea: The classifier should be invariant to variations along the manifold while being sensitive to changes that move the data off the manifold.

Tangent Propagation Algorithm

One approach to achieve invariance to manifold variations is to use the Tangent-Prop algorithm (Simard et al., 1992). The main idea is to add a penalty to the loss function that encourages the neural network's output to be locally invariant to known factors of variation. This is achieved by requiring the gradient of the output with respect to the input to be orthogonal to the known manifold tangent vectors \( v_i \) at each point \( x \).

The regularization term can be expressed as:

[math]\displaystyle{ \text{Regularizer} = \lambda \sum_{i} \left(\frac{\partial f(x)}{\partial x} \cdot v_i \right)^2 }[/math]

where:

- \( \frac{\partial f(x)}{\partial x} \) is the gradient of the neural network output with respect to the input,

- \( v_i \) are the known tangent vectors of the manifold,

- \( \lambda \) is the regularization strength.

This regularization ensures that the directional derivative of \( f(x) \) in the directions \( v_i \) is small, promoting invariance along the manifold.
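For a scalar-output model, the penalty reduces to a sum of squared directional derivatives. The sketch below assumes the input gradient is available as a vector (here from a hypothetical linear model, whose gradient is its weight vector) and that the tangent vectors are stacked as rows of a matrix.

```python
import numpy as np

def tangent_prop_penalty(grad_f, tangents, lam=1.0):
    """lam * sum_i (grad_f . v_i)^2 for a scalar-output model at one input x."""
    directional = tangents @ grad_f          # one directional derivative per tangent vector
    return lam * float(np.sum(directional ** 2))

# Hypothetical linear model f(x) = w^T x, whose input gradient is w everywhere.
w = np.array([1.0, 0.0, 2.0])
tangents = np.array([[0.0, 1.0, 0.0],    # direction the output ignores
                     [1.0, 0.0, 0.0]])   # direction the output is sensitive to
print(tangent_prop_penalty(w, tangents))       # only the second tangent is penalized
print(tangent_prop_penalty(w, tangents[:1]))   # zero: already invariant along this tangent
```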

Manifold Tangent Classifier (MTC)

A more recent approach, introduced by Rifai et al. (2011), eliminates the need to know the tangent vectors a priori. The Manifold Tangent Classifier automatically learns these tangent vectors during training, making it more flexible and applicable to a wider range of problems.

---

Early Stopping as a Form of Regularization

Overview

Early stopping is one of the most commonly used forms of regularization in deep learning. Instead of running the optimization algorithm until it reaches a local minimum of the training error, early stopping involves monitoring the validation error during training and halting the process when the validation error stops improving.

- Key Idea: During training, whenever the error on the validation set improves, a copy of the model parameters is stored. The training is stopped when the validation error has not improved for a predetermined amount of time, and the best model parameters (those that resulted in the lowest validation error) are returned.
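The procedure can be sketched as a generic loop with a patience counter. The function name, the `patience` and `max_steps` parameters, and the toy scalar problem are assumptions for illustration; `train_step` and `val_error` stand in for whatever training update and validation metric are used.

```python
import copy

def train_with_early_stopping(params, train_step, val_error, patience=5, max_steps=1000):
    """Return the parameters with the lowest validation error seen during training."""
    best_params, best_err, since_best = copy.deepcopy(params), val_error(params), 0
    for _ in range(max_steps):
        params = train_step(params)
        err = val_error(params)
        if err < best_err:
            # Validation improved: store a copy of the parameters.
            best_params, best_err, since_best = copy.deepcopy(params), err, 0
        else:
            since_best += 1
            if since_best >= patience:
                break   # no improvement for `patience` consecutive steps
    return best_params, best_err

# Toy problem: training pulls w toward 3, but validation error is lowest near 2.
best_w, best_err = train_with_early_stopping(
    0.0,
    train_step=lambda w: w + 0.25 * (3.0 - w),
    val_error=lambda w: (w - 2.0) ** 2,
)
print(best_w, best_err)
```

The returned parameters come from the step with the best validation error, not from the last step taken.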

Mathematical Formulation

Assume that \( w \) represents the model weights (ignoring bias parameters). We take a quadratic approximation to the objective function \( J(w) \) around the empirically optimal value of the weights \( w^* \):

[math]\displaystyle{ J(w) \approx J(w^*) + \frac{1}{2} (w - w^*)^\top H (w - w^*) }[/math]

where:

- \( H \) is the Hessian matrix of second derivatives.

The gradient of the objective function is:

[math]\displaystyle{ \nabla_w J(w) = H(w - w^*) }[/math]

During training, the parameter vector is updated according to:

[math]\displaystyle{ w^{(t+1)} = w^{(t)} - \eta \nabla_w J(w^{(t)}) }[/math]

Substituting the expression for the gradient:

[math]\displaystyle{ w^{(t+1)} - w^* = (I - \eta H) (w^{(t)} - w^*) }[/math]

where \( \eta \) is the learning rate. If we assume that the initial weights are zero (i.e., \( w^{(0)} = 0 \)), we can express the weight update after \( t \) iterations as:

[math]\displaystyle{ w^{(t)} - w^* = (I - \eta H)^t (w^{(0)} - w^*) }[/math]

If we perform an eigenvalue decomposition of \( H \), we get:

[math]\displaystyle{ H = Q \Lambda Q^\top }[/math]

where \( Q \) is the orthogonal matrix of eigenvectors, and \( \Lambda \) is the diagonal matrix of eigenvalues. The weight update can then be rewritten as:

[math]\displaystyle{ w^{(t)} - w^* = Q (I - \eta \Lambda)^t Q^\top (w^{(0)} - w^*) }[/math]

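Assuming \( w^{(0)} = 0 \) and that \( |1 - \eta \lambda_i| < 1 \) for all eigenvalues \( \lambda_i \), after \( t \) training updates, we have:

[math]\displaystyle{ Q^\top w^{(t)} = [I - (I - \eta \Lambda)^t] Q^\top w^* }[/math]

For comparison, the weight decay solution derived earlier can be written as \( Q^\top w = [I - (\Lambda + \lambda I)^{-1} \lambda] Q^\top w^* \). The two coincide when \( (I - \eta \Lambda)^t = (\Lambda + \lambda I)^{-1} \lambda \). Taking the logarithm of both sides and using the series expansion for \( \log(1 + x) \), it can be shown that, when \( \eta \lambda_i \) and \( \lambda_i / \lambda \) are small, \( t \approx \frac{1}{\eta \lambda} \). That is, the number of training iterations \( t \) is inversely proportional to the L2 regularization parameter \( \lambda \), and the inverse of \( \eta t \) plays the role of the weight decay coefficient.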

Key Insight:

This result shows that early stopping can be interpreted as a form of implicit regularization, where the number of training iterations controls the effective complexity of the model.
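The correspondence can be illustrated numerically under the quadratic approximation. The sketch below compares, for each eigen-direction, the fraction of the optimum reached after a fixed number of gradient steps from zero initialization with the shrinkage factor of weight decay, using the standard small-eigenvalue matching in which the weight decay strength equals the inverse of the learning rate times the number of steps. The eigenvalues, learning rate, and step count are hypothetical.

```python
import numpy as np

eigvals = np.array([0.5, 0.1, 0.02])   # hypothetical Hessian eigenvalues
eta, t = 0.1, 20                       # learning rate and number of gradient steps
lam = 1.0 / (eta * t)                  # matching weight-decay strength

# Fraction of w* recovered along each eigen-direction.
early_stop_factor = 1.0 - (1.0 - eta * eigvals) ** t
weight_decay_factor = eigvals / (eigvals + lam)

print(early_stop_factor)
print(weight_decay_factor)
```

The two sets of factors agree closely for small eigenvalues and only roughly for larger ones, which is consistent with the approximation being a small-eigenvalue argument.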

Label Smoothing

Label smoothing is a technique that prevents a model from becoming over-confident in a specific class by replacing hard one-hot targets with slightly softened ones, so that the model is not forced to fit the labels exactly. This tends to improve generalization.

Suppose the true (one-hot) label is [math]\displaystyle{ y = [0, 1, 0] }[/math]. After applying label smoothing with smoothing factor 0.1 and 3 classes, it becomes [math]\displaystyle{ y = [0.033, 0.933, 0.033] }[/math].

The formula for label smoothing is:

[math]\displaystyle{ y_{\text{smooth}} = (1 - a) \cdot y + \frac{a}{k} }[/math]

where:

- [math]\displaystyle{ a }[/math] is the smoothing factor,

- [math]\displaystyle{ y }[/math] is the original label,

- [math]\displaystyle{ k }[/math] is the number of classes.
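The formula translates directly into code. In this sketch the function name `smooth_labels` is an assumption, and a smoothing factor of 0.1 is used, which matches the three-class example above.

```python
import numpy as np

def smooth_labels(y_onehot, a=0.1):
    """Apply y_smooth = (1 - a) * y + a / k to a one-hot label vector."""
    y_onehot = np.asarray(y_onehot, dtype=float)
    k = y_onehot.shape[-1]             # number of classes
    return (1.0 - a) * y_onehot + a / k

y_smooth = smooth_labels([0, 1, 0], a=0.1)
print(np.round(y_smooth, 3))           # approximately [0.033, 0.933, 0.033]
```

The smoothed vector still sums to 1, so it remains a valid target distribution for a cross-entropy loss.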

Bagging/Ensemble

Bagging (short for bootstrap aggregating) is a technique for reducing generalization error by combining several models (Breiman, 1994).

Train several different models separately, then have all of the models vote on the output for test examples. Random forests are an example.

However, this kind of voting is often impractical in deep learning, because training and evaluating many large networks is expensive.
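The two ingredients of bagging, resampling the training set and voting, can be sketched as follows. The helper names are assumptions, and the predictions are hard-coded placeholders standing in for the outputs of separately trained models.

```python
import numpy as np

def bootstrap_indices(n, rng):
    """Sample n training indices with replacement (one bootstrap replicate)."""
    return rng.integers(0, n, size=n)

def majority_vote(predictions):
    """predictions: (n_models, n_examples) array of integer class labels."""
    predictions = np.asarray(predictions)
    n_classes = int(predictions.max()) + 1
    # Count votes per class for each example, then pick the most voted class.
    counts = np.apply_along_axis(np.bincount, 0, predictions, minlength=n_classes)
    return counts.argmax(axis=0)

rng = np.random.default_rng(0)
idx = bootstrap_indices(10, rng)       # indices used to train one ensemble member

# Placeholder predictions from three models on three test examples.
votes = majority_vote([[0, 1, 1],
                       [0, 1, 0],
                       [1, 1, 0]])
print(votes)
```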

Dropout

Overview

Dropout is a technique for preventing overfitting in deep neural networks, which contain a large number of parameters.

The key idea is to randomly drop units from the neural network during training.

- During training, dropout samples from a large number of different “thinned” networks.

- At test time, we approximate the effect of averaging the predictions of all these thinned networks.

Model

Consider a neural network with [math]\displaystyle{ L }[/math] hidden layers:

- Let [math]\displaystyle{ z^{(l)} }[/math] denote the vector inputs into layer [math]\displaystyle{ l }[/math].

- Let [math]\displaystyle{ y^{(l)} }[/math] denote the vector of outputs from layer [math]\displaystyle{ l }[/math].

- Let [math]\displaystyle{ W^{(l)} }[/math] and [math]\displaystyle{ b^{(l)} }[/math] represent the weights and biases at layer [math]\displaystyle{ l }[/math].

With dropout, the feed-forward operation becomes:

[math]\displaystyle{ r^{(l)} \sim \text{Bernoulli}(p) }[/math]

[math]\displaystyle{ \tilde{y}^{(l)} = r^{(l)} \odot y^{(l)} }[/math]

where [math]\displaystyle{ \odot }[/math] denotes element-wise multiplication.

The feed-forward equation for layer [math]\displaystyle{ l+1 }[/math] becomes:

[math]\displaystyle{ z^{(l+1)} = W^{(l+1)} \tilde{y}^{(l)} + b^{(l+1)} }[/math]

[math]\displaystyle{ y^{(l+1)} = f(z^{(l+1)}) }[/math]

where [math]\displaystyle{ f }[/math] is the activation function.

For any layer [math]\displaystyle{ l }[/math], [math]\displaystyle{ r^{(l)} }[/math] is a vector of independent Bernoulli random variables, each of which has a probability [math]\displaystyle{ p }[/math] of being 1. The vector [math]\displaystyle{ \tilde{y}^{(l)} }[/math] is the thinned output of layer [math]\displaystyle{ l }[/math] after some units are dropped, and it serves as the input to layer [math]\displaystyle{ l+1 }[/math]. The rest of the model remains the same as a regular feed-forward neural network.

Training

A dropout neural network can be trained using stochastic gradient descent. The only difference is that, for each training case, we backpropagate only through the sampled thinned network. The gradients for each parameter are averaged over the training cases in each mini-batch.

Test Time

Use a single neural net without dropout. If a unit is retained with probability [math]\displaystyle{ p }[/math] during training, the outgoing weights of that unit are multiplied by [math]\displaystyle{ p }[/math] at test time: [math]\displaystyle{ p \cdot w }[/math]
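The training-time mask and the test-time weight scaling can be sketched for a single layer as follows. The function name and the small weight matrix are hypothetical; here the retention probability is the probability of keeping a unit, as in the model above.

```python
import numpy as np

def dropout_forward(y, W_next, b_next, p, rng, train=True):
    """Pre-activation of the next layer; p is the probability of KEEPING a unit."""
    if train:
        r = rng.binomial(1, p, size=y.shape)   # r ~ Bernoulli(p), one draw per unit
        return W_next @ (r * y) + b_next       # thinned layer output feeds the next layer
    return (p * W_next) @ y + b_next           # test time: outgoing weights scaled by p

rng = np.random.default_rng(0)
y = np.array([1.0, 2.0, 3.0, 4.0])             # outputs of the current layer (hypothetical)
W = np.array([[1.0, -1.0, 0.5, 0.0],
              [0.0, 2.0, -1.0, 1.0]])
b = np.array([0.1, -0.2])

z_train = dropout_forward(y, W, b, p=0.8, rng=rng)              # random, depends on the mask
z_test = dropout_forward(y, W, b, p=0.8, rng=rng, train=False)  # deterministic
print(z_train, z_test)
```

Averaging the training-time pre-activation over many masks approaches the test-time value, which is the sense in which weight scaling approximates averaging the thinned networks (exactly for this linear pre-activation, approximately after a nonlinearity).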

Additional Regularization

L1 norm

L1 norm regularization, also known as Lasso, is a technique that adds a penalty proportional to the sum of the absolute values of the weights to the loss function. This encourages sparsity in the learned weights: it drives some of the weights to become exactly zero, effectively selecting important features and reducing model complexity.

Mixup

Mixup is a data augmentation technique that creates new training examples by taking convex combinations of pairs of input data and their labels. By blending images and labels together, the model learns smoother decision boundaries and becomes more robust to adversarial examples and noise.
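A minimal sketch of the convex combination is shown below. Drawing the mixing coefficient from a Beta distribution with equal shape parameters follows the original mixup paper, but that choice and the default value of `alpha` are not stated in these notes and should be read as assumptions.

```python
import numpy as np

def mixup(x1, y1, x2, y2, alpha=0.2, rng=None):
    """Blend two (input, one-hot label) pairs with a random coefficient lam in [0, 1]."""
    rng = np.random.default_rng() if rng is None else rng
    lam = rng.beta(alpha, alpha)               # mixing coefficient (assumed Beta(alpha, alpha))
    x_mix = lam * x1 + (1.0 - lam) * x2
    y_mix = lam * y1 + (1.0 - lam) * y2
    return x_mix, y_mix, lam

rng = np.random.default_rng(0)
x_mix, y_mix, lam = mixup(np.zeros(4), np.array([1.0, 0.0]),
                          np.ones(4), np.array([0.0, 1.0]), rng=rng)
print(lam, y_mix)
```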

Cutout

Cutout is a form of data augmentation where random square regions are masked out (set to zero) in input images during training. This forces the model to focus on a broader range of features across the image rather than relying on any single part, leading to better generalization and robustness.
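The masking step can be sketched as follows. The function name, the way the patch center is sampled, and the clipping of the patch at the image border are assumptions for this example.

```python
import numpy as np

def cutout(img, size, rng):
    """Return a copy of img with one random square patch (side at most `size`) set to zero."""
    h, w = img.shape[:2]
    cy, cx = int(rng.integers(0, h)), int(rng.integers(0, w))   # patch center
    y0, y1 = max(cy - size // 2, 0), min(cy + size // 2, h)     # clip at the borders
    x0, x1 = max(cx - size // 2, 0), min(cx + size // 2, w)
    out = img.copy()
    out[y0:y1, x0:x1] = 0
    return out

rng = np.random.default_rng(0)
img = np.ones((8, 8))
masked = cutout(img, size=4, rng=rng)
print(int((masked == 0).sum()), "pixels masked")
```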

Gradient Clipping

Gradient clipping is a technique used to prevent the gradients from becoming too large during training, which can cause the model to diverge. This is done by capping the gradients at a predefined threshold, ensuring that updates remain stable, especially in models like recurrent neural networks (RNNs) where exploding gradients are a common issue.
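One common variant rescales the whole gradient vector when its norm exceeds the threshold, which keeps the update direction unchanged. The sketch below shows this norm-based form; the function name is an assumption, and clipping each component separately is another option.

```python
import numpy as np

def clip_by_norm(grad, max_norm):
    """Rescale grad so that its L2 norm is at most max_norm."""
    norm = np.linalg.norm(grad)
    if norm > max_norm:
        return grad * (max_norm / norm)
    return grad

big = clip_by_norm(np.array([3.0, 4.0]), max_norm=1.0)     # norm 5, rescaled to norm 1
small = clip_by_norm(np.array([0.3, 0.4]), max_norm=1.0)   # norm 0.5, left unchanged
print(big, small)
```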

Generalization Paradox

- Models with many parameters tend to overfit.

- However, deep neural networks, despite having many parameters, often work well on unseen data (see the double descent curve). The reason is not yet fully understood.

Batch Normalization

Overview

Batch normalization is a technique used to improve the training process of deep neural networks by normalizing the inputs of each layer. Despite the initial intuition for the method being somewhat incorrect, it has proven to be highly effective in practice. Batch normalization speeds up convergence, allows for larger learning rates, and makes the model less sensitive to initialization, resulting in more stable and efficient training.

Batch normalization was motivated by internal covariate shift (Ioffe & Szegedy, 2015).

Internal Covariate Shift

Batch normalization was originally proposed as a solution to the internal covariate shift problem, where the distribution of inputs to each layer changes during training. This shift complicates training because the model must constantly adapt to new input distributions.

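The composition of two layers can be described as:

[math]\displaystyle{ l = F_2(F_1(u, \theta_1), \theta_2) }[/math]

where [math]\displaystyle{ F_1 }[/math] and [math]\displaystyle{ F_2 }[/math] are layer transformations with parameters [math]\displaystyle{ \theta_1 }[/math] and [math]\displaystyle{ \theta_2 }[/math], and [math]\displaystyle{ u }[/math] is the input. Whenever [math]\displaystyle{ \theta_1 }[/math] is updated, the distribution of the inputs to [math]\displaystyle{ F_2 }[/math] changes.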

For a mini-batch of activations [math]\displaystyle{ X = \{x_1, x_2, \dots, x_m\} }[/math] from a specific layer, batch normalization proceeds as follows:

1. Compute the mean: [math]\displaystyle{ \mu_B = \frac{1}{m} \sum_{i=1}^{m} x_i }[/math]

2. Compute the variance: [math]\displaystyle{ \sigma_B^2 = \frac{1}{m} \sum_{i=1}^{m} (x_i - \mu_B)^2 }[/math]

3. Normalize the activations: [math]\displaystyle{ \hat{x}_i = \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}} }[/math]

4. Scale and shift the normalized activations using learned parameters [math]\displaystyle{ \gamma }[/math] (scale) and [math]\displaystyle{ \beta }[/math] (shift): [math]\displaystyle{ y_i = \gamma \hat{x}_i + \beta }[/math]

where:

- [math]\displaystyle{ x_i }[/math] represents the activations in the mini-batch,

- [math]\displaystyle{ \mu_B }[/math] is the mean of the mini-batch,

- [math]\displaystyle{ \sigma_B^2 }[/math] is the variance of the mini-batch,

- [math]\displaystyle{ \epsilon }[/math] is a small constant added for numerical stability,

- [math]\displaystyle{ \hat{x}_i }[/math] is the normalized activation,

- [math]\displaystyle{ \gamma }[/math] and [math]\displaystyle{ \beta }[/math] are learned parameters for scaling and shifting.
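The four steps above can be sketched for a mini-batch stored as a 2-D array with one row per example. This covers only the training-time forward pass; the running statistics used at inference and the backward pass are omitted, and the function name and default value of the stability constant are assumptions.

```python
import numpy as np

def batch_norm(x, gamma, beta, eps=1e-5):
    """Batch-normalize x of shape (m, features) using mini-batch statistics."""
    mu = x.mean(axis=0)                       # step 1: mini-batch mean
    var = x.var(axis=0)                       # step 2: mini-batch variance (divides by m)
    x_hat = (x - mu) / np.sqrt(var + eps)     # step 3: normalize
    return gamma * x_hat + beta               # step 4: scale and shift

rng = np.random.default_rng(0)
x = rng.normal(loc=3.0, scale=2.0, size=(64, 5))   # hypothetical activations
out = batch_norm(x, gamma=2.0, beta=0.5)
print(out.mean(axis=0), out.std(axis=0))
```

After normalization each feature has mean close to the shift parameter and standard deviation close to the scale parameter, regardless of the statistics of the incoming activations.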

Ioffe and Szegedy report that batch normalization enables higher learning rates and, in some cases, removes the need for dropout while improving validation accuracy.

Batch Normalization

As mentioned earlier, the original intuition behind batch normalization was found to be incorrect after further research. A paper by Santurkar, S., Tsipras, D., Ilyas, A., & Madry, A. (NeurIPS 2018) contradicted the original 2015 paper on BatchNorm by highlighting the following points:

- Batch normalization does not fix covariate shift.

- If we fix covariate shift, it doesn't help.

- If we intentionally increase ICS, it doesn't harm.

- Batch Norm is not the only possible normalization. There are alternatives.

Instead, they argue that Batch normalization works better due to other factors, particularly related to its effect on the optimization process:

1. Reparameterization of the loss function:

- Improved Lipschitzness: Batch normalization improves the Lipschitz continuity of the loss function, meaning that the loss changes at a smaller rate and the magnitudes of the gradients are smaller.

- Better β-smoothness: The gradients of the loss are themselves more Lipschitz, so the loss exhibits significantly better smoothness, which aids optimization by preventing large, erratic changes in the gradient.

2. Variation of the loss function: BatchNorm reduces the variability of the value of the loss. Consider the variation of the loss function: [math]\displaystyle{ \mathcal{L}(x + \eta \nabla \mathcal{L}(x)) }[/math] where [math]\displaystyle{ \eta \in [0.05, 0.4] }[/math]. A smaller variability of the loss indicates that the steps taken during training are less likely to cause the loss to increase uncontrollably.

3. Gradient predictiveness: BatchNorm enhances the predictiveness of the gradients, meaning the changes in the loss gradient are more stable and predictable. This can be expressed as: [math]\displaystyle{ || \nabla \mathcal{L}(x) - \nabla \mathcal{L}(x + \eta \nabla \mathcal{L}(x)) || }[/math], where [math]\displaystyle{ \eta \in [0.05, 0.4] }[/math]. A good gradient predictiveness implies that the gradient evaluated at a given point remains relevant over longer distances, which allows for larger step sizes during training.

Alternatives to Batch Norm

Weight Normalization: Weight normalization is a technique where the weights, instead of the activations, are normalized. This method reparameterizes the weight vectors to accelerate the training of deep neural networks.

Tim Salimans and Diederik P. Kingma, "Weight Normalization: A Simple Reparameterization to Accelerate Training of Deep Neural Networks," 2016.

ELU (Exponential Linear Unit) and SELU (Scaled Exponential Linear Unit): ELU and SELU are two proposed activation functions that have a decaying slope instead of a sharp saturation. They can be used as alternatives to BatchNorm by providing smoother, non-linear activation without requiring explicit normalization.

Djork-Arné Clevert, Thomas Unterthiner, and Sepp Hochreiter, "Fast and Accurate Deep Network Learning by Exponential Linear Units (ELUs)," In International Conference on Learning Representations (ICLR), 2016.

Günter Klambauer, Thomas Unterthiner, Andreas Mayr, and Sepp Hochreiter, "Self-Normalizing Neural Networks," In Advances in Neural Information Processing Systems (NeurIPS), 2017.

Convolutional Neural Network (CNN)

Introduction

Convolutional networks are simply neural networks that use convolution instead of general matrix multiplication in at least one of their layers. CNN is mainly used for image processing.

Convolution

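In machine learning, each unit computes a dot product between an input [math]\displaystyle{ x }[/math] and a weight vector [math]\displaystyle{ w }[/math], followed by a nonlinearity. In a convolutional layer, the same weights are applied to different patches of the input: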

[math]\displaystyle{ h = \sigma(\langle x, w\rangle+b) }[/math]

- Same x, different w -- multi-layer perceptron (MLP)

- Different x, same w -- CNN (weight sharing)

As defined in class, the following operation is called convolution:

[math]\displaystyle{ s(t) = \int x(a)w(t-a)da }[/math]

The convolution operation is typically denoted with an asterisk:

[math]\displaystyle{ s(t) = (x \ast w)(t) }[/math]

Discrete Convolution

If we now assume that x and w are defined only on integer t, we can define the discrete convolution:

[math]\displaystyle{ s[t] = (x \ast w)[t] = \sum_{a=-\infty}^{\infty} x[a] \, w[t-a] }[/math]

[math]\displaystyle{ w[t-a] }[/math] represents the sequence [math]\displaystyle{ w[t] }[/math] shifted by [math]\displaystyle{ a }[/math] units.
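For finite sequences, which are treated as zero outside the stored values, the infinite sum reduces to a double loop. The sketch below writes the definition out explicitly; the function name and the example sequences are made up for illustration.

```python
import numpy as np

def conv1d(x, w):
    """Full discrete convolution s[t] = sum_a x[a] * w[t - a] for finite sequences."""
    s = np.zeros(len(x) + len(w) - 1)
    for t in range(len(s)):
        for a in range(len(x)):
            if 0 <= t - a < len(w):        # w is zero outside its stored values
                s[t] += x[a] * w[t - a]
    return s

x = np.array([1.0, 2.0, 3.0])
w = np.array([0.0, 1.0, 0.5])
s = conv1d(x, w)
print(s)                                   # matches np.convolve(x, w)
```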

In practice

We often use convolutions over more than one axis at a time.

[math]\displaystyle{ s[i,j] = (I * K)[i,j] = \sum_m \sum_n I[m,n] K[i-m, j-n] }[/math]

- Input: usually a multidimensional array of data.

- Kernel: usually a multidimensional array of parameters that should be learned.

We assume that these functions are zero everywhere but the finite set of points for which we store the values.

We can implement the infinite summation as a summation over a finite number of array elements.

Convolution and Cross-Correlation

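Convolution is commutative, so the roles of the input and kernel indices can be swapped (substitute [math]\displaystyle{ m \to i - m }[/math] and [math]\displaystyle{ n \to j - n }[/math]):

[math]\displaystyle{ s[i,j] = (I * K)[i,j] = \sum_m \sum_n I[i-m, j-n] K[m, n] }[/math]

Cross-correlation is the same operation without flipping the kernel:

[math]\displaystyle{ s[i,j] = (I \star K)[i,j] = \sum_m \sum_n I[i+m, j+n] K[m, n] }[/math]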

Many machine learning libraries implement cross-correlation but call it convolution. In the context of backpropagation in neural networks, cross-correlation simplifies the computation of gradients with respect to the input and kernel.
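The relationship between the two operations can be made concrete: a convolution is a cross-correlation with the kernel flipped along both axes. The sketch below covers only the region where the kernel fits entirely inside the input (no padding), so the convolution output matches the formula up to the index offset of the output array. The function names and the small arrays are hypothetical.

```python
import numpy as np

def cross_correlate2d(I, K):
    """Slide K over I without flipping it; output only where K fits entirely."""
    kh, kw = K.shape
    oh, ow = I.shape[0] - kh + 1, I.shape[1] - kw + 1
    out = np.zeros((oh, ow))
    for i in range(oh):
        for j in range(ow):
            out[i, j] = np.sum(I[i:i + kh, j:j + kw] * K)
    return out

def convolve2d(I, K):
    """Convolution as cross-correlation with the kernel flipped along both axes."""
    return cross_correlate2d(I, K[::-1, ::-1])

I = np.arange(16.0).reshape(4, 4)
K = np.array([[1.0, 0.0],
              [0.0, -1.0]])                # asymmetric kernel, so the two results differ
cc = cross_correlate2d(I, K)
cv = convolve2d(I, K)
print(cc[0, 0], cv[0, 0])
```

For a kernel that is symmetric under this flip, the two functions return the same result.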

Visualization of Cross-Correlation and Convolution with Matlab (https://www.youtube.com/v/Ma0YONjMZLI)

Image to Convolved Feature

- Kernel/filter size: width [math]\displaystyle{ \times }[/math] height, e.g. 3 [math]\displaystyle{ \times }[/math] 3 in the example below

- Stride: how many pixels to move the filter each time

- Padding: add zeros (or any other value) around the boundary of the input

Example

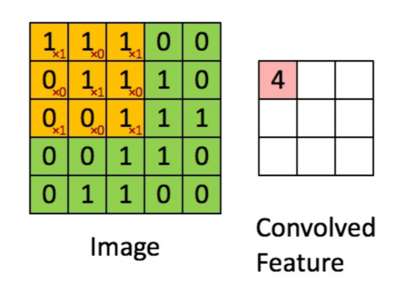

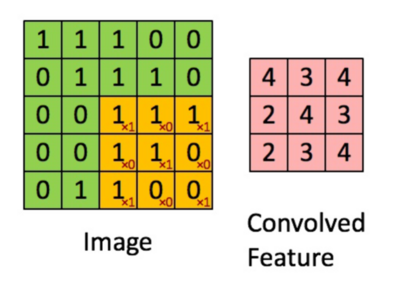

This example illustrates a 2D convolution operation between an input image and a filter to produce a convolved feature map.

Image (Input): The grid on the left represents a 5[math]\displaystyle{ \times }[/math]5 matrix of pixel values. The orange-highlighted 3[math]\displaystyle{ \times }[/math]3 region is part of the image currently being convolved with the filter.

Convolution Operation: The filter values are applied to the selected region in an element-wise multiplication followed by a summation. The operation is as follows:

[math]\displaystyle{ (1 \times 1) + (1 \times 0) + (1 \times 1) + (0 \times 0) + (1 \times 1) + (1 \times 0) + (0 \times 1) + (0 \times 0) + (1 \times 1) = 4 }[/math]

Convolved Feature Map: The resulting value, [math]\displaystyle{ 4 }[/math], is placed in the corresponding position (top-left) of the convolved feature map on the right.

This process is repeated as the filter slides across the entire image. The final feature map is shown below.
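The sliding-window computation, including stride and zero padding, can be sketched as follows. The 3 × 3 filter and the top-left image patch are read off the arithmetic above. The remaining entries of the 5 × 5 image are an assumption, since the figure itself is not reproduced in the text, and the function name `conv2d` is hypothetical.

```python
import numpy as np

def conv2d(image, kernel, stride=1, padding=0):
    """Slide the kernel over the zero-padded image; multiply element-wise and sum."""
    image = np.pad(image, padding)                     # zeros around the boundary
    kh, kw = kernel.shape
    oh = (image.shape[0] - kh) // stride + 1
    ow = (image.shape[1] - kw) // stride + 1
    out = np.zeros((oh, ow))
    for i in range(oh):
        for j in range(ow):
            patch = image[i * stride:i * stride + kh, j * stride:j * stride + kw]
            out[i, j] = np.sum(patch * kernel)
    return out

image = np.array([[1, 1, 1, 0, 0],      # only the top-left 3x3 patch is taken from the text;
                  [0, 1, 1, 1, 0],      # the other entries are assumed for illustration
                  [0, 0, 1, 1, 1],
                  [0, 0, 1, 1, 0],
                  [0, 1, 1, 0, 0]])
kernel = np.array([[1, 0, 1],
                   [0, 1, 0],
                   [1, 0, 1]])

feature_map = conv2d(image, kernel)
print(feature_map)                      # top-left entry is 4, as computed above
```

With `stride=2` the same call produces a 2 × 2 map, and with `padding=1` the output keeps the 5 × 5 size of the input.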

Sparse interactions

Parameter Sharing

Equivariance

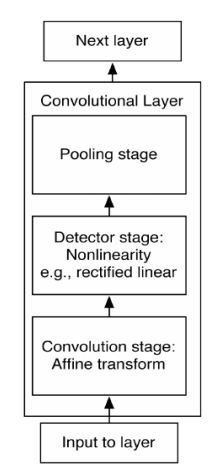

Convolutional Networks

Pooling

Popular Pooling functions

Pooling with Downsampling

Pooling and Translations

Input of Varying Size

Convolution and Pooling as an Infinitely Strong Prior

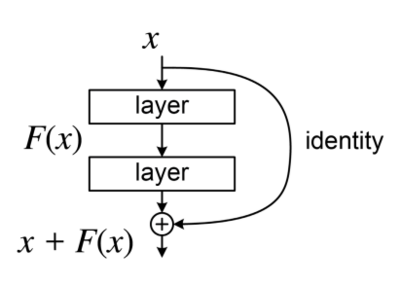

Residual Networks (ResNet)

Overview

Deeper models are harder to train due to vanishing/exploding gradients and can be worse than shallower networks if not properly trained. There are advanced networks to deal with the degradation problem.

ResNet, short for Residual Networks, was introduced by Kaiming He et al. from Microsoft Research in 2015. It brought a significant breakthrough in deep learning by enabling the training of much deeper networks, addressing the vanishing gradient problem.

- ResNet introduces the concept of skip connections (or residual connections) that allow the gradient to be directly backpropagated to earlier layers

- Skip connections help in overcoming the degradation problem, where the accuracy saturates and then degrades rapidly as the network depth increases
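A skip connection adds the block's input to the output of its transformation. The sketch below uses two fully connected layers with ReLU as a stand-in for the convolutional layers of an actual ResNet block; the function name, shapes, and weights are hypothetical.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def residual_block(x, W1, W2):
    """y = relu(x + F(x)), with F(x) = W2 @ relu(W1 @ x) as the residual branch."""
    return relu(x + W2 @ relu(W1 @ x))

x = np.array([1.0, 2.0, 3.0])
zero = np.zeros((3, 3))
print(residual_block(x, zero, zero))   # with a zero residual branch the block is the identity
```

Because the input is passed through unchanged when the residual branch outputs zero, adding more such blocks does not, in principle, force the network away from the shallower solution.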

Variants

Several variants of ResNet have been developed, including ResNet-50, ResNet-101, and ResNet-152, differing in the number of layers.

Application

ResNet has been widely adopted for various computer vision tasks, including image classification, object detection, and facial recognition.

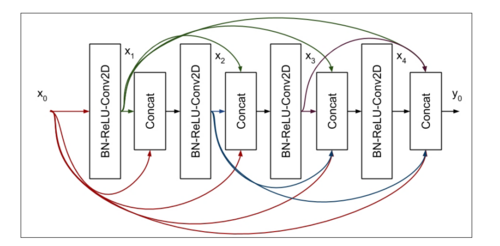

DenseNet

Overview

- DenseNet, short for Densely Connected Convolutional Networks, was introduced by Gao Huang et al. in 2017.

- It is known for its efficient connectivity between layers, which enhances feature propagation and reduces the number of parameters

Key Feature

The key feature is Dense Connectivity.

- In DenseNet, each layer receives feature maps from all preceding layers and passes its own feature maps to all subsequent layers

- This dense connectivity improves the flow of information and gradients throughout the network, mitigating the vanishing gradient problem
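Dense connectivity can be sketched by concatenating all earlier feature maps before each layer. Fully connected layers on vectors are used here in place of convolutions on feature maps; the function name, the growth of two features per layer, and the random weights are assumptions for the example.

```python
import numpy as np

def dense_block(x, weights):
    """Each layer receives the concatenation of the input and all earlier layer outputs."""
    features = [x]
    for W in weights:
        inp = np.concatenate(features)                  # everything produced so far
        features.append(np.maximum(W @ inp, 0.0))       # new features from this layer
    return np.concatenate(features)

rng = np.random.default_rng(0)
x = rng.normal(size=4)
# Three layers, each adding 2 new features; the input width grows as 4, 6, 8.
weights = [rng.normal(size=(2, 4)), rng.normal(size=(2, 6)), rng.normal(size=(2, 8))]
out = dense_block(x, weights)
print(out.shape)
```

The first entries of the output are the original input itself, which is what gives later layers and the gradient a direct path to earlier features.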