A Neural Representation of Sketch Drawings

Introduction

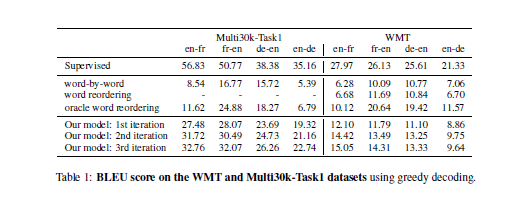

- To provide a strong lower bound that any semi-supervised machine translation system should at least match

Related Work

The authors offer two motivations for their work:

- To translate between languages for which large parallel corpora do not exist

Methodology

Dataset

Sketch-RNN

Unconditional Generation

Training

Experiments

Conditional Reconstruction

Latent Space Interpolation

Sketch Drawing Analogies

Predicting Different Endings of Incomplete Sketches

Applications and Future Work

Conclusion

References

- Bahdanau, Dzmitry, Kyunghyun Cho, and Yoshua Bengio. "Neural machine translation by jointly learning to align and translate." arXiv preprint arXiv:1409.0473 (2014).

fonts and examples

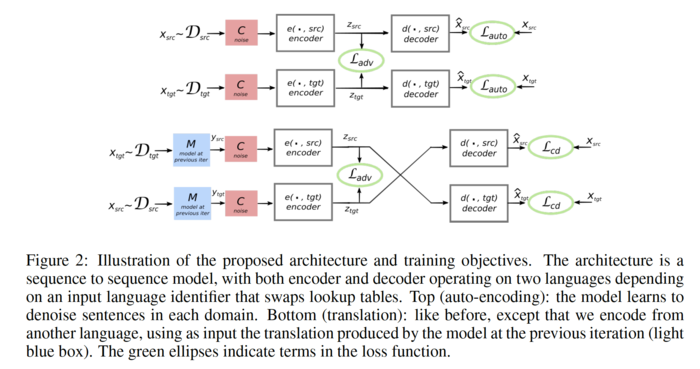

The unsupervised translation scheme has the following outline:

- The word-vector embeddings of the source and target languages are aligned in an unsupervised manner.

- Sentences from the source and target language are mapped to a common latent vector space by an encoder, and then mapped to probability distributions over

The objective function is the sum of:

- The de-noising auto-encoder loss,

I shall describe these in the following sections.
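
As a rough illustration of the de-noising auto-encoder term, the sketch below shows one way an input sentence might be corrupted before the model is asked to reconstruct the clean version. The noise model (word dropout followed by a bounded local shuffle), the function name `corrupt`, and the default values of `drop_prob` and `shuffle_window` are assumptions made for this example; the summary above does not specify them.

```python
import random


def corrupt(tokens, drop_prob=0.1, shuffle_window=3, rng=None):
    """Return a noisy copy of `tokens` (hypothetical helper, not from the source).

    Assumed noise model: drop each token with probability `drop_prob`,
    then shuffle the survivors so that each token stays close to its
    original position.
    """
    rng = rng or random.Random()

    # Word dropout: keep a token when the draw is at least drop_prob.
    kept = [t for t in tokens if rng.random() >= drop_prob]
    if not kept and tokens:
        # Avoid returning an empty sentence for a non-empty input.
        kept = [rng.choice(tokens)]

    # Local shuffle: perturb each index by noise in [0, shuffle_window)
    # and sort by the perturbed index, which bounds how far a token moves.
    keys = [i + rng.uniform(0, shuffle_window) for i in range(len(kept))]
    order = sorted(range(len(kept)), key=keys.__getitem__)
    return [kept[i] for i in order]


if __name__ == "__main__":
    sentence = "the cat sat on the mat".split()
    print(corrupt(sentence, rng=random.Random(0)))
```

A de-noising loss would then compare the decoder's reconstruction of `corrupt(x)` against the original sentence `x`, which discourages the auto-encoder from learning a trivial copy.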