Speech2Face: Learning the Face Behind a Voice

Presented by

Ian Cheung, Russell Parco, Scholar Sun, Jacky Yao, Daniel Zhang

Introduction

Previous Work

Motivation

Often, when we listen to a person speaking without seeing their face, on the phone or on the radio, we build a mental image of what we think the person looks like. There is a strong connection between speech and appearance, part of which is a direct result of the mechanics of speech production: age, gender (which affects the pitch of our voice), the shape of the mouth, facial bone structure, and thin or full lips can all affect the sound we generate. In addition, other voice-appearance correlations stem from the way in which we talk: language, accent, speed, and pronunciation are often shared among nationalities and cultures, which can, in turn, translate to common physical features. The goal of this work is to study to what extent we can infer how a person looks from the way they talk.

Specifically, from a short input audio segment of a person speaking, the method directly reconstructs an image of the person's face in a canonical form (i.e., frontal-facing, neutral expression). Obviously, there is no one-to-one matching between faces and voices. Thus, the goal is not to predict a recognizable image of the exact face, but rather to capture dominant facial traits of the person that are correlated with the input speech.

Model Architecture

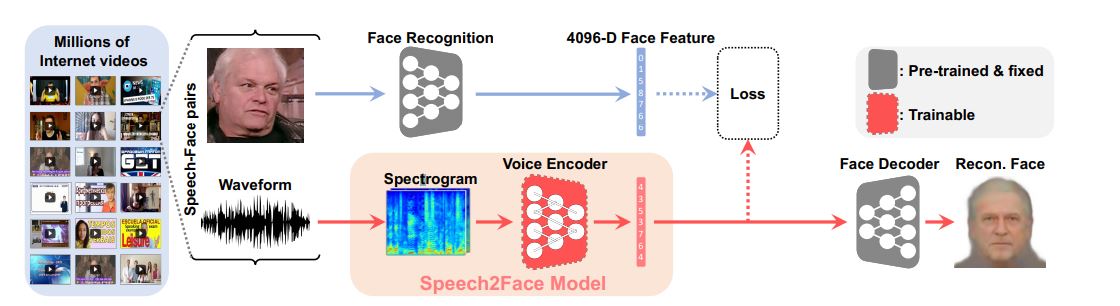

Speech2Face model and training pipeline

The Speech2Face model consists of two parts: a voice encoder, which takes a spectrogram of speech as input and outputs low-dimensional face features, and a face decoder, which takes face features as input and outputs a normalized image of a face (neutral expression, looking forward). The image above gives a visual representation of the pipeline of the entire model, from video input to a reconstructed face. The face decoder itself was taken from previous work by Cole et al. (cite) and will not be explored in great detail here. In essence, the FaceNet model (cite) is combined with a single multilayer perceptron layer, whose output is passed through a convolutional neural network to determine the texture of the image and through a multilayer perceptron to determine the landmark locations. The two results are combined to form an image.

Voice Encoder Architecture

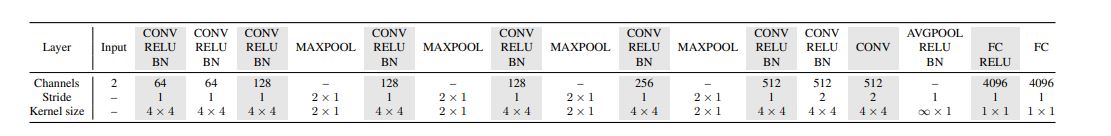

The voice encoder itself is a convolutional neural network, with the exact architecture given above. The model alternates between blocks of convolution, ReLU, and batch normalization layers and layers of max-pooling. In each max-pooling layer, pooling is done only along the temporal dimension of the data. This ensures that frequency information, an important factor in determining vocal characteristics such as tone, is preserved. In the final pooling layer, average pooling is applied along the temporal dimension. This lets the model aggregate information over time and accept input speech of varying length. Two fully connected layers at the end return a 4096-dimensional facial feature output.
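The effect of pooling only along time can be illustrated with a small NumPy sketch. This is not the authors' implementation: the array layout `(frequency, time, channels)`, the window size `k=2`, and the helper names `max_pool_time` and `avg_pool_time` are assumptions made for illustration, and the convolution layers are omitted entirely.

```python
import numpy as np

def max_pool_time(x, k=2):
    """Max-pool a (freq, time, channels) array along the time axis only.

    The frequency axis is left untouched, so its resolution is preserved.
    The layout and window size k are illustrative assumptions.
    """
    f, t, c = x.shape
    t_trim = (t // k) * k  # drop trailing frames that do not fill a window
    windows = x[:, :t_trim, :].reshape(f, t_trim // k, k, c)
    return windows.max(axis=2)

def avg_pool_time(x):
    """Average over the entire time axis, so the output size no longer depends on clip length."""
    return x.mean(axis=1)

rng = np.random.default_rng(0)
short_clip = rng.standard_normal((8, 40, 4))   # 40 time frames (toy sizes)
long_clip = rng.standard_normal((8, 100, 4))   # 100 time frames

pooled_short = avg_pool_time(max_pool_time(short_clip))
pooled_long = avg_pool_time(max_pool_time(long_clip))
print(pooled_short.shape, pooled_long.shape)
```

Both clips end up with the same feature shape after the final average pooling, which is why fully connected layers of fixed size can follow it regardless of how long the input speech is.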

Training

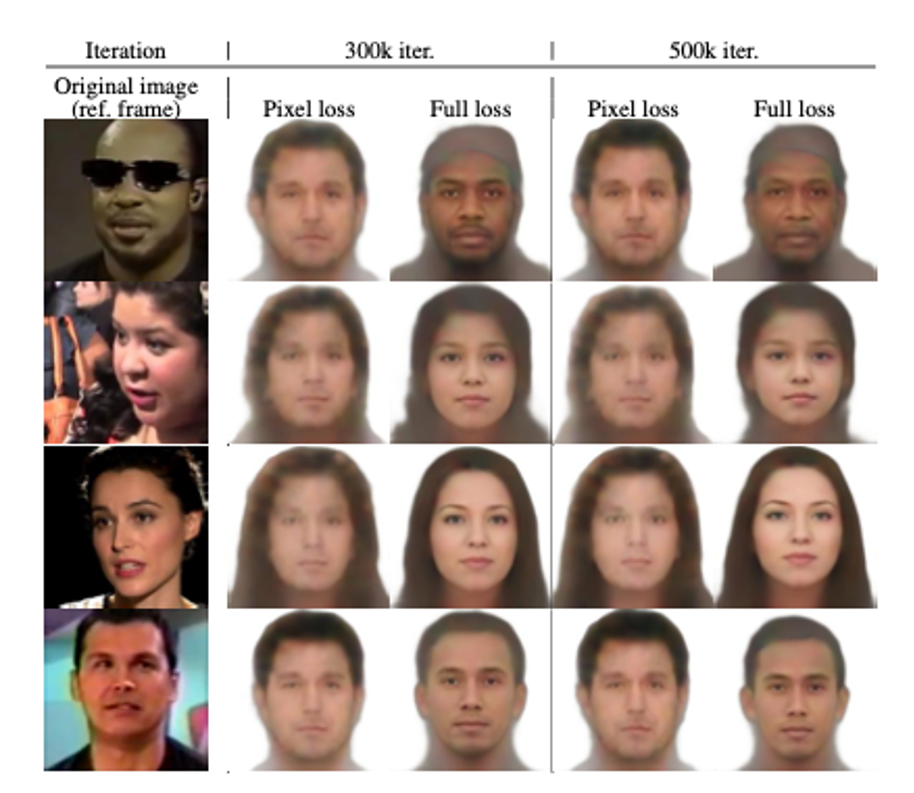

In order to train this model, a proper loss function must be defined. Let [math]\displaystyle{ v_s }[/math] be the 4096-dimensional facial feature vector predicted by the voice encoder, and [math]\displaystyle{ v_f }[/math] be the 4096-dimensional facial feature vector extracted by a pretrained face recognition network from a single frame of the input video. The L1 norm of the difference between [math]\displaystyle{ v_s }[/math] and [math]\displaystyle{ v_f }[/math], given by [math]\displaystyle{ ||v_f - v_s||_1 }[/math], may seem like a suitable loss function, but in practice it leads to unstable results and long training times. The accompanying image shows the difference in predicted facial features between training with [math]\displaystyle{ ||v_f - v_s||_1 }[/math] and training with the following loss. Based on the work of Castrejon et al. (cite), the loss additionally penalizes differences in the activations of the last layer of the face encoder, [math]\displaystyle{ f_{VGG} }[/math], and the first layer of the face decoder, [math]\displaystyle{ f_{dec} }[/math]. The final loss function is given by:

$$L_{total} = ||f_{dec}(v_f) - f_{dec}(v_s)||_1 + \lambda_1\left|\left|\frac{v_f}{||v_f||} - \frac{v_s}{||v_s||}\right|\right|^2_2 + \lambda_2 L_{distill}(f_{VGG}(v_f), f_{VGG}(v_s))$$

In addition to the decoder term, this loss penalizes both the normalized Euclidean distance between the two facial feature vectors and a knowledge distillation loss, which is given by:

$$L_{distill}(a,b) = -\sum_i p_{(i)}(a) \log p_{(i)}(b)$$

$$p_{(i)}(a) = \frac{\exp(a_i/T)}{\sum_j \exp(a_j/T)}$$

Knowledge distillation is used as an alternative to cross-entropy. By recommendation of Cole et al. (cite), [math]\displaystyle{ T = 2 }[/math] was used to ensure a smooth activation. [math]\displaystyle{ \lambda_1 = 0.025 }[/math] and [math]\displaystyle{ \lambda_2 = 200 }[/math] were chosen so that the magnitudes of the gradients of each term with respect to [math]\displaystyle{ v_s }[/math] are of similar scale at the [math]\displaystyle{ 1000^{th} }[/math] iteration.
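As a rough illustration of how the three terms of the loss fit together, the following NumPy sketch evaluates it on toy vectors. Several things here are assumptions rather than the authors' code: the layers [math]\displaystyle{ f_{dec} }[/math] and [math]\displaystyle{ f_{VGG} }[/math] are replaced by random linear maps (`W_dec`, `W_vgg`), the feature dimension is shrunk from 4096 to 16, the first term is taken as an L1 norm, and all function names are hypothetical.

```python
import numpy as np

def softmax_T(a, T=2.0):
    """Temperature-scaled softmax p(a); the max is subtracted for numerical stability."""
    z = a / T
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def distill_loss(a, b, T=2.0):
    """Distillation term: cross-entropy between the softened distributions p(a) and p(b)."""
    return -np.sum(softmax_T(a, T) * np.log(softmax_T(b, T)))

def total_loss(v_f, v_s, f_dec, f_vgg, lam1=0.025, lam2=200.0, T=2.0):
    # Term 1: difference after the first decoder layer (L1 norm assumed here).
    dec_term = np.abs(f_dec(v_f) - f_dec(v_s)).sum()
    # Term 2: squared Euclidean distance between the unit-normalized features.
    unit_f = v_f / np.linalg.norm(v_f)
    unit_s = v_s / np.linalg.norm(v_s)
    norm_term = np.sum((unit_f - unit_s) ** 2)
    # Term 3: distillation loss on the last face-encoder layer activations.
    dist_term = distill_loss(f_vgg(v_f), f_vgg(v_s), T)
    return dec_term + lam1 * norm_term + lam2 * dist_term

rng = np.random.default_rng(0)
W_dec = rng.standard_normal((8, 16))  # hypothetical stand-in for f_dec
W_vgg = rng.standard_normal((5, 16))  # hypothetical stand-in for f_VGG
f_dec = lambda v: W_dec @ v
f_vgg = lambda v: W_vgg @ v

v_f = rng.standard_normal(16)               # toy "face" feature
v_s = v_f + 0.5 * rng.standard_normal(16)   # toy "voice" prediction

loss_mismatch = total_loss(v_f, v_s, f_dec, f_vgg)
loss_match = total_loss(v_f, v_f, f_dec, f_vgg)
print(loss_match <= loss_mismatch)
```

Note that the loss is not zero even when the two vectors are identical: the distillation term then reduces to the entropy of [math]\displaystyle{ p(a) }[/math], which is a constant with respect to [math]\displaystyle{ v_s }[/math] and is its minimum value.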

From each video, a 224x224 pixel image of the face was passed through the face recognition network to compute a facial feature vector. Paired with a spectrogram of the audio, these formed a training set of 1.7 million entries and a test set of 0.15 million entries.

Results

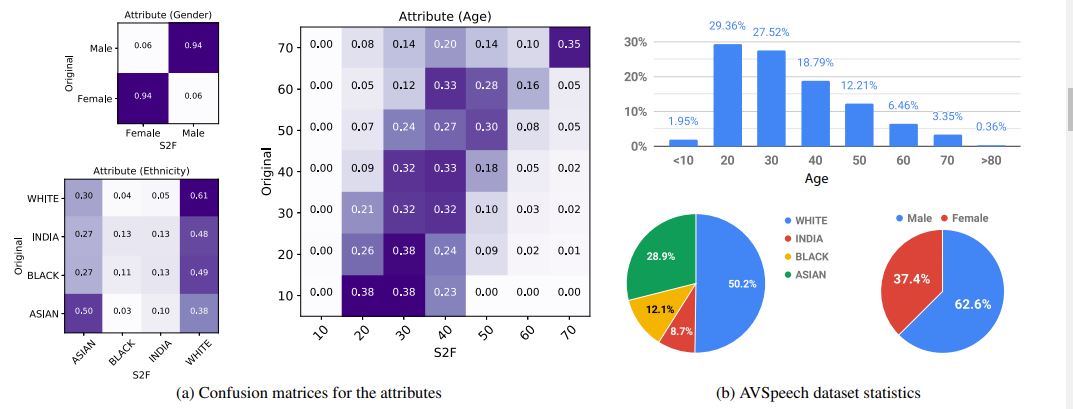

To measure the similarity between the generated images and the ground truth, a commercial service known as Face++, which classifies faces by distinct attributes (such as gender and ethnicity), was used.
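One way to summarize such an attribute comparison is a confusion matrix between the label assigned to the original image and the label assigned to the reconstruction. The sketch below assumes the attribute labels have already been obtained; it makes no calls to Face++, the labels are made-up toy values rather than results from the paper, and `confusion_matrix` is a hypothetical helper.

```python
import numpy as np

def confusion_matrix(true_labels, pred_labels, classes):
    """Count label pairs: rows are labels of the original image, columns those of the reconstruction."""
    index = {c: i for i, c in enumerate(classes)}
    m = np.zeros((len(classes), len(classes)), dtype=int)
    for t, p in zip(true_labels, pred_labels):
        m[index[t], index[p]] += 1
    return m

# Toy labels for illustration only (not data from the paper).
classes = ["female", "male"]
original = ["female", "male", "male", "female", "male"]
reconstructed = ["female", "male", "female", "female", "male"]

cm = confusion_matrix(original, reconstructed, classes)
agreement = np.trace(cm) / cm.sum()  # fraction of pairs with matching labels
print(cm)
print(agreement)
```

The diagonal counts the cases where the classifier assigns the same attribute to the original and the reconstructed face, so the normalized trace gives a single agreement score per attribute.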

Confusion Matrix and Dataset statistics

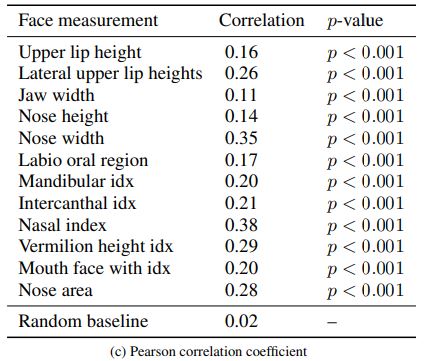

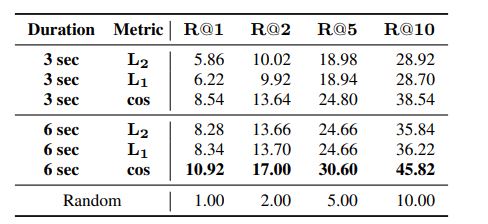

Correlation of Craniofacial features

Feature Similarity

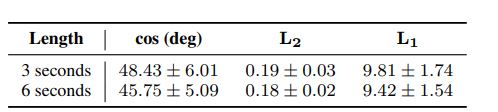

S2f -> Face retrieval performance

Conclusion

The report presented a novel study of face reconstruction from audio recordings of a person speaking. The model was demonstrated to be able to predict plausible face reconstructions with facial features similar to those in real images of the person speaking. The problem was addressed by learning to align the feature space of speech to that of a pretrained face decoder. The model was trained on millions of videos of people speaking from YouTube. The model was then evaluated by comparing the reconstructed faces with the ground-truth face images. The authors believe that facial reconstruction allows a more comprehensive view of voice-face correlation compared to predicting individual features, which may lead to new research opportunities and applications.

Discussion and Critiques

There is evidence that the results of the model may be heavily influenced by external factors. The authors' method of sampling random YouTube videos resulted in a sample that is unbalanced in terms of ethnicity: over half of the samples were white. We also saw a large bias toward white in the model's predictions of ethnicity. This bias suggests that the model may be overfitting the training data, and it calls into question how the model would perform when trained and tested on a balanced dataset. The model was also shown to infer different facial features depending on the language spoken, which raises the question of how heavily the model depends on the spoken language. Testing on a more controlled sample, in which all speech recordings are in the same language, may help determine the model's reliance on spoken language. The evaluation of the results is also highly dependent on the Face++ classifiers. Since the authors evaluate their model by running the Face++ classifiers on the original images and the reconstructions and comparing the predicted age, gender, and ethnicity, the model can only be shown to be as good as the classifier used to evaluate it. Therefore, any limitation of the Face++ classifiers may become a limitation of Speech2Face.