Semi-supervised Learning with Deep Generative Models

Introduction

Large labelled data sets have driven major improvements in the performance of machine learning algorithms, especially supervised neural networks. However, the world in general is not labelled, and there exists far more unlabelled data than labelled data. A common situation is to have a comparatively small quantity of labelled data paired with a much larger amount of unlabelled data. This leads to the idea of a semi-supervised learning model, in which the unlabelled data is used to prime the model with relevant features and the labelled data is then used to learn the classification. A prominent example of this type of model is the Deep Belief Network (DBN) built from restricted Boltzmann machines (RBMs), in which layers of RBMs are trained to learn unsupervised features of the data and a final classification layer is then applied so that labels can be assigned.

Unsupervised learning typically produces what is known as a generative model, which models a joint distribution [math]\displaystyle{ P(x, y) }[/math] that can be sampled from. This contrasts with the supervised discriminative model, which models the conditional distribution [math]\displaystyle{ P(y | x) }[/math]. The paper combines these two approaches to achieve high performance on benchmark tasks and uses deep neural networks in an innovative manner to create a layered semi-supervised classification/generation model.
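The difference between the generative and discriminative views can be illustrated with a minimal sketch. It assumes a small, hypothetical discrete joint table; the numbers and the function names `conditional_y_given_x` and `sample_joint` are made up for illustration and do not come from the paper. The joint distribution can be sampled from directly, while the conditional used for classification follows from it by normalising over the labels.

```python
import numpy as np

# Hypothetical toy joint distribution P(x, y) (illustrative numbers only).
# Rows index x in {0, 1, 2}; columns index the label y in {0, 1}.
joint = np.array([
    [0.30, 0.05],
    [0.10, 0.15],
    [0.05, 0.35],
])
assert np.isclose(joint.sum(), 1.0)


def conditional_y_given_x(joint):
    """Discriminative view: P(y | x) = P(x, y) / sum_y P(x, y)."""
    marginal_x = joint.sum(axis=1, keepdims=True)  # P(x)
    return joint / marginal_x


def sample_joint(joint, n, rng):
    """Generative view: draw n (x, y) pairs from P(x, y)."""
    flat = rng.choice(joint.size, size=n, p=joint.ravel())
    xs, ys = np.unravel_index(flat, joint.shape)
    return xs, ys


cond = conditional_y_given_x(joint)  # each row is a distribution over y
rng = np.random.default_rng(0)
xs, ys = sample_joint(joint, 5, rng)  # five sampled (x, y) pairs
```

In this toy setting a classifier would pick the label with the largest entry in the relevant row of `cond`. A model that only stores `cond` cannot generate new values of x, which is the practical distinction drawn above.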

Current Models and Limitations

Proposed Method

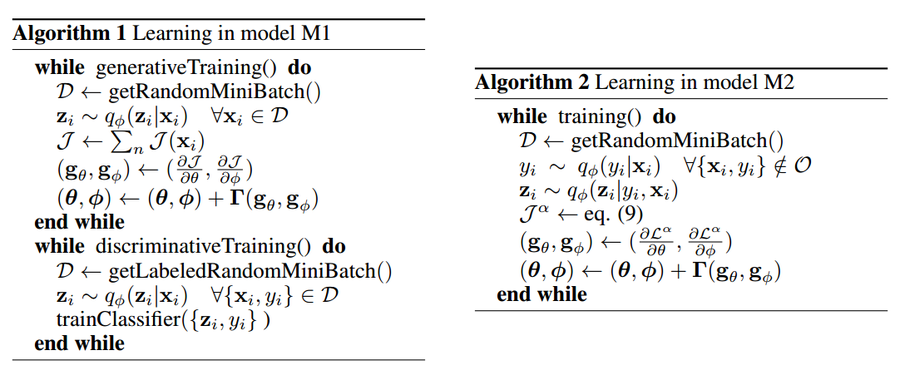

Latent Feature Discriminative Model (M1)

Generative Semi-Supervised Model (M2)

Stacked Generative Semi-Supervised Model (M1+M2)