Robust Imitation of Diverse Behaviors: Difference between revisions

No edit summary |

No edit summary |

||

| Line 19: | Line 19: | ||

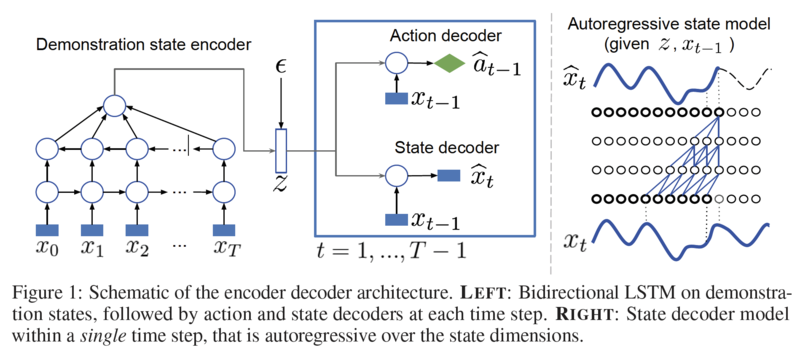

The authors first introduce a variational autoencoder (VAE) for supervised imitation, consisting of a bi-directional LSTM encoder mapping demonstration sequences to embedding vectors, and two decoders. The first decoder is a multi-layer perceptron (MLP) policy mapping a trajectory embedding and the current state to a continuous action vector. The second is a dynamics model mapping the embedding and previous state to the present state while modeling correlations among states with a WaveNet. | The authors first introduce a variational autoencoder (VAE) for supervised imitation, consisting of a bi-directional LSTM encoder mapping demonstration sequences to embedding vectors, and two decoders. The first decoder is a multi-layer perceptron (MLP) policy mapping a trajectory embedding and the current state to a continuous action vector. The second is a dynamics model mapping the embedding and previous state to the present state while modeling correlations among states with a WaveNet. | ||

Figure (Model_Architecture.png): Schematic of the encoder-decoder architecture. Left: bidirectional LSTM on demonstration states, followed by action and state decoders at each time step. Right: state decoder model within a single time step, which is autoregressive over the state dimensions.

Revision as of 11:13, 7 March 2018

Introduction

One of the longest-standing challenges in AI is building versatile embodied agents, both real robots and animated avatars, capable of a wide and diverse set of behaviors. State-of-the-art robots cannot compete with the effortless variety and adaptive flexibility of motor behaviors produced by toddlers. To address this challenge, the authors combine several deep generative approaches to imitation learning in a way that accentuates their individual strengths and addresses their limitations. The end product is a robust neural network policy that can imitate a large and diverse set of behaviors using few training demonstrations.

Motivation

Deep generative models have recently shown great promise in imitation learning for motor control. The authors focus on two approaches, supervised methods that condition on demonstrations and Generative Adversarial Imitation Learning (GAIL), and combine them to address the limitations of each. These limitations are as follows:

- Supervised approaches that condition on demonstrations (VAE):

  - They require large training datasets in order to work for non-trivial tasks

  - They tend to be brittle and fail when the agent diverges too much from the demonstration trajectories

- Generative Adversarial Imitation Learning (GAIL):

  - Adversarial training leads to mode collapse (the tendency of adversarial generative models to cover only a subset of the modes of a probability distribution, resulting in a failure to produce adequately diverse samples)

  - It is more difficult and slower to train, as it does not immediately provide a latent representation of the data

The former approach can model diverse behaviors without dropping modes but does not learn robust policies, while the latter yields robust policies but insufficiently diverse behaviors. The authors therefore combine the favorable aspects of both. The base of their model is a new type of variational autoencoder on demonstration trajectories that learns semantic policy embeddings. Leveraging these policy representations, they develop a new version of GAIL that

- is much more robust than the purely-supervised controller, especially with few demonstrations, and

- avoids mode collapse, capturing many diverse behaviors when GAIL on its own does not.

Model

The authors first introduce a variational autoencoder (VAE) for supervised imitation, consisting of a bi-directional LSTM encoder mapping demonstration sequences to embedding vectors, and two decoders. The first decoder is a multi-layer perceptron (MLP) policy mapping a trajectory embedding and the current state to a continuous action vector. The second is a dynamics model mapping the embedding and previous state to the present state while modeling correlations among states with a WaveNet.

Behavioral cloning with VAE suited for control

In this section, the authors follow a similar approach to Duan et al., but opt for a stochastic VAE, since having a distribution [math]\displaystyle{ q_\phi(z|x_{1:T}) }[/math] over embeddings helps regularize the latent space. In their VAE, an encoder maps a demonstration sequence to an embedding vector [math]\displaystyle{ z }[/math]. Given [math]\displaystyle{ z }[/math], they decode both the state and action trajectories as shown in the figure above. To train the model, the following loss is minimized:

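\begin{align} \mathcal{L}\left( \alpha, w, \phi; \tau_i \right) = - \mathbb{E}_{q_\phi(z|x^i_{1:T_i})} \left[ \sum_{t=1}^{T_i} \log \pi_\alpha(a^i_t | x^i_t, z) + \log p_w(x^i_{t+1} | x^i_t, z) \right] + D_{KL}\left( q_\phi(z|x^i_{1:T_i}) \,\|\, p(z) \right) \end{align}

Here [math]\displaystyle{ \pi_\alpha }[/math] is the action decoder, [math]\displaystyle{ p_w }[/math] is the state decoder, [math]\displaystyle{ q_\phi }[/math] is the encoder, [math]\displaystyle{ p(z) }[/math] is the prior over embeddings, and [math]\displaystyle{ \tau_i }[/math] is the [math]\displaystyle{ i }[/math]-th demonstration trajectory with states [math]\displaystyle{ x^i_t }[/math] and actions [math]\displaystyle{ a^i_t }[/math].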
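A VAE loss of this kind is usually a negative evidence lower bound: the negated log-likelihoods of the decoded actions and states, plus a KL term that pulls the encoder distribution towards a prior. The following is a minimal sketch of a single-sample estimate, not the authors' implementation. It assumes diagonal Gaussians and a standard normal prior, and the function names are hypothetical.

```python
import math

def gaussian_log_prob(x, mean, std):
    """Log-density of x under a diagonal Gaussian with the given mean and std."""
    return sum(
        -0.5 * math.log(2.0 * math.pi) - math.log(s) - 0.5 * ((xi - m) / s) ** 2
        for xi, m, s in zip(x, mean, std)
    )

def kl_to_standard_normal(mean, std):
    """KL( N(mean, diag(std^2)) || N(0, I) ), summed over latent dimensions."""
    # Assumption: the prior p(z) is a standard normal.
    return sum(0.5 * (s * s + m * m - 1.0) - math.log(s) for m, s in zip(mean, std))

def negative_elbo(action_log_probs, state_log_probs, z_mean, z_std):
    """Single-sample loss estimate: -(sum of per-step log-likelihoods) + KL."""
    reconstruction = sum(action_log_probs) + sum(state_log_probs)
    return -reconstruction + kl_to_standard_normal(z_mean, z_std)
```

In this sketch the per-step log-likelihoods would come from `gaussian_log_prob` evaluated on the action decoder's output and from the state decoder's density, both computed with one embedding sampled from the encoder.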

The encoder [math]\displaystyle{ q }[/math] uses a bi-directional LSTM. To produce the final embedding, it calculates the average of all the outputs of the second layer of this LSTM before applying a final linear transformation to generate the mean and standard deviation of a Gaussian. Then, one sample from this Gaussian is taken as the demonstration encoding.
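The pooling and sampling steps of the encoder can be sketched as below. This is an illustrative sketch only: the LSTM itself is omitted and its second-layer outputs are taken as given. The log-standard-deviation parameterization and all function and parameter names are assumptions, not details from the source.

```python
import math
import random

def mean_pool(outputs):
    """Average a list of per-time-step output vectors into one vector."""
    n = len(outputs)
    return [sum(step[d] for step in outputs) / n for d in range(len(outputs[0]))]

def linear(weights, bias, x):
    """Apply y = W x + b, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]

def encode(outputs, w_mean, b_mean, w_log_std, b_log_std, rng):
    """Pool LSTM outputs, map them to Gaussian parameters, and draw one sample z."""
    pooled = mean_pool(outputs)
    mean = linear(w_mean, b_mean, pooled)
    # Assumption: the linear layer predicts log-std, exponentiated to keep std positive.
    std = [math.exp(v) for v in linear(w_log_std, b_log_std, pooled)]
    # One sample from N(mean, diag(std^2)) serves as the demonstration encoding.
    z = [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mean, std)]
    return z, mean, std

# Toy usage with two time steps and a 2-D embedding.
lstm_outputs = [[1.0, 2.0], [3.0, 4.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
z, mean, std = encode(lstm_outputs, identity, [0.0, 0.0], zeros, [0.0, 0.0], random.Random(0))
```

Writing the sample as the mean plus the standard deviation times unit Gaussian noise is the usual reparameterization used when training VAEs, since it lets gradients flow through the mean and standard deviation.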

The action decoder is an MLP that maps the concatenation of the state and the embedding to the parameters of a Gaussian policy. The state decoder is similar to a conditional WaveNet model. In particular, it conditions on the embedding [math]\displaystyle{ z }[/math] and the previous state [math]\displaystyle{ x_{t-1} }[/math] to generate the vector [math]\displaystyle{ x_t }[/math] autoregressively. That is, the autoregression is over the components of the vector [math]\displaystyle{ x_t }[/math]. Finally, instead of a softmax, the model uses a mixture of Gaussians as the output of the WaveNet.
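Autoregression over the components of the state vector can be sketched as follows. Each component is drawn from a one-dimensional mixture of Gaussians whose parameters depend on the embedding, the previous state, and the components already sampled. Here `mixture_params_fn` is a hypothetical stand-in for the WaveNet-style network, and `toy_params` is a made-up example used only to exercise the loop.

```python
import random

def sample_mixture(weights, means, stds, rng):
    """Draw one scalar from a 1-D mixture of Gaussians."""
    k = rng.choices(range(len(weights)), weights=weights, k=1)[0]
    return rng.gauss(means[k], stds[k])

def sample_next_state(z, x_prev, mixture_params_fn, state_dim, rng):
    """Sample x_t one component at a time, conditioning on components already drawn."""
    x_t = []
    for d in range(state_dim):
        # Hypothetical network call: returns mixture weights, means, and stds for component d.
        weights, means, stds = mixture_params_fn(z, x_prev, list(x_t), d)
        x_t.append(sample_mixture(weights, means, stds, rng))
    return x_t

def toy_params(z, x_prev, partial, d):
    """Toy stand-in: one zero-variance component centred on x_prev[d] + sum(partial)."""
    return [1.0], [x_prev[d] + sum(partial)], [0.0]

# With zero variance the sample is deterministic, which makes the dependence visible.
x_t = sample_next_state([0.0], [1.0, 2.0, 3.0], toy_params, 3, random.Random(0))
```

With the toy parameters, each component equals the matching entry of the previous state plus the sum of the components sampled before it, which shows how later dimensions depend on earlier ones.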