Wasserstein Auto-Encoders


Revision as of 10:08, 12 March 2018

Introduction

Recent years have seen a convergence of two previously distinct approaches: representation learning from high dimensional data, and unsupervised generative modeling. In the field that formed at their intersection, Variational Auto-Encoders (VAEs) and Generative Adversarial Networks (GANs) have become well established. VAEs are theoretically elegant, but they tend to generate blurry samples when applied to natural images. GANs, on the other hand, produce sampled images of better visual quality, but they come without an encoder, are harder to train, and suffer from the mode-collapse problem, in which the trained model is unable to capture all the variability in the true data distribution. Thus there has been a push to find the best way to combine the two, but a principled unifying framework is yet to be discovered.

This work proposes a new family of regularized auto-encoders called the Wasserstein Auto-Encoder (WAE). The proposed method provides a novel theoretical insight into setting up an objective function for auto-encoders from the point of view of optimal transport (OT). This theoretical formulation leads the authors to examine adversarial and maximum mean discrepancy based regularizers for matching a prior and the distribution of encoded data points in the latent space. An empirical evaluation is performed on the MNIST and CelebA datasets, where WAE is found to generate samples of better quality than VAE while preserving training stability, the encoder-decoder structure and a nice latent manifold structure.

The main contribution of the proposed algorithm is to provide theoretical foundations for using optimal transport cost as the auto-encoder objective function, while blending auto-encoders and GANs in a principled way. It also theoretically and experimentally explores the interesting relationships between WAEs, VAEs and adversarial auto-encoders.

Proposed Approach

Theory of Optimal Transport and Wasserstein Distance

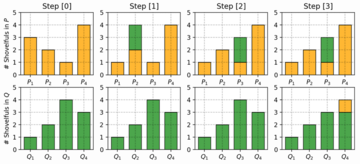

The Wasserstein distance is a measure of the distance between two probability distributions. It is also called the Earth Mover’s (EM) distance, because informally it can be interpreted as the minimum cost of moving piles of dirt that follow one probability distribution so that they follow the other distribution. The cost is quantified by the amount of dirt moved times the moving distance. A simple case where the probability domain is discrete is illustrated in the accompanying figure.
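The dirt-moving picture can be sketched in a few lines of Python for the simplest discrete case. This is only an illustration under stated assumptions, not code from the paper: both distributions are histograms over the same bins, the bins lie on a line one unit apart, and the function name `em_distance_1d` is hypothetical. In this one-dimensional setting the minimum cost equals the total amount of dirt carried across each boundary between neighbouring bins.

```python
from itertools import accumulate


def em_distance_1d(p, q):
    """EM distance between two histograms on the same unit-spaced bins.

    Assumption: bins lie on a line, one unit apart, so moving mass m
    across k bins costs m * k.
    """
    if len(p) != len(q):
        raise ValueError("histograms must have the same number of bins")
    if abs(sum(p) - sum(q)) > 1e-9:
        raise ValueError("histograms must hold the same total mass")
    # carried[i] is the surplus dirt that has to cross from bin i to bin i + 1.
    carried = accumulate(pi - qi for pi, qi in zip(p, q))
    return sum(abs(c) for c in carried)


# Two piles of 0.5 each, both moved two bins to the right.
p = [0.5, 0.5, 0.0, 0.0]
q = [0.0, 0.0, 0.5, 0.5]
print(em_distance_1d(p, q))  # 2.0
```

Each pile of 0.5 travels two bins, so the cost is 0.5 × 2 + 0.5 × 2 = 2.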

When dealing with a continuous probability domain, the EM distance, i.e. the minimum cost over all dirt-moving plans, becomes:

\begin{align}

\small W(p_r, p_g) = \underset{\gamma \in \Pi(p_r, p_g)} {\inf}\pmb{\mathbb{E}}_{(x,y)\sim\gamma}[\| x-y \|]

\end{align}

where \Pi(p_r, p_g) denotes the set of all joint distributions \gamma(x, y) whose marginals are p_r and p_g.

The Wasserstein distance, or the cost of Optimal Transport (OT), induces a much weaker topology than f-divergences such as the KL-divergence, which informally means that a sequence of distributions converges more easily under it. This is particularly important in applications where data is supported on low dimensional manifolds in the input space. In such settings, stronger notions of distance such as the KL-divergence often max out, providing no useful gradients for training. In contrast, OT has a much nicer linear behavior even upon saturation.
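A small numerical sketch may make the contrast concrete. It assumes the simplest possible setting, which is not taken from the paper: the target and the model are both single point masses on a unit-spaced grid, and the helper names `kl_divergence`, `w1_on_grid` and `point_mass` are hypothetical. As soon as the two supports are disjoint the KL-divergence jumps to infinity, whereas the Wasserstein distance grows linearly with the shift.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; infinite if q misses mass of p."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # terms with p_i = 0 contribute nothing
        if qi == 0.0:
            return math.inf
        total += pi * math.log(pi / qi)
    return total


def w1_on_grid(p, q, spacing=1.0):
    """1-Wasserstein distance on an evenly spaced 1D grid (assumed layout)."""
    carried, cost = 0.0, 0.0
    for pi, qi in zip(p, q):
        carried += pi - qi          # mass that must cross to the next grid point
        cost += abs(carried) * spacing
    return cost


def point_mass(index, size):
    """Distribution that puts all of its mass on one grid point."""
    return [1.0 if i == index else 0.0 for i in range(size)]


target = point_mass(0, 6)
for shift in range(6):
    model = point_mass(shift, 6)
    print(shift, kl_divergence(target, model), w1_on_grid(target, model))
```

For every nonzero shift the KL column reads `inf`, so it cannot tell a model that is one step away from one that is five steps away, while the Wasserstein column equals the shift itself.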

Problem Formulation and Notation

This work aims to minimize the OT cost [math]\displaystyle{ \small W_c(P_X, P_G) }[/math] between the true (but unknown) data distribution [math]\displaystyle{ \small P_X }[/math] and a latent variable model [math]\displaystyle{ \small P_G }[/math] specified by the prior distribution [math]\displaystyle{ \small P_Z }[/math] of latent codes [math]\displaystyle{ \small Z \in \pmb{\mathbb{Z}} }[/math] and the generative model [math]\displaystyle{ \small P_G(X|Z) }[/math] of the data points [math]\displaystyle{ \small X \in \pmb{\mathbb{X}} }[/math] given [math]\displaystyle{ \small Z }[/math].

Kantorovich's formulation of the OT problem is given by:

\begin{align}

\small W_c(P_X, P_G) := \underset{\Gamma \in {\mathcal{P}}(X \sim P_X, Y \sim P_G)}{\inf} {\pmb{\mathbb{E}}_{(X,Y)\sim\Gamma}[c(X,Y)]}

\end{align}

where [math]\displaystyle{ \small c(x,y) }[/math] is any measurable cost function and [math]\displaystyle{ \small {\mathcal{P}(X \sim P_X,Y \sim P_G)} }[/math] is the set of all joint distributions of [math]\displaystyle{ \small (X,Y) }[/math] with marginals [math]\displaystyle{ \small P_X }[/math] and [math]\displaystyle{ \small P_G }[/math].

When [math]\displaystyle{ \small c(x,y)=d(x,y) }[/math] for a metric [math]\displaystyle{ \small d }[/math] on [math]\displaystyle{ \small \pmb{\mathbb{X}} }[/math], the following Kantorovich-Rubinstein duality holds for the 1-Wasserstein distance [math]\displaystyle{ \small W_1 }[/math]:

\begin{align}

\small W_1(P_X, P_G) = \underset{f \in {\mathcal{F_L}}} {\sup} {\pmb{\mathbb{E}}_{X \sim P_X}[f(X)]} -{\pmb{\mathbb{E}}_{Y \sim P_G}[f(Y)]}

\end{align}

where [math]\displaystyle{ \small {\mathcal{F_L}} }[/math] is the class of all bounded 1-Lipschitz functions.
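The relationship between the two formulations can be checked on a toy example. The sketch below is an illustration under stated assumptions rather than anything from the paper: both distributions are empirical distributions of three real-valued samples, the cost is [math]\displaystyle{ \small c(x,y)=|x-y| }[/math], and the names `w1_primal`, `dual_objective` and `candidates` are hypothetical. For equal-size samples on the real line the optimal coupling pairs the sorted values, which gives the primal value directly; every bounded 1-Lipschitz function then yields a lower bound through the dual objective.

```python
def w1_primal(xs, ys):
    """Primal W_1 for two equal-size 1D samples.

    In one dimension the optimal coupling matches the sorted samples.
    """
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equal sample sizes")
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


def dual_objective(f, xs, ys):
    """E_{X ~ P_X}[f(X)] - E_{Y ~ P_G}[f(Y)] for empirical distributions."""
    return sum(map(f, xs)) / len(xs) - sum(map(f, ys)) / len(ys)


xs = [0.0, 1.0, 2.0]   # samples standing in for P_X
ys = [2.0, 3.0, 7.0]   # samples standing in for P_G

# A few bounded 1-Lipschitz test functions (clipping keeps them bounded).
candidates = {
    "clipped x": lambda x: min(max(x, -10.0), 10.0),
    "clipped -x": lambda x: -min(max(x, -10.0), 10.0),
    "clipped |x - 2|": lambda x: min(abs(x - 2.0), 10.0),
}

print("primal:", w1_primal(xs, ys))
for name, f in candidates.items():
    print(name, dual_objective(f, xs, ys))
```

Here the primal value is 3.0, and the dual objective never exceeds it; the clipped version of [math]\displaystyle{ \small f(x)=-x }[/math] attains it because every sample of [math]\displaystyle{ \small P_G }[/math] lies to the right of its matched sample of [math]\displaystyle{ \small P_X }[/math].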