probabilistic Matrix Factorization: Difference between revisions

| Line 1: | Line 1: | ||

==Introduction and Motivation (Netflix Dataset)== | ==Introduction and Motivation (Netflix Dataset)== | ||

The problem provides an approach to [http://en.wikipedia.org/wiki/Collaborative_filtering| collaborative filtering] that can scale linearly and, thus, handle large datasets. The methods are discussed in the context of the [http://en.wikipedia.org/wiki/Netflix_Prize Netflix dataset]. A very popular | The problem provides an approach to [http://en.wikipedia.org/wiki/Collaborative_filtering| collaborative filtering] that can scale linearly and, thus, handle large datasets. The methods are discussed in the context of the [http://en.wikipedia.org/wiki/Netflix_Prize Netflix dataset]. A very popular way to do collaborative filtering is to train low-dimensional factor models. This approach is based on the idea that attitudes or preferences of a user can be determined by a small number of unobserved factors. | ||

This dataset is <math>N \times M</math> matrix where each row corresponds to a user, each column to a movie and the entries to a rating. Ratings take on values <math>\,1,...,K</math>. The difficulty in this problem arises from the fact that each user has only rated a subset of movies. If there is some latent rank-<math>\,D</math> structure to these ratings (that is, underlying variables that determine how much a user enjoys a movie) then it would beneficial to factor the matrix. | This dataset is <math>N \times M</math> matrix where each row corresponds to a user, each column to a movie and the entries to a rating. Ratings take on values <math>\,1,...,K</math>. The difficulty in this problem arises from the fact that each user has only rated a subset of movies. If there is some latent rank-<math>\,D</math> structure to these ratings (that is, underlying variables that determine how much a user enjoys a movie) then it would beneficial to factor the matrix. | ||

Formally, given a preference matrix <math>R\,</math> which is <math>N \times M</math>, we wish to find an <math>N \times D</math> matrix <math>\,U^T</math> and an <math>D \times M</math> matrix <math>\,V</math>. <math>\,V</math> is a factor matrix (i.e. for the <math>\,D</math> latent variables, it gives the value for each movie) and <math>\,U^T</math> gives coefficients of these factors for each user. The actual "Prize" dataset has <math>N=480,189, M = 17,770</math> with over 100 million of these entries having values. | Formally, given a preference matrix <math>R\,</math> which is <math>N \times M</math>, we wish to find an <math>N \times D</math> matrix <math>\,U^T</math> and an <math>D \times M</math> matrix <math>\,V</math>. <math>\,V</math> is a factor matrix (i.e. for the <math>\,D</math> latent variables, it gives the value for each movie) and <math>\,U^T</math> gives coefficients of these factors for each user. The actual "Prize" dataset has <math>\,N=480,189, M = 17,770</math> with over 100 million of these entries having values. | ||

To train a low-dimensional factor model for this dataset, one must find the best rank-<math>\,D</math> approximation to the observed <math>N \times M</math> target matrix R (under the given loss function). | |||

Another difficulty that arises with this dataset is that it is unbalanced. There is much variance in user activity; thus, some rows are much sparser than others. | Another difficulty that arises with this dataset is that it is unbalanced. There is much variance in user activity; thus, some rows are much sparser than others. | ||

Revision as of 18:23, 2 December 2010

Introduction and Motivation (Netflix Dataset)

The problem provides an approach to collaborative filtering that can scale linearly and, thus, handle large datasets. The methods are discussed in the context of the Netflix dataset. A very popular way to do collaborative filtering is to train low-dimensional factor models. This approach is based on the idea that attitudes or preferences of a user can be determined by a small number of unobserved factors.

This dataset is [math]\displaystyle{ N \times M }[/math] matrix where each row corresponds to a user, each column to a movie and the entries to a rating. Ratings take on values [math]\displaystyle{ \,1,...,K }[/math]. The difficulty in this problem arises from the fact that each user has only rated a subset of movies. If there is some latent rank-[math]\displaystyle{ \,D }[/math] structure to these ratings (that is, underlying variables that determine how much a user enjoys a movie) then it would beneficial to factor the matrix.

Formally, given a preference matrix [math]\displaystyle{ R\, }[/math] which is [math]\displaystyle{ N \times M }[/math], we wish to find an [math]\displaystyle{ N \times D }[/math] matrix [math]\displaystyle{ \,U^T }[/math] and an [math]\displaystyle{ D \times M }[/math] matrix [math]\displaystyle{ \,V }[/math]. [math]\displaystyle{ \,V }[/math] is a factor matrix (i.e. for the [math]\displaystyle{ \,D }[/math] latent variables, it gives the value for each movie) and [math]\displaystyle{ \,U^T }[/math] gives coefficients of these factors for each user. The actual "Prize" dataset has [math]\displaystyle{ \,N=480,189, M = 17,770 }[/math] with over 100 million of these entries having values. To train a low-dimensional factor model for this dataset, one must find the best rank-[math]\displaystyle{ \,D }[/math] approximation to the observed [math]\displaystyle{ N \times M }[/math] target matrix R (under the given loss function).

Another difficulty that arises with this dataset is that it is unbalanced. There is much variance in user activity; thus, some rows are much sparser than others.

There are several existing ways to solve this problem but they often require approximations that are slow and, at times, inaccurate. Another instinctive approach might be to use SVD. However, since there is a lot of missing data in this case, the optimization problem that needs to be solved is not convex. Thus, existing methods do not scale well and often have lower accuracy in cases where a row is sparse.

The proposed solution is to use a probabilistic model - probabilistic matrix factorization<ref name="PMF">R. Salakhutdinov and A.Mnih. "Probabilistic Matrix Factorization".</ref>.

Probabilistic Matrix Factorization

Given the preference matrix described above with entries [math]\displaystyle{ R_{ij} }[/math], the aim is to find a factorization that minimizes the root mean squared error(RMSE) on the test set. An initial attempt is to use linear model where we assume that there is Gaussian noise in the data. Define [math]\displaystyle{ I_{ij} }[/math] to be 1 if [math]\displaystyle{ R_{ij} }[/math] is known and 0 otherwise. Further, let [math]\displaystyle{ \,\mathcal N(x|\mu,\sigma^2) = f_X(x) }[/math] with [math]\displaystyle{ X \sim \mathcal N(\mu,\sigma^2) }[/math]. Then, we can define a conditional probability of the ratings with hyperparameter [math]\displaystyle{ \sigma^2 }[/math]

[math]\displaystyle{ p(R|U,V,\sigma^2) = \prod_{i=1}^N \prod_{j=1}^M \left[ \mathcal N(R_{ij}|U_i^TV_j,\sigma^2)\right]^{I_{ij}}\,\,\,\,\,\,\,\,(1) }[/math]

and priors on [math]\displaystyle{ U }[/math] and [math]\displaystyle{ V }[/math] with hyperparameters [math]\displaystyle{ \sigma^2_U, \sigma^2_V }[/math]

[math]\displaystyle{ p(U|\sigma^2_U) = \prod_{i=1}^N \mathcal N(U_i|0,\sigma^2_U\textbf{I})\,\,\,\,\,\,\,\,p(V|\sigma^2_V) = \prod_{i=1}^M \mathcal N(V_i|0,\sigma^2_V\textbf{I})\,\,\,\,\,\,\,\,(2) }[/math]

Then we aim to maximize the log posterior over [math]\displaystyle{ U }[/math] and [math]\displaystyle{ V }[/math] (to derive this, simply substitute the definition of [math]\displaystyle{ \mathcal{N} }[/math] and take the log): [math]\displaystyle{ \ln p(U,V,\sigma^2,\sigma^2_V,\sigma^2_U) = - \frac{1}{2\sigma^2}\sum_{i=1}^N\sum_{j=1}^M I_{ij}(R_{ij} - U_i^TV_j)^2 - \frac{1}{2\sigma^2_U} \sum_{i=1}^N U_i^TU_i - \frac{1}{2\sigma^2_V} \sum_{i=1}^N V_i^TV_i }[/math][math]\displaystyle{ - \frac{1}{2}\left(\kappa\ln\sigma^2 + ND\ln\sigma^2_U + MD\ln\sigma^2_V\right) + C\,\,\,\,\,\,\,\,(3) }[/math]

where [math]\displaystyle{ \kappa }[/math] is the number of entries that are known and [math]\displaystyle{ C }[/math] is a constant independent of the parameters. We can fix the three variance hyperparameters as constants which reduces the optimization to the first three terms. Then defining [math]\displaystyle{ \lambda_M = \sigma^2/\sigma^2_M }[/math] for [math]\displaystyle{ M=U,V }[/math] and multiplying by [math]\displaystyle{ -\sigma^2\lt 0 }[/math] results in a goal of minimizing

[math]\displaystyle{ E = \frac{1}{2}\left(\sum_{i=1}^N\sum_{j=1}^M I_{ij}(R_{ij} - U_i^TV_j)^2 + \lambda_U \sum_{i=1}^N \|U_i \|^2_F + \lambda_V \sum_{i=1}^M \| V_i \|^2_F \right)\,\,\,\,\,\,\,(4) }[/math]

where [math]\displaystyle{ \|A \|^2_F = \sum_{i=1}^m\sum_{j=1}^n |a_{ij}|^2 }[/math] is the Frobenius norm. Note that if all values are known (i.e. [math]\displaystyle{ I_{ij} = 1 \,\, \forall(i,j) }[/math] ) then as [math]\displaystyle{ \sigma^2_U, \sigma^2_V \rightarrow \infty }[/math], [math]\displaystyle{ E }[/math] reduces to the objective function of SVD.

It is also important to note that this model can result in values greater than [math]\displaystyle{ K }[/math] which is the range of the ratings. To remedy this, map the ratings to [math]\displaystyle{ [0,1] }[/math] with the function [math]\displaystyle{ t(x) = (x-1)/(K-1) }[/math]. Consequently, in the optimization problem (5), replace [math]\displaystyle{ (U_i^TV_j) }[/math] with [math]\displaystyle{ g((U_i^TV_j)) }[/math] where g is the logistic function, [math]\displaystyle{ g(x) = (1+\exp(-x))^{-1} }[/math].

Finally, (4) can be minimized using the method of steepest descent which is linear in the number of observations.

Constrained Probabilistic Matrix Factorization

In the above PMF approach, if a row is very sparse (that is, a user has rated very movies) then the estimated values will just approximately be the mean rating by the other users. Thus, we need a way remedy this. This is done by introducing a constraint and modifying PMF to created a constrained PMF algorithm.

First, we define an indicator matrix I which is 1 for entries that are known and 0 for entries that are not. Recall that D is the estimated number of latent factors. Let W be a [math]\displaystyle{ D \times M }[/math] that measures similarity between movies and the latent variables.

Then for a user i we can define [math]\displaystyle{ U_i = Y_i + \frac{\sum_{k=1}^M I_{ik}W_k}{I_{ik}} }[/math]

This means that users with similar sets of watched movies will have similar priors for [math]\displaystyle{ U_i }[/math]. Constrained PMF differs from PMF with the second term here so that [math]\displaystyle{ U_i \neq Y_i }[/math]. So, replacing [math]\displaystyle{ U_i }[/math] in the distribution above gives [math]\displaystyle{ p(R|Y,V,W,\sigma^2) = \prod_{i=1}^N \prod_{j=1}^M \left[ \mathcal N(R_{ij}|g\left(\left[Y_i + \frac{\sum_{k=1}^M I_{ik}W_k}{I_{ik}}\right]^TV_j\right),\sigma^2)\right]^{I_{ij}}\,\,\,\,\,\,\,\,(5) }[/math]

where, again, g is the logistic function.

Again, we can transform this maximization into a minimization like with (4) and use gradient descent (linear in number of observations). There is a significant improvement in performance.

Experimental Results

Consider a subset of the Netflix data set with 50,000 users and 1850 movies, with 1,082,982 known values. Additionally, more than half of the rows are sparse (less than 10 entries), which will help to show the improvement offered by constrained PMF.

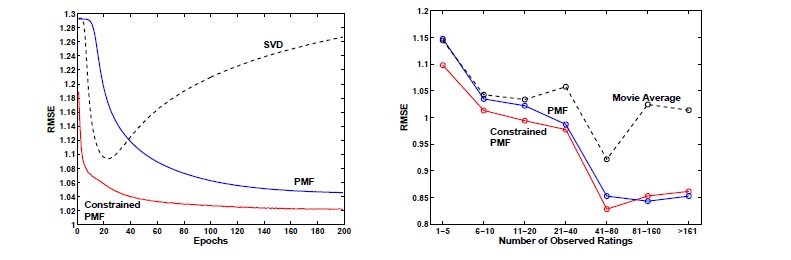

A factorization was created with [math]\displaystyle{ D = 30 }[/math] using SVD, PMF and constrained PMF. The parameters [math]\displaystyle{ \lambda_K = 0.002 }[/math] for [math]\displaystyle{ K = U,V,W,Y }[/math]. The results are shown in the figures below<ref name="PMF"/>. On the left RMSE is plotted against the number of passes through the data. On the right, the RMSE is plotted against the sparsity of the rows.

Both PMF models outperform SVD and, further, constrained PMF beats PMF. An interesting, but expected, result is that constrained PMF is clearly the best method in the case of sparse rows, but is indistinguishable from PMF when there are many known entries. They both do much better than a simple average of the movie ratings as the amount of information increases.

Another test was done looking at matrix in which the only known data is whether a movie has been rated. Tests show that PMF can use this information to provide better estimates than a simple average.

Results of similar tests on the full Netflix dataset provide comparable results.

Conclusion

The main problem is to factor a matrix that has many missing values, including many sparse rows, with hopes of using the known values to provide information about the missing values.

PMF provides a probabilistic approach using Gaussian assumptions on the known data and the factor matrices. We can further constrain priors to improve the algorithm, especially in the case of sparse rows.

Experimental results show that PMF and constrained PMF perform quite well.

The next steps might be a completely Bayesian approach which would involve a computationally expensive MCMC step but has shown improvement in preliminary experiments.

References

<references />