stat341f11: Difference between revisions

m (Conversion script moved page Stat341f11 to stat341f11: Converting page titles to lowercase) |

| (230 intermediate revisions by 20 users not shown) | |||

| Line 66: | Line 66: | ||

Facts about this algorithm: | Facts about this algorithm: | ||

*In this example, the first 30 terms in the sequence are a permutation of integers from 1 to 30 and then the sequence repeats itself. | *In this example, the first 30 terms in the sequence are a permutation of integers from 1 to 30 and then the sequence repeats itself. In the general case, this algorithm has a period of m-1. | ||

*Values are between <b>0</b> and <b>m-1</b>, inclusive. | *Values are between <b>0</b> and <b>m-1</b>, inclusive. | ||

*Dividing the numbers by <b> m-1 </b> yields numbers in the interval <b>[0,1]</b>. | *Dividing the numbers by <b> m-1 </b> yields numbers in the interval <b>[0,1]</b>. | ||
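The facts above can be checked numerically. The sketch below assumes a multiplicative congruential generator <math>x_{k+1} = a x_k \bmod m</math> with <math>a = 13</math>, <math>m = 31</math> and seed <math>x_0 = 1</math>. The modulus is implied by the 30-term permutation, but the multiplier and the seed are assumptions, since this excerpt does not show them.

```matlab
% Multiplicative congruential generator (assumed parameters).
a = 13; m = 31; x0 = 1;      % a and x0 are assumptions for illustration
n = 31;
x = zeros(1, n);
x(1) = mod(a * x0, m);
for k = 2:n
    x(k) = mod(a * x(k-1), m);
end
is_perm = isequal(sort(x(1:30)), 1:30);   % first 30 terms: a permutation of 1..30
repeats = (x(31) == x(1));                % after 30 terms the sequence repeats
u = x / (m - 1);                          % rescaled values, as in the last bullet
```

With an additive constant of zero the value 0 never appears, so the rescaled numbers in this sketch fall in (0,1].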

| Line 73: | Line 73: | ||

===Inverse Transform Method=== | ===Inverse Transform Method=== | ||

This is a basic method for sampling. Theoretically using this method we can generate sample numbers at random from any probability distribution once we know its cumulative distribution function (cdf). | This is a basic method for sampling. Theoretically using this method we can generate sample numbers at random from any probability distribution once we know its cumulative distribution function (cdf). This method is very efficient computationally if the cdf can be analytically inverted. | ||

====Theorem==== | ====Theorem==== | ||

Take <math>U \sim~ \mathrm{Unif}[0, 1]</math> and let <math>X = F^{-1}(U) </math>. Then <math>X</math> has distribution function <math>F(\cdot)</math>, | Take <math>U \sim~ \mathrm{Unif}[0, 1]</math> and let <math>\ X = F^{-1}(U) </math>. Then <math>\ X</math> has cumulative distribution function <math>F(\cdot)</math>, i.e. <math>F(x)=P(X \leq x)</math>, where <math>F^{-1}(\cdot)</math> is the inverse of <math>F(\cdot)</math>. | ||

'''Proof''' | '''Proof''' | ||

| Line 161: | Line 159: | ||

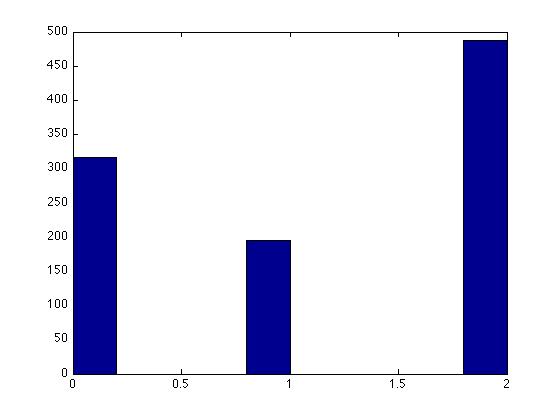

F(x) = \begin{cases} | F(x) = \begin{cases} | ||

0 & x < 0 \\ | 0 & x < 0 \\ | ||

0.3 & | 0.3 & 0 \leq x < 1 \\ | ||

0.5 & | 0.5 & 1 \leq x < 2 \\ | ||

1 & | 1 & x \geq 2 | ||

\end{cases}</math> | \end{cases}</math> | ||
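A minimal sketch of the inverse transform method for this discrete cdf, assuming the jumps occur at 0, 1 and 2, so that the cdf values 0.3, 0.5 and 1 correspond to probabilities 0.3, 0.2 and 0.5. The sample size and variable names are illustrative choices.

```matlab
% Inverse transform sampling from a discrete distribution on {0, 1, 2}.
n = 100000;                      % illustrative sample size
u = rand(n, 1);                  % u ~ Unif[0,1]
x = zeros(n, 1);                 % u <= 0.3        -> x = 0
x(u > 0.3 & u <= 0.5) = 1;       % 0.3 < u <= 0.5  -> x = 1
x(u > 0.5) = 2;                  % u > 0.5         -> x = 2
freq = [mean(x == 0), mean(x == 1), mean(x == 2)];   % empirical probabilities
```

The entries of <code>freq</code> should be close to 0.3, 0.2 and 0.5.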

| Line 268: | Line 266: | ||

<math>P(accepted|y) =P(u\leq \frac{f(y)}{c \cdot g(y)}) =\frac{f(y)}{c \cdot g(y)} </math>,where u ~ Unif [0,1] | <math>P(accepted|y) =P(u\leq \frac{f(y)}{c \cdot g(y)}) =\frac{f(y)}{c \cdot g(y)} </math>,where u ~ Unif [0,1] | ||

<math>P(accepted) = \sum P(accepted|y)\cdot P(y)=\int^{}_y \frac{f( | <math>P(accepted) = \sum P(accepted|y)\cdot P(y)=\int^{}_y \frac{f(s)}{c \cdot g(s)}g(s) ds=\int^{}_y \frac{f(s)}{c} ds=\frac{1}{c} \cdot\int^{}_y f(s) ds=\frac{1}{c}</math> | ||

So, | So, | ||

| Line 282: | Line 280: | ||

In general: | In general: | ||

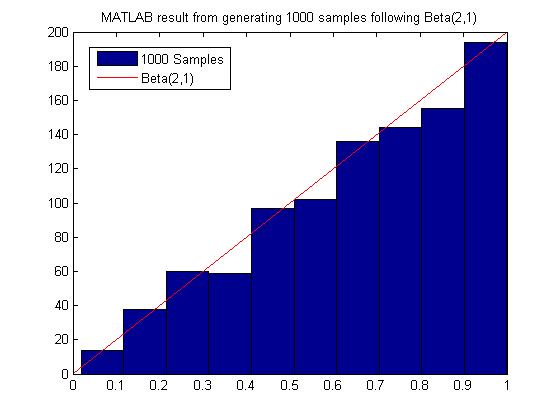

<math>Beta(\alpha, \beta) = \frac{\Gamma (\alpha + \beta)}{\Gamma(\alpha)\Gamma(\beta)}</math> <math>\displaystyle x^{\alpha-1}</math> <math>\displaystyle(1-x)^{\beta-1}</math>, <math>\displaystyle 0<x<1</math> | |||

Note: <math>\!\Gamma(n) = (n-1)!</math> if n is a positive integer | Note: <math>\!\Gamma(n) = (n-1)!</math> if n is a positive integer | ||

| Line 291: | Line 289: | ||

&= 2x \end{align}</math> | &= 2x \end{align}</math> | ||

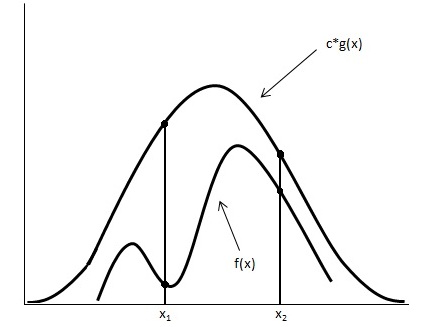

We want to choose <math>\displaystyle g(x)</math> that is easy to sample from. So we choose <math>\displaystyle g(x)</math> to be uniform distribution. | We want to choose <math>\displaystyle g(x)</math> that is easy to sample from. So we choose <math>\displaystyle g(x)</math> to be the uniform distribution on <math>\ (0,1)</math> since that is the support of the Beta distribution. | ||

We now want a constant c such that <math>f(x) \leq c \cdot g(x) </math> for all x from Unif(0,1) | We now want a constant c such that <math>f(x) \leq c \cdot g(x) </math> for all x from Unif(0,1) | ||

| Line 298: | Line 296: | ||

So,<br /> | So,<br /> | ||

<math>c \geq \frac{f(x)}{g(x)}</math>, for all x from (0,1) | <math>c \geq \frac{f(x)}{g(x)}</math>, for all x from (0,1) | ||
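For the density <math>f(x) = 2x</math> derived above and <math>g(x) = 1</math> on (0,1), the smallest valid constant is <math>c = 2</math>. The following sketch of the acceptance-rejection step uses that value; the number of proposals and the variable names are assumptions made for illustration.

```matlab
% Acceptance-rejection sampling from f(x) = 2x on (0,1) with g = Unif(0,1).
n = 100000;                      % number of proposals (illustrative)
c = 2;                           % sup of f(x)/g(x) = 2x on (0,1)
y = rand(n, 1);                  % proposals from g
u = rand(n, 1);                  % uniforms for the accept/reject test
accept = u <= (2 * y) ./ (c * 1);   % f(y) / (c * g(y)), with g(y) = 1
x = y(accept);                   % accepted points follow f
acc_rate = mean(accept);         % should be close to 1/c
```

The acceptance rate should be near 1/c = 0.5, and the mean of the accepted points near 2/3.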

| Line 409: | Line 407: | ||

:<math> \sum_{i=1}^{t} X_i \sim~ Gamma (t, \lambda) </math> where <math>X_i \sim~Exp(\lambda)</math><br> | :<math> \sum_{i=1}^{t} X_i \sim~ Gamma (t, \lambda) </math> where <math>X_i \sim~Exp(\lambda)</math><br> | ||

'''Method''' | '''Method''' <br> | ||

Suppose we want to sample <math>\ k </math> points from <math>\ Gamma (t, \lambda) </math>. <br> | |||

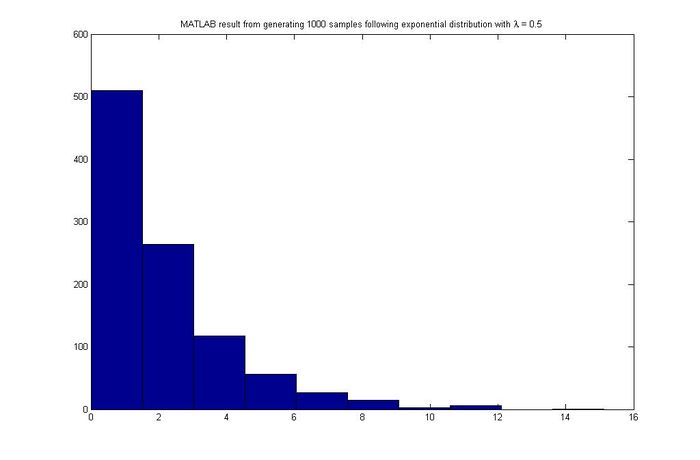

We can sample the exponential distribution using the inverse transform method from the previous class,<br> | We can sample the exponential distribution using the inverse transform method from the previous class,<br> | ||

:<math>\,f(x)={\lambda}e^{-{\lambda}x}</math><br> | :<math>\,f(x)={\lambda}e^{-{\lambda}x}</math><br> | ||

| Line 416: | Line 414: | ||

:<math>\,F^{-1}(u)=\frac{-ln(u)}{\lambda}</math> | :<math>\,F^{-1}(u)=\frac{-ln(u)}{\lambda}</math> | ||

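(1 - u) has the same distribution as u since <math>U \sim~ unif [0,1] </math>, so u can be used in place of (1 - u)<br> | (1 - u) has the same distribution as u since <math>U \sim~ unif [0,1] </math>, so u can be used in place of (1 - u)<br> | ||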

:<math> \begin{align} \frac{-ln(u_1)}{\lambda} - \frac{ln(u_2)}{\lambda} - ... - \frac{ln(u_t)}{\lambda} = x_1\\ \vdots \\ \frac{-ln(u_1)}{\lambda} - \frac{ln(u_2)}{\lambda} - ... - \frac{ln(u_t)}{\lambda} = | :<math> \begin{align} \frac{-ln(u_1)}{\lambda} - \frac{ln(u_2)}{\lambda} - ... - \frac{ln(u_t)}{\lambda} = x_1\\ \vdots \\ \frac{-ln(u_1)}{\lambda} - \frac{ln(u_2)}{\lambda} - ... - \frac{ln(u_t)}{\lambda} = x_k \end{align} | ||

:</math><br> | :</math><br> | ||

:<math> \frac {-\sum_{i=1}^{t} ln(u_i)}{\lambda} = x</math> | :<math> \frac {-\sum_{i=1}^{t} ln(u_i)}{\lambda} = x</math> | ||
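The formula above can be sketched in Matlab as follows. The values of t, <math>\lambda</math> and k are hypothetical, and t is assumed to be a positive integer; each of the k draws uses its own set of t uniforms.

```matlab
% Gamma(t, lambda) draws as sums of t independent Exp(lambda) draws.
t = 3; lambda = 2; k = 100000;   % hypothetical values for illustration
u = rand(t, k);                  % one column of t uniforms per Gamma draw
x = -sum(log(u), 1) / lambda;    % 1-by-k vector of Gamma(t, lambda) draws
```

The sample mean of <code>x</code> should be near <math>t/\lambda</math> and the sample variance near <math>t/\lambda^2</math>.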

| Line 463: | Line 461: | ||

# Generate <math>\displaystyle u_1</math> and <math>\displaystyle u_2</math>, two values sampled from a uniform distribution between 0 and 1. | # Generate <math>\displaystyle u_1</math> and <math>\displaystyle u_2</math>, two values sampled from a uniform distribution between 0 and 1. | ||

# Set <math>\displaystyle R^2 = -2log(u_1)</math> so that <math>\displaystyle R^2</math> is exponential with mean | # Set <math>\displaystyle R^2 = -2log(u_1)</math> so that <math>\displaystyle R^2</math> is exponential with mean 2 <br> Set <math>\!\theta = 2*\pi*u_2</math> so that <math>\!\theta</math> ~ <math>\ Unif[0, 2\pi] </math> | ||

# Set <math>\displaystyle X = R cos(\theta)</math> <br> Set <math>\displaystyle Y = R sin(\theta)</math> | # Set <math>\displaystyle X = R cos(\theta)</math> <br> Set <math>\displaystyle Y = R sin(\theta)</math> | ||
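The three steps above can be sketched as follows; the sample size n is an illustrative choice.

```matlab
% Box-Muller: two independent N(0,1) samples from two uniforms.
n = 100000;                      % illustrative sample size
u1 = rand(n, 1);
u2 = rand(n, 1);
R = sqrt(-2 * log(u1));          % R^2 is exponential with mean 2
theta = 2 * pi * u2;             % theta ~ Unif[0, 2*pi]
X = R .* cos(theta);
Y = R .* sin(theta);
```

Both <code>X</code> and <code>Y</code> should have sample mean near 0 and sample variance near 1, with sample covariance near 0.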

| Line 517: | Line 515: | ||

<pre> | <pre> | ||

v = a + b * x; | |||

</pre> | </pre> | ||

| Line 562: | Line 560: | ||

root_E = u * (s ^ (1 / 2)); | root_E = u * (s ^ (1 / 2)); | ||

z = (root_E * [x y]); | z = (root_E * [x y]'); | ||

z(: | z(1,:) = z(1,:) + 0; | ||

z(: | z(2,:) = z(2,:) + -3; | ||

scatter(z(: | scatter(z(1,:), z(2,:)) | ||

</pre> | </pre> | ||

| Line 617: | Line 615: | ||

In MatLab this can be coded with a single line. The following generates a sample from <math>\displaystyle X \sim Bin(n, p)</math> | In MatLab this can be coded with a single line. The following generates a sample from <math>\displaystyle X \sim Bin(n, p)</math> | ||

sum(rand(n, 1) <= p, 1) | |||

==Bayesian Inference and Frequentist Inference - October 4, 2011== | ==Bayesian Inference and Frequentist Inference - October 4, 2011== | ||

| Line 623: | Line 621: | ||

===Bayesian inference vs Frequentist inference=== | ===Bayesian inference vs Frequentist inference=== | ||

The Bayesian method has become popular in the last few decades as advances in simulation and computing have made it more applicable. For more information about its history and application, please refer to http://en.wikipedia.org/wiki/Bayesian_inference. | The Bayesian method has become popular in the last few decades as advances in simulation and computing have made it more applicable. For more information about its history and application, please refer to http://en.wikipedia.org/wiki/Bayesian_inference. | ||

As for | As for frequentist inference, please refer to http://en.wikipedia.org/wiki/Frequentist_inference. | ||

====Example==== | ====Example==== | ||

Consider | Consider the 'probability' that a person drinks a cup of coffee on a specific day. The interpretations of this for a frequentist and a Bayesian are as follows: | ||

<br><br> | <br><br> | ||

Frequentist: There is no explanation to this | Frequentist: There is no explanation to this expression. It is essentially meaningless since it has only occurred once. Therefore, it is not a probability. | ||

<br> | <br> | ||

Bayesian: Probability | Bayesian: Probability captures not only the frequency of occurrences but also one's degree of belief about the random component of a proposition. Therefore it is a valid probability. | ||

====Example of face identification==== | ====Example of face identification==== | ||

Take the face as input x and the person as output y. The person can be either Ali or Tom. | Consider a picture of a face that is associated with an identity (person). Take the face as input x and the person as output y. The person can be either Ali or Tom. We have y=1 if it is Ali and y=0 if it is Tom. We can divide the picture into 100*100 pixels and insert them into a 10,000*1 column vector, which captures x. | ||

If you are a frequentist, you would compare Pr(X=x|y=1) with Pr(X=x|y=0) and see which one is higher. If you are | If you are a frequentist, you would compare <math>\Pr(X=x|y=1)</math> with <math>\Pr(X=x|y=0)</math> and see which one is higher.<br /> If you are Bayesian, you would compare <math>\Pr(y=1|X=x)</math> with <math>\Pr(y=0|X=x)</math>. | ||

====Summary of differences between two schools==== | ====Summary of differences between two schools==== | ||

| Line 646: | Line 643: | ||

*Bayesian: Parameters are random variables and we can make probabilistic statements about them. | *Bayesian: Parameters are random variables and we can make probabilistic statements about them. | ||

---- | ---- | ||

*Frequentist: Statistical procedures should have long run frequency | *Frequentist: Statistical procedures should be designed to have long run frequency properties. | ||

e.g. a 95% confidence interval should trap the true value of the parameter | e.g. a 95% confidence interval should trap the true value of the parameter with limiting frequency at least 95%. | ||

*Bayesian: It makes inferences about <math>\theta</math> by producing a | *Bayesian: It makes inferences about <math>\theta</math> by producing a probability distribution for <math>\theta</math>. Inference (e.g. point estimates and interval estimates) will be extracted from this distribution :<math>f(\theta|X) = \frac{f(X | \theta)\, f(\theta)}{f(X)}.</math> | ||

====Bayesian inference==== | ====Bayesian inference==== | ||

| Line 666: | Line 663: | ||

We denote <math>\displaystyle f({x_1,...,x_n}|\theta)</math> as <math>\displaystyle L_n(\theta)</math> which is called likelihood. And we use <math>\displaystyle x^n</math> to denote <math>\displaystyle (x_1,...,x_n)</math>. | We denote <math>\displaystyle f({x_1,...,x_n}|\theta)</math> as <math>\displaystyle L_n(\theta)</math> which is called likelihood. And we use <math>\displaystyle x^n</math> to denote <math>\displaystyle (x_1,...,x_n)</math>. | ||

<math>f(\theta|x^n) = \frac{f(x^n|\theta) \cdot f(\theta)}{f(x^n)}=\frac{f(x^n|\theta) \cdot f(\theta)}{\int^{}_\theta f(x^n|\theta) \cdot f(\theta) d\theta}</math> , where <math>\int^{}_\theta f(x^n|\theta) \cdot f(\theta) d\theta</math> is a constant <math>\displaystyle c_n</math>. So <math>f(\theta|x^n) \propto f(x^n|\theta) \cdot f(\theta)</math>. The posterior probability is proportional to the likelihood times prior probability. | <math>f(\theta|x^n) = \frac{f(x^n|\theta) \cdot f(\theta)}{f(x^n)}=\frac{f(x^n|\theta) \cdot f(\theta)}{\int^{}_\theta f(x^n|\theta) \cdot f(\theta) d\theta}</math> , where <math>\int^{}_\theta f(x^n|\theta) \cdot f(\theta) d\theta</math> is a constant <math>\displaystyle c_n</math> which does not depend on <math>\displaystyle \theta </math>. So <math>f(\theta|x^n) \propto f(x^n|\theta) \cdot f(\theta)</math>. The posterior probability is proportional to the likelihood times prior probability. | ||

Note that it does not matter if we throw away <math>\displaystyle c_n</math>; we can always recover it. | |||

What do we do with the posterior distribution? | |||

*Point estimate | |||

<math> \bar{\theta}=\int\theta \cdot f(\theta|x^n) d\theta=\frac{\int\theta \cdot L_n(\theta)\cdot f(\theta) d(\theta)}{c_n}</math> | |||

*Bayesian interval estimate | |||

<math> \int^{a}_{-\infty} f(\theta|x^n) d\theta=\int^{\infty}_{b} f(\theta|x^n) d\theta=\frac{\alpha}{2} </math> | |||

<math> | Let C=(a,b); | ||

Then <math> P(\theta\in C|x^n)=\int^{b}_{a} f(\theta|x^n)d(\theta)=1-\alpha </math>. | |||

C is a <math>\displaystyle 1-\alpha</math> posterior interval. | |||

Let <math>\tilde{\theta}=(\theta_1,...,\theta_d)^T</math>, then <math>f(\theta_1|x^n) = \int^{} \int^{} \dots \int^{}f(\theta|X)d\theta_2d\theta_3 \dots d\theta_d </math> and <math>E(\theta_1)=\int^{}\theta_1 \cdot f(\theta_1|x^n) d\theta_1</math> | Let <math>\tilde{\theta}=(\theta_1,...,\theta_d)^T</math>, then <math>f(\theta_1|x^n) = \int^{} \int^{} \dots \int^{}f(\tilde{\theta}|X)d\theta_2d\theta_3 \dots d\theta_d </math> and <math>E(\theta_1|x^n)=\int^{}\theta_1 \cdot f(\theta_1|x^n) d\theta_1</math> | ||

====Example 1: Estimating parameters of a univariate Gaussian distribution==== | ====Example 1: Estimating parameters of a univariate Gaussian distribution==== | ||

| Line 680: | Line 686: | ||

<math>f(x|\theta)= \frac{1}{\sqrt{2\pi}\sigma} \cdot e^{-\frac{1}{2}(\frac{x-\mu}{\sigma})^2}</math> | <math>f(x|\theta)= \frac{1}{\sqrt{2\pi}\sigma} \cdot e^{-\frac{1}{2}(\frac{x-\mu}{\sigma})^2}</math> | ||

<math>L_n(\theta)= \prod_{i=1}^n \frac{1}{\sqrt{2\pi}\sigma} \cdot e^{-\frac{1}{2}(\frac{x_i-\mu}{\sigma})^2}</math> | <math>L_n(\theta)= \prod_{i=1}^n \frac{1}{\sqrt{2\pi}\sigma} \cdot e^{-\frac{1}{2}(\frac{x_i-\mu}{\sigma})^2}</math> | ||

<math>\ln L_n(\theta) = l(\theta) = \sum_{i=1}^n -\frac{1}{2}\ln 2\pi-\ln \sigma-\frac{1}{2}(\frac{x_i-\mu}{\sigma})^2</math> | <math>\ln L_n(\theta) = l(\theta) = \sum_{i=1}^n[ -\frac{1}{2}\ln 2\pi-\ln \sigma-\frac{1}{2}(\frac{x_i-\mu}{\sigma})^2]</math> | ||

To get the maximum likelihood estimator of <math>\!\mu</math> (mle), we find the <math>\hat{\mu}</math> which maximizes <math>\displaystyle L_n(\theta)</math>: | To get the maximum likelihood estimator of <math>\!\mu</math> (mle), we find the <math>\hat{\mu}</math> which maximizes <math>\displaystyle L_n(\theta)</math>: | ||

| Line 700: | Line 707: | ||

so <math>f(\mu) = \frac{1}{\sqrt{2\pi}\Gamma} \cdot e^{-\frac{1}{2}(\frac{\mu-\mu_0}{\Gamma})^2}</math> | so <math>f(\mu) = \frac{1}{\sqrt{2\pi}\Gamma} \cdot e^{-\frac{1}{2}(\frac{\mu-\mu_0}{\Gamma})^2}</math> | ||

<math>f(\mu | <math>f(x|\mu) = \frac{1}{\sqrt{2\pi}\tilde{\sigma}} \cdot e^{-\frac{1}{2}(\frac{x-\mu}{\tilde{\sigma}})^2}</math> | ||

<math>\tilde{\mu} = \frac{\frac{n}{\sigma^2}}{\frac{n}{\sigma^2}+\frac{1}{\Gamma^2}}\bar{x}+\frac{\frac{1}{\Gamma^2}}{\frac{n}{\sigma^2}+\frac{1}{\Gamma^2}}\mu_0</math>, where <math>\tilde{\mu}</math> is the estimator of <math>\!\mu</math>. | <math>\tilde{\mu} = \frac{\frac{n}{\sigma^2}}{\frac{n}{\sigma^2}+\frac{1}{\Gamma^2}}\bar{x}+\frac{\frac{1}{\Gamma^2}}{\frac{n}{\sigma^2}+\frac{1}{\Gamma^2}}\mu_0</math>, where <math>\tilde{\mu}</math> is the estimator of <math>\!\mu</math>. | ||

* If prior belief about <math>\!\mu_0</math> is strong, then <math>\!\Gamma</math> is small and <math>\frac{1}{\Gamma^2}</math> is large. <math>\tilde{\mu}</math> is close to <math>\!\mu_0</math> and the observations have little effect on it. On the contrary, if prior belief about <math>\!\mu_0</math> is weak, <math>\!\Gamma</math> is large and <math>\frac{1}{\Gamma^2}</math> is small. <math>\tilde{\mu}</math> depends more on observations.(This is intuitive: when our original belief is reliable, the sample is not important in improving the result; when the belief is not reliable, we depend a lot on the sample.) | * If prior belief about <math>\!\mu_0</math> is strong, then <math>\!\Gamma</math> is small and <math>\frac{1}{\Gamma^2}</math> is large. <math>\tilde{\mu}</math> is close to <math>\!\mu_0</math> and the observations have little effect on it. On the contrary, if prior belief about <math>\!\mu_0</math> is weak, <math>\!\Gamma</math> is large and <math>\frac{1}{\Gamma^2}</math> is small. <math>\tilde{\mu}</math> depends more on observations. (This is intuitive: when our original belief is reliable, the sample is not important in improving the result; when the belief is not reliable, we depend a lot on the sample.) | ||

* When the sample is large (i.e. n <math>\to \infty</math>), <math>\tilde{\mu} \to \bar{x}</math> and the impact of prior belief about <math>\!\mu</math> is weakened. | * When the sample is large (i.e. n <math>\to \infty</math>), <math>\tilde{\mu} \to \bar{x}</math> and the impact of prior belief about <math>\!\mu</math> is weakened. | ||
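The weighting in <math>\tilde{\mu}</math> can be illustrated numerically. In the sketch below, <code>tau</code> stands for the prior standard deviation written as <math>\Gamma</math> above, and all numeric values (including the sample mean <code>xbar</code>) are hypothetical.

```matlab
% Posterior estimate of mu as a weighted average of xbar and mu0.
sigma = 1;                       % known standard deviation of the data (hypothetical)
mu0 = 0; tau = 0.5;              % prior mean and prior standard deviation (hypothetical)
xbar = 2;                        % observed sample mean (hypothetical)
n = [5 5000];                    % a small and a large sample size
w = (n ./ sigma^2) ./ (n ./ sigma^2 + 1 / tau^2);   % weight on xbar
mu_tilde = w .* xbar + (1 - w) .* mu0;              % estimate for each n
```

For n = 5 the estimate sits between the prior mean and the sample mean, while for n = 5000 it is almost equal to <code>xbar</code>, in line with the two bullets above.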

| Line 735: | Line 742: | ||

===='''General Procedure'''==== | ===='''General Procedure'''==== | ||

i) Draw n samples <math> x_i \sim~ | i) Draw n samples <math> x_i \sim~ Unif[a,b] </math> | ||

ii) Compute <math> \ w(x_i) </math> for every sample | ii) Compute <math> \ w(x_i) </math> for every sample | ||

| Line 741: | Line 748: | ||

iii) Obtain an estimate of the integral, <math> \hat{I} </math>, as follows: | iii) Obtain an estimate of the integral, <math> \hat{I} </math>, as follows: | ||

<math> \hat{I} = \frac{1}{n} \sum_{i=1}^{n} w( | <math> \hat{I} = \frac{1}{n} \sum_{i=1}^{n} w(x_i)</math> . Clearly, this is just the average of the simulation results. | ||

By the strong law of large numbers <math> \hat{I} </math> converges to <math> \ I </math> as <math> \ n \rightarrow \infty </math>. Because of this, we can compute all sorts of useful information, such as variance, standard error, and confidence intervals. | By the strong law of large numbers <math> \hat{I} </math> converges to <math> \ I </math> as <math> \ n \rightarrow \infty </math>. Because of this, we can compute all sorts of useful information, such as variance, standard error, and confidence intervals. | ||

| Line 747: | Line 754: | ||

Standard Error: <math> SE = \frac{Standard Deviation} {\sqrt{n}} </math> | Standard Error: <math> SE = \frac{Standard Deviation} {\sqrt{n}} </math> | ||

Variance: <math> V = \frac{\sum_{i=1}^{n} (w(x_i)-I)^2}{n-1} </math> | Variance: <math> V = \frac{\sum_{i=1}^{n} (w(x_i)-\hat{I})^2}{n-1} </math> | ||

Confidence Interval: <math> I \pm t_{(\alpha/2)} SE </math> | Confidence Interval: <math> \hat{I} \pm t_{(\alpha/2)} SE </math> | ||
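A minimal sketch of the general procedure together with these summaries. It assumes the integral has the form <math>I = \int_a^b h(x)dx</math> with <math>w(x) = (b-a)h(x)</math>; the integrand <code>h</code> and the limits are hypothetical. The normal quantile 1.96 is used in place of the t quantile, which is an approximation that is reasonable only for large n.

```matlab
% Monte Carlo integration with standard error and an approximate 95% interval.
a = 0; b = 2; n = 100000;        % hypothetical limits and sample size
h = @(x) x.^2;                   % hypothetical integrand; exact integral is 8/3
x = a + (b - a) * rand(n, 1);    % x_i ~ Unif[a, b]
w = (b - a) * h(x);              % w(x_i)
I_hat = mean(w);                 % estimate of the integral
V = sum((w - I_hat).^2) / (n - 1);   % sample variance of w(x_i)
SE = sqrt(V / n);                % standard error of I_hat
CI = [I_hat - 1.96 * SE, I_hat + 1.96 * SE];   % large-n normal approximation
```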

==='''Example: Uniform Distribution'''=== | ==='''Example: Uniform Distribution'''=== | ||

| Line 755: | Line 762: | ||

Consider the integral, <math> \int_{0}^{1} x^3dx </math>, which is easily solved through standard analytical integration methods, and is equal to .25. Now, let us check this answer with a numerical approximation using Monte Carlo Integration. | Consider the integral, <math> \int_{0}^{1} x^3dx </math>, which is easily solved through standard analytical integration methods, and is equal to .25. Now, let us check this answer with a numerical approximation using Monte Carlo Integration. | ||

We generate a 1 by 10000 vector of uniform (on the interval [0,1]) random variables and call that vector 'u'. We see that our 'w' in this case is <math> x^3 </math>, so we set <math> w = u^3 </math>. Our | We generate a 1 by 10000 vector of uniform (on the interval [0,1]) random variables and call that vector 'u'. We see that our 'w' in this case is <math> x^3 </math>, so we set <math> w = u^3 </math>. Our <math>\hat{I}</math> is equal to the mean of w. | ||

In Matlab, we can solve this integration problem with the following code: | In Matlab, we can solve this integration problem with the following code: | ||

| Line 874: | Line 881: | ||

Therefore, <math> \displaystyle f(p,q|x,y)\propto p^x(1-p)^{n-x}q^y(1-q)^{m-y} </math>. | Therefore, <math> \displaystyle f(p,q|x,y)\propto p^x(1-p)^{n-x}q^y(1-q)^{m-y} </math>. | ||

<math> E[\delta] = \int_{0}^{1} \int_{0}^{1} (p-q)f(p,q|x,y) | <math> E[\delta|x,y] = \int_{0}^{1} \int_{0}^{1} (p-q)f(p,q|x,y)dpdq </math>. | ||

As you can see this is much tougher than the frequentist approach. | As you can see this is much tougher than the frequentist approach. | ||

| Line 886: | Line 893: | ||

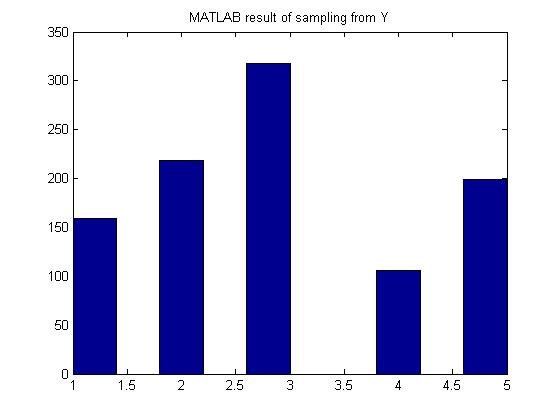

Frequentist approach: <br><br><math>\displaystyle \hat{p_1} = \frac{X}{n}</math> ; <math>\displaystyle \hat{p_2} = \frac{Y}{m}</math><br><br><math>\displaystyle \hat{\delta} = \hat{p_1} - \hat{p_2} = \frac{X}{n} - \frac{Y}{m}</math><br><br> | Frequentist approach: <br><br><math>\displaystyle \hat{p_1} = \frac{X}{n}</math> ; <math>\displaystyle \hat{p_2} = \frac{Y}{m}</math><br><br><math>\displaystyle \hat{\delta} = \hat{p_1} - \hat{p_2} = \frac{X}{n} - \frac{Y}{m}</math><br><br> | ||

Bayesian approach to compute the expected value of <math>\displaystyle \delta</math>:<br><br> | Bayesian approach to compute the expected value of <math>\displaystyle \delta</math>:<br><br> | ||

<math>\displaystyle E(\delta) = \int\int(p_1-p_2) f(p_1,p_2|X,Y)\,dp_1dp_2</math><br><br> | <math>\displaystyle E(\delta|X,Y) = \int\int(p_1-p_2) f(p_1,p_2|X,Y)\,dp_1dp_2</math><br><br> | ||

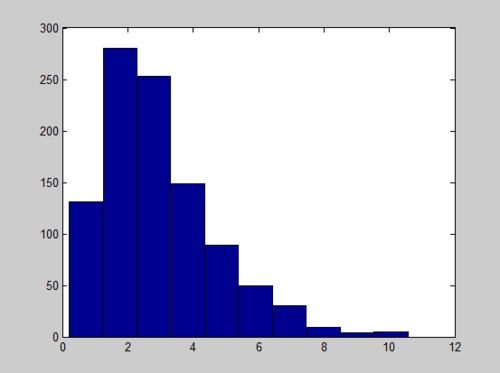

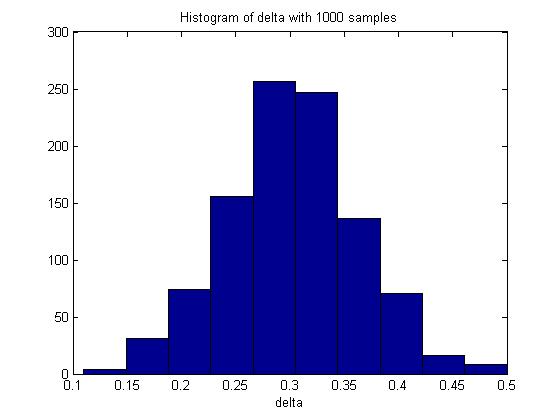

Assume that <math>\displaystyle n = 100, m = 100, p_1 = 0.5, p_2 = 0.8</math> and the sample size is 1000.<br> | Assume that <math>\displaystyle n = 100, m = 100, p_1 = 0.5, p_2 = 0.8</math> and the sample size is 1000.<br> | ||

| Line 895: | Line 902: | ||

p_1 = 0.5; | p_1 = 0.5; | ||

p_2 = 0.8; | p_2 = 0.8; | ||

p1 = mean(rand(n,1000)<p_1); | p1 = mean(rand(n,1000)<p_1,1); | ||

p2 = mean(rand(m,1000)<p_2); | p2 = mean(rand(m,1000)<p_2,1); | ||

delta = p2 - p1; | delta = p2 - p1; | ||

hist(delta) | hist(delta) | ||

| Line 943: | Line 950: | ||

====Problem==== | ====Problem==== | ||

If <math>\displaystyle g(x)</math> is not chosen appropriately, then the variance of the estimate <math>\hat{I}</math> may be very large. | If <math>\displaystyle g(x)</math> is not chosen appropriately, then the variance of the estimate <math>\hat{I}</math> may be very large. The problem here is actually similar to what we encounter with the Rejection-Acceptance method. Consider the second moment of <math>y(x)</math>:<br><br> | ||

<math>\begin{align} | <math>\begin{align} | ||

E_g((y(x))^2) \\ | |||

& = \int (\frac{h(x)f(x)}{g(x)})^2 g(x) dx \\ | & = \int (\frac{h(x)f(x)}{g(x)})^2 g(x) dx \\ | ||

& = \int \frac{h^2(x) f^2(x)}{g^2(x)} g(x) dx \\ | & = \int \frac{h^2(x) f^2(x)}{g^2(x)} g(x) dx \\ | ||

| Line 957: | Line 964: | ||

1. We can actually compute the form of <math>\displaystyle g(x)</math> to have optimal variance. <br>Mathematically, it is to find <math>\displaystyle g(x)</math> subject to <math>\displaystyle \min_g [\ E_g([y(x)]^2) - (E_g[y(x)])^2\ ]</math><br> | 1. We can actually compute the form of <math>\displaystyle g(x)</math> to have optimal variance. <br>Mathematically, it is to find <math>\displaystyle g(x)</math> subject to <math>\displaystyle \min_g [\ E_g([y(x)]^2) - (E_g[y(x)])^2\ ]</math><br> | ||

It can be shown that the optimal <math>\displaystyle g(x)</math> is <math>\displaystyle | It can be shown that the optimal <math>\displaystyle g(x)</math> is proportional to <math>\displaystyle {|h(x)|f(x)}</math>, that is, <math>\displaystyle g(x) = \frac{|h(x)|f(x)}{\int |h(s)|f(s)\,ds}</math>. Using the optimal <math>\displaystyle g(x)</math> will minimize the variance of estimation in Importance Sampling. This is of theoretical interest but not useful in practice: to write down g(x) explicitly we need its normalizing constant, which is an integral of the same kind as the one we wanted to compute in the first place. | ||

2. In practice, we shall choose <math>\displaystyle g(x)</math> which has similar shape as <math>\displaystyle f(x)</math> but with a thicker tail than <math>\displaystyle f(x)</math> in order to avoid the problem mentioned above.<br> | 2. In practice, we shall choose <math>\displaystyle g(x)</math> which has similar shape as <math>\displaystyle f(x)</math> but with a thicker tail than <math>\displaystyle f(x)</math> in order to avoid the problem mentioned above.<br> | ||

| Line 987: | Line 994: | ||

<math>\displaystyle I = Pr(Z>3)= \int^\infty_3 f(x)\,dx </math><br> | <math>\displaystyle I = Pr(Z>3)= \int^\infty_3 f(x)\,dx </math><br> | ||

To apply importance sampling, we have to choose a <math>\displaystyle g(x)</math> which we will sample from. In this example, we can choose <math>\displaystyle g(x)</math> to be the probability density function of exponential distribution, normal distribution with mean 0 and variance greater than 1 or normal distribution with mean greater than 0 and variance 1 etc. | To apply importance sampling, we have to choose a <math>\displaystyle g(x)</math> which we will sample from. In this example, we can choose <math>\displaystyle g(x)</math> to be the probability density function of exponential distribution, normal distribution with mean 0 and variance greater than 1 or normal distribution with mean greater than 0 and variance 1 etc. The goal is to place most samples in the region <math>\displaystyle x > 3</math>, where the integrand is non-zero, so that few samples are wasted and the result is more accurate. For the following, we take <math>\displaystyle g(x)</math> to be the pdf of <math>\displaystyle N(4,1)</math>.<br> | ||
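A sketch of this choice, taking <math>g</math> to be the pdf of <math>N(4,1)</math> and <math>h(x)</math> to be the indicator of <math>x > 3</math>. The sample size and variable names are illustrative, and the exact value is computed with <code>erfc</code> only for comparison.

```matlab
% Importance sampling estimate of Pr(Z > 3) with proposal g = N(4,1).
n = 100000;                      % illustrative sample size
x = randn(n, 1) + 4;             % draws from g
h = (x > 3);                     % indicator of the event of interest
w = exp(-x.^2 / 2) ./ exp(-(x - 4).^2 / 2);   % f(x)/g(x); the 1/sqrt(2*pi) factors cancel
I_hat = mean(h .* w);            % importance sampling estimate
I_exact = 0.5 * erfc(3 / sqrt(2));   % exact tail probability, for comparison
```

Sampling directly from <math>N(0,1)</math> would place only about 0.13% of the draws above 3, whereas most draws from <math>N(4,1)</math> land there.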

Procedure: | Procedure: | ||

| Line 1,043: | Line 1,050: | ||

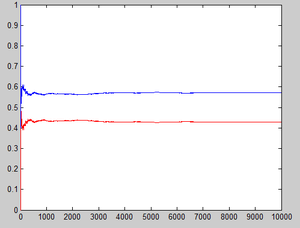

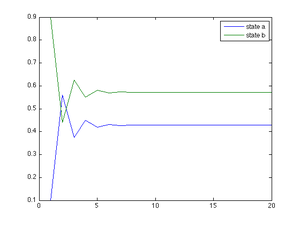

A stochastic process <math> \{ x_t : t \in T \}</math> is a collection of random variables. Variables <math>\displaystyle x_t</math> take values in some set <math>\displaystyle X</math> called the '''state space.''' The set <math>\displaystyle T</math> is called the '''index set.''' | A stochastic process <math> \{ x_t : t \in T \}</math> is a collection of random variables. Variables <math>\displaystyle x_t</math> take values in some set <math>\displaystyle X</math> called the '''state space.''' The set <math>\displaystyle T</math> is called the '''index set.''' | ||

====Markov Chain==== | ===='''Markov Chain'''==== | ||

A Markov Chain is a stochastic process for which the distribution of <math>\displaystyle x_t</math> depends only on <math>\displaystyle x_{t-1}</math>. It is a random process characterized as being memoryless, meaning that the next occurrence of a defined event is only dependent on the current event; not on the preceding sequence of events. | A Markov Chain is a stochastic process for which the distribution of <math>\displaystyle x_t</math> depends only on <math>\displaystyle x_{t-1}</math>. It is a random process characterized as being memoryless, meaning that the next occurrence of a defined event is only dependent on the current event; not on the preceding sequence of events. | ||

Formal Definition: The process <math> \{ x_t : t \in T \}</math> is a Markov Chain if <math>\displaystyle Pr(x_t|x_0, x_1,..., x_{t-1})= Pr(x_t|x_{t-1})</math> for all <math> \{t \in T \}</math> and for all <math> \{x \in X \}</math> | Formal Definition: The process <math> \{ x_t : t \in T \}</math> is a Markov Chain if <math>\displaystyle Pr(x_t|x_0, x_1,..., x_{t-1})= Pr(x_t|x_{t-1})</math> for all <math> \{t \in T \}</math> and for all <math> \{x \in X \}</math> | ||

For a Markov Chain, <math>\displaystyle f(x_1,...x_n)= f(x_1)f(x_2|x_1)f(x_3|x_2)...f(x_n|x_{n-1})</math> | For a Markov Chain, <math>\displaystyle f(x_1,...x_n)= f(x_1)f(x_2|x_1)f(x_3|x_2)...f(x_n|x_{n-1})</math> | ||

<br><br>Real Life Example: | <br><br>'''Real Life Example:''' | ||

<br>When going for an interview, the employer only looks at your highest education achieved. The employer would not look at the past educations received (elementary school, high school etc.) because the employer believes that the highest education achieved summarizes your previous educations. Therefore, anything before your most recent previous education is irrelevant. In other words, we can say that<math> x_t </math>is regarded as the summary of <math>x_{t-1},...,x_2,x_1</math>, so when we need to determine <math>x_{t+1}</math>, we only need to pay attention to <math>x_{t}</math>. | <br>When going for an interview, the employer only looks at your highest education achieved. The employer would not look at the past educations received (elementary school, high school etc.) because the employer believes that the highest education achieved summarizes your previous educations. Therefore, anything before your most recent previous education is irrelevant. In other words, we can say that <math>\! x_t </math> is regarded as the summary of <math>\!x_{t-1},...,x_2,x_1</math>, so when we need to determine <math>\!x_{t+1}</math>, we only need to pay attention to <math>\!x_{t}</math>. | ||

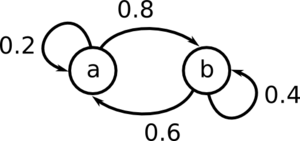

====Transition Probabilities==== | ====Transition Probabilities==== | ||

The <b>transition probability</b> is the probability of jumping from one state to another state in a Markov Chain. | |||

That is, | Formally, let us define <math>\displaystyle P_{ij} = Pr(x_{t+1}=j|x_t=i)</math> to be the transition probability. | ||

That is, <math>\displaystyle P_{ij}</math> is the probability of transitioning from state i to state j in a single step. Then the matrix <math>\displaystyle P</math> whose (i,j) element is <math>\displaystyle P_{ij}</math> is called the <b>transition matrix</b>. | |||

Properties of P: | Properties of P: | ||

:1) <math>\displaystyle P_{ij} \geq 0</math> The probability of going to another state cannot be negative | :1) <math>\displaystyle P_{ij} \geq 0</math> (The probability of going to another state cannot be negative) | ||

:2) <math>\displaystyle \sum_{\forall | :2) <math>\displaystyle \sum_{\forall j}P_{ij} = 1</math> (The probability of transitioning to some state from state i (including remaining in state i) is one) | ||

====Random Walk==== | ====Random Walk==== | ||

| Line 1,186: | Line 1,195: | ||

Here is an example: | Here is an example: | ||

Suppose we want to find the | Suppose we want to find the stationary distribution of | ||

<math>P=\left(\begin{matrix} | <math>P=\left(\begin{matrix} | ||

1/3&1/3&1/3\\ | 1/3&1/3&1/3\\ | ||

| Line 1,209: | Line 1,218: | ||

<math>\displaystyle \pi_2 = \frac{2}{9}</math> | <math>\displaystyle \pi_2 = \frac{2}{9}</math> | ||

Thus, the | Thus, the stationary distribution is <math>\displaystyle \pi = (\frac{1}{3}, \frac{4}{9}, \frac{2}{9})</math> | ||
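The stationary distribution can also be obtained numerically by solving <math>\pi = \pi P</math> together with <math>\sum_i \pi_i = 1</math>. In the sketch below only the first row of P is taken from the text; the second and third rows are not visible in this excerpt, so the values used are an assumption, chosen to be consistent with the stated result.

```matlab
% Stationary distribution from pi = pi*P and sum(pi) = 1.
P = [1/3 1/3 1/3;                % first row as given in the text
     1/4 3/4 0;                  % assumed row (not shown in this excerpt)
     1/2 0   1/2];               % assumed row (not shown in this excerpt)
N = size(P, 1);
A = [P' - eye(N); ones(1, N)];   % (P' - I)*pi' = 0 plus the normalization row
b = [zeros(N, 1); 1];
pi_hat = (A \ b)';               % least-squares solve of the consistent system
```

With these rows, <code>pi_hat</code> agrees with <math>(\frac{1}{3}, \frac{4}{9}, \frac{2}{9})</math> up to rounding error.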

====Detailed Balance==== | ====Detailed Balance==== | ||

| Line 1,236: | Line 1,245: | ||

::<math>\! = \pi_j \sum_{i=1}^N P_{ji}</math> | ::<math>\! = \pi_j \sum_{i=1}^N P_{ji}</math> | ||

::<math>\! = \pi_j</math>, as the sum of the entries in a | ::<math>\! = \pi_j</math>, as the sum of the entries in a row of P must sum to 1. | ||

So <math>\!\pi = \pi P</math>. | So <math>\!\pi = \pi P</math>. | ||

| Line 1,344: | Line 1,353: | ||

<math>\mu = \begin{pmatrix} 0.2 & 0.1 & 0.7 \end{pmatrix}</math> | <math>\mu = \begin{pmatrix} 0.2 & 0.1 & 0.7 \end{pmatrix}</math> | ||

Then <math>\!\mu | Then <math>\!\mu \neq \mu P</math>. | ||

This can be observed through the following Matlab code. | This can be observed through the following Matlab code. | ||

| Line 1,363: | Line 1,372: | ||

Note that <math>\!\mu_1 = \!\mu_4</math>, which indicates that <math>\!\mu</math> will cycle forever. | Note that <math>\!\mu_1 = \!\mu_4</math>, which indicates that <math>\!\mu</math> will cycle forever. | ||

This means that this chain has a stationary distribution, but is not limiting. A chain has a limiting distribution iff it is ergodic, that is, aperiodic and | This means that this chain has a stationary distribution, but it is not a limiting distribution. A chain has a limiting distribution iff it is ergodic, that is, aperiodic and positive recurrent. While cycling breaks detailed balance and a limiting distribution on the whole state space does not exist, the cycling behavior itself acts as the "limiting distribution". Also, for each cycle (closed class), we have a mini-limiting distribution, which is useful in analyzing small-scale long-term behavior. | ||

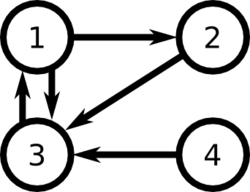

===Page Rank=== | ===Page Rank=== | ||

| Line 1,559: | Line 1,568: | ||

</math> | </math> | ||

The beta function, ''B'', appears as a | The beta function, ''B'', appears as a normalizing constant but it can be simplified by construction of the method. | ||

====Example==== | ====Example==== | ||

| Line 1,628: | Line 1,637: | ||

[[File:redaccoursb10.JPG|300px]] [[File:010metro.PNG|300px]] | [[File:redaccoursb10.JPG|300px]] [[File:010metro.PNG|300px]] | ||

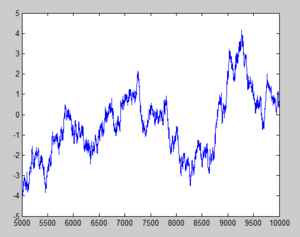

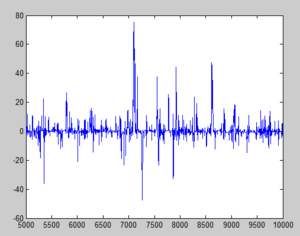

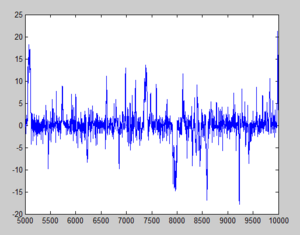

With <math>\,b=10</math>, jumps are very unlikely to be accepted as they deviate far from the mean (i.e. <math>\,y</math> is rejected as <math>\ u | With <math>\,b=10</math>, jumps are very unlikely to be accepted as they deviate far from the mean (i.e. <math>\,y</math> is rejected as <math>\ u>r </math> and <math>\,x(i)=x(i-1)</math> most of the time), hence most sample points stay fairly close to the origin. | ||

The third graph that resembles white noise (as in the case of <math>\,b=2</math>) indicates better sampling as more points are covered and accepted. For <math>\,b=0.1</math>, we have lots of jumps but most values are not repeated, hence the stationary distribution is less obvious; whereas in the <math>\,b=10</math> case, many points remain around 0. Approximately 73% were selected as x(i-1). | The third graph that resembles white noise (as in the case of <math>\,b=2</math>) indicates better sampling as more points are covered and accepted. For <math>\,b=0.1</math>, we have lots of jumps but most values are not repeated, hence the stationary distribution is less obvious; whereas in the <math>\,b=10</math> case, many points remain around 0. Approximately 73% were selected as x(i-1). | ||

| Line 1,643: | Line 1,652: | ||

==''' Theory and Applications of Metropolis-Hastings - October 27th, 2011'''== | ==''' Theory and Applications of Metropolis-Hastings - October 27th, 2011'''== | ||

As mentioned in the previous section, the idea of the Metropolis-Hastings (MH) algorithm is to produce a Markov chain that converges to a stationary distribution <math>!f</math> which we are interested in sampling from. | As mentioned in the previous section, the idea of the Metropolis-Hastings (MH) algorithm is to produce a Markov chain that converges to a stationary distribution <math>\!f</math> which we are interested in sampling from. | ||

====Convergence==== | ====Convergence==== | ||

| Line 1,657: | Line 1,666: | ||

In the MH algorithm, we use a proposal distribution to generate <math>\, y </math>~<math>\displaystyle q(y|x)</math>, and accept y with probability <math>min\left\{\frac{f(y)}{f(x)}\frac{q(x|y)}{q(y|x)},1\right\}</math> | In the MH algorithm, we use a proposal distribution to generate <math>\, y </math>~<math>\displaystyle q(y|x)</math>, and accept y with probability <math>min\left\{\frac{f(y)}{f(x)}\frac{q(x|y)}{q(y|x)},1\right\}</math> | ||

Suppose, without loss of generality, that <math>\frac{f(y)}{f(x)}\frac{q(x|y)}{q(y|x)} | Suppose, without loss of generality, that <math>\frac{f(y)}{f(x)}\frac{q(x|y)}{q(y|x)} \le 1</math>. This implies that <math>\frac{f(x)}{f(y)}\frac{q(y|x)}{q(x|y)} \ge 1</math> | ||

Let <math>\,r(x,y)</math> be the chance of accepting point y given that we are at point x. | Let <math>\,r(x,y)</math> be the chance of accepting point y given that we are at point x. | ||

So <math>\,r(x,y) = min\left\{\frac{f(y)}{f(x)}\frac{q(x|y)}{q(y|x)},1\right\} = \frac{f( | So <math>\,r(x,y) = min\left\{\frac{f(y)}{f(x)}\frac{q(x|y)}{q(y|x)},1\right\} = \frac{f(y)}{f(x)} \frac{q(x|y)}{q(y|x)}</math> | ||

Let <math>\,r(y,x)</math> be the chance of accepting point x given that we are at point y. | Let <math>\,r(y,x)</math> be the chance of accepting point x given that we are at point y. | ||

| Line 1,683: | Line 1,692: | ||

:i.e. <math>\,f(x)</math> is the stationary distribution | :i.e. <math>\,f(x)</math> is the stationary distribution | ||

It can be shown (although not here) that <math>f</math> is a limiting distribution as well. Therefore, the MH algorithm generates a sequence whose distribution converges to <math>f</math>, the target. | It can be shown (although not here) that <math>\!f</math> is a limiting distribution as well. Therefore, the MH algorithm generates a sequence whose distribution converges to <math>\!f</math>, the target. | ||

====Implementation==== | ====Implementation==== | ||

In the implementation of MH, the proposal distribution is commonly chosen to be symmetric, which simplifies the calculations and makes the algorithm more intuitively understandable. The MH algorithm can usually be regarded as a random walk along the distribution we want to sample from. Suppose we have a distribution <math>f</math>: | In the implementation of MH, the proposal distribution is commonly chosen to be symmetric, which simplifies the calculations and makes the algorithm more intuitively understandable. The MH algorithm can usually be regarded as a random walk along the distribution we want to sample from. Suppose we have a distribution <math>\!f</math>: | ||

[[File:Standard normal distribution.gif]] | [[File:Standard normal distribution.gif]] | ||

Suppose we start the walk at point <math>x</math>. The point <math>y_{1}</math> is in a denser region than <math>x</math>, therefore, the walk will always progress from <math>x</math> to <math>y_{1}</math>. On the other hand, <math>y_{2}</math> is in a less dense region, so it is not certain that the walk will progress from <math>x</math> to <math>y_{2}</math>. In terms of the MH algorithm: | Suppose we start the walk at point <math>\!x</math>. The point <math>\!y_{1}</math> is in a denser region than <math>\!x</math>, therefore, the walk will always progress from <math>\!x</math> to <math>\!y_{1}</math>. On the other hand, <math>\!y_{2}</math> is in a less dense region, so it is not certain that the walk will progress from <math>\!x</math> to <math>\!y_{2}</math>. In terms of the MH algorithm: | ||

<math>r(x,y_{1})=min(\frac{f(y_{1})}{f(x)},1)=1</math> since <math>f(y_{1})>f(x)</math>. Thus, any generated value with a higher density will be accepted. | <math>r(x,y_{1})=min(\frac{f(y_{1})}{f(x)},1)=1</math> since <math>\!f(y_{1})>f(x)</math>. Thus, any generated value with a higher density will be accepted. | ||

<math>r(x,y_{2})=\frac{f(y_{2})}{f(x)}</math>. The lower the density of <math>y_{2}</math> is, the less chance it will have of being accepted. | <math>r(x,y_{2})=\frac{f(y_{2})}{f(x)}</math>. The lower the density of <math>\!y_{2}</math> is, the less chance it will have of being accepted. | ||
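The random-walk view can be sketched in Matlab as follows. This is a minimal illustration only, assuming a standard normal target (known up to a constant) and a normal random-walk proposal with scale b = 2; the target, the value of b, the chain length and the variable names are assumptions chosen for the example.

```matlab
f = @(x) exp(-x.^2 / 2);     % target density up to a constant (standard normal)
n = 20000;                   % chain length (assumed)
b = 2;                       % proposal scale (assumed)
x = zeros(1, n);             % chain starts at 0
accepted = 0;
for i = 2:n
    y = x(i-1) + b * randn;              % symmetric proposal, q(y|x) = q(x|y)
    r = min(f(y) / f(x(i-1)), 1);        % acceptance probability r(x,y)
    if rand < r
        x(i) = y;                        % accept the proposed point
        accepted = accepted + 1;
    else
        x(i) = x(i-1);                   % reject and stay at the current point
    end
end
fprintf('acceptance rate: %.2f\n', accepted / (n - 1));
fprintf('sample mean %.3f, sample variance %.3f\n', mean(x), var(x));
```

Moves to a denser region are always accepted because the ratio exceeds 1, while moves to a less dense region are accepted only with probability equal to the density ratio.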

A certain class of proposal distributions can be written in the form: | A certain class of proposal distributions can be written in the form: | ||

| Line 1,713: | Line 1,722: | ||

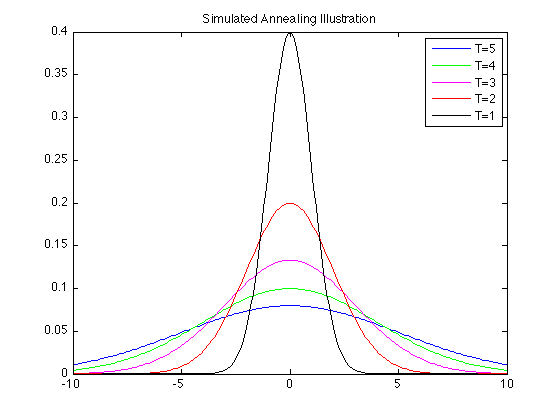

====Simulated Annealing==== | ====Simulated Annealing==== | ||

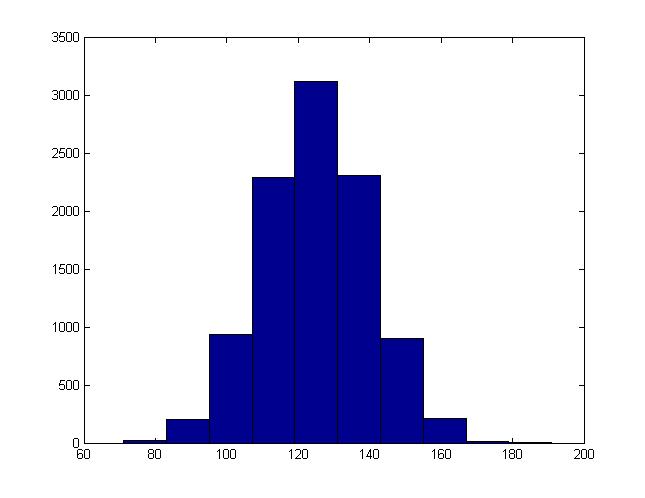

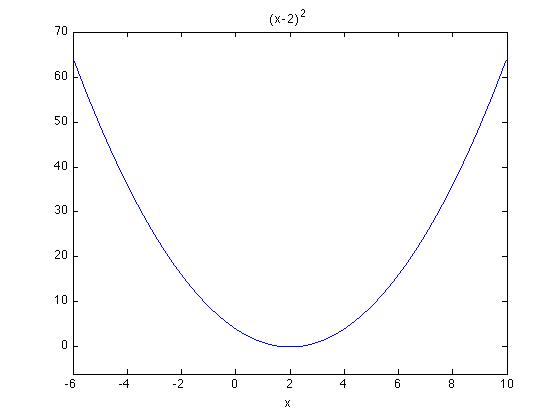

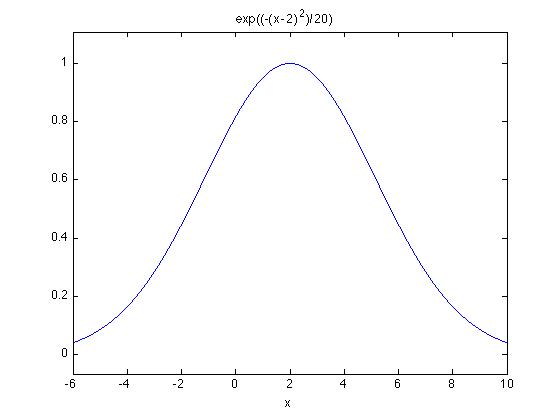

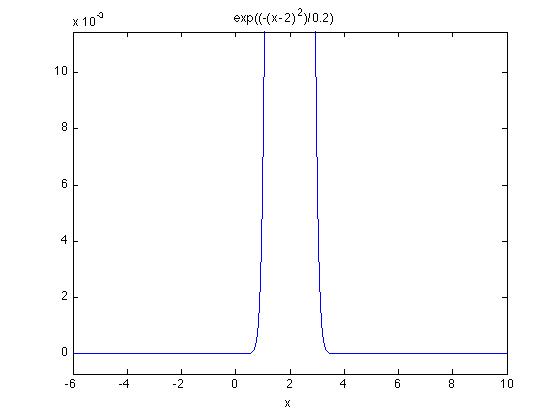

Metropolis-Hastings is very useful in simulation methods for solving optimization problems. One such application is simulated annealing, which addresses the problems of minimizing a function <math>h(x)</math>. This method will not always produce the global solution, but it is intuitively simple and easy to implement. | Metropolis-Hastings is very useful in simulation methods for solving optimization problems. One such application is simulated annealing, which addresses the problems of minimizing a function <math>\!h(x)</math>. This method will not always produce the global solution, but it is intuitively simple and easy to implement. | ||

Consider <math>e^{\frac{-h(x)}{T}}</math>, maximizing this expression is equivalent to minimizing <math>h(x)</math>. Suppose <math>\mu</math> is the maximizing value and <math>h(x)=(x-\mu)^2</math>, then the maximization function is a gaussian distribution <math>e^{-\frac{(x-\mu)^2}{T}}</math>. When many samples are taken from this distribution, the mean will converge to the desired maximizing value. The annealing comes into play by lowering T (the temperature) as the sampling progresses, making the distribution narrower. The steps of simulated annealing are outlined below: | Consider <math>e^{\frac{-h(x)}{T}}</math>, maximizing this expression is equivalent to minimizing <math>\!h(x)</math>. Suppose <math>\mu</math> is the maximizing value and <math>h(x)=(x-\mu)^2</math>, then the maximization function is a gaussian distribution <math>\!e^{-\frac{(x-\mu)^2}{T}}</math>. When many samples are taken from this distribution, the mean will converge to the desired maximizing value. The annealing comes into play by lowering ''T'' (the temperature) as the sampling progresses, making the distribution narrower. The steps of simulated annealing are outlined below: | ||

1. start with a random <math>x</math> and set T to a large number | 1. start with a random <math>\!x</math> and set ''T'' to a large number | ||

2. generate <math>y</math> from a proposal distribution <math>q(y|x)</math>, which should be symmetric | 2. generate <math>\!y</math> from a proposal distribution <math>\!q(y|x)</math>, which should be symmetric | ||

3. accept <math>y</math> with probability <math>min(\frac{f(y)}{f(x)},1)</math> | 3. accept <math>\!y</math> with probability <math>min(\frac{f(y)}{f(x)},1)</math> . (Note: <math>f(x) = e^{\frac{-h(x)}{T}}</math>) | ||

4. decrease T, and then go to step 2 | 4. decrease ''T'', and then go to step 2 | ||

To decrease T in Step 4, a variety of functions can be used. For example, a common temperature function used is with geometric decline, given by an initial temperature <math> | To decrease ''T'' in Step 4, a variety of functions can be used. For example, a common choice is geometric decline, given by an initial temperature <math>\! T_0 </math>, final temperature <math>\! T_f </math>, the number of steps <math>\! n </math>, and the temperature function <math>\ T(t) = T_0(\frac{T_f}{T_0})^{t/n} </math> | ||
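The four steps with a geometric temperature decline can be sketched as below. This is only an illustration under stated assumptions: the objective h(x) = (x-2)^2, the temperatures, the number of steps and the standard normal proposal are all hypothetical choices, not values from the lecture.

```matlab
h  = @(x) (x - 2).^2;        % hypothetical objective, minimized at x = 2
T0 = 10;                     % initial temperature (assumed)
Tf = 0.01;                   % final temperature (assumed)
n  = 5000;                   % number of steps (assumed)

x = 10 * randn;              % step 1: random starting point
for t = 1:n
    T = T0 * (Tf / T0)^(t / n);          % step 4: geometric temperature decline
    y = x + randn;                       % step 2: symmetric proposal
    r = min(exp((h(x) - h(y)) / T), 1);  % step 3: f(y)/f(x) with f = exp(-h/T)
    if rand < r
        x = y;                           % accept the proposed point
    end
end
fprintf('final x = %.3f (the minimizer of h is 2)\n', x);
```

At t = n the temperature equals the final temperature, so late in the run almost only downhill moves are accepted.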

The following plot and Matlab code illustrate the simulated annealing procedure as temperature ''T'', the variance, decreases for a Gaussian distribution with zero mean. Starting off with a large value for the temperature ''T'' allows the Metropolis-Hastings component of the procedure to capture the mean, before gradually decreasing the temperature ''T'' in order to converge to the mean. | The following plot and Matlab code illustrate the simulated annealing procedure as temperature ''T'', the variance, decreases for a Gaussian distribution with zero mean. Starting off with a large value for the temperature ''T'' allows the Metropolis-Hastings component of the procedure to capture the mean, before gradually decreasing the temperature ''T'' in order to converge to the mean. | ||

| Line 1,752: | Line 1,761: | ||

continued from previous lecture... | continued from previous lecture... | ||

Recall <math>\ r(x,y)=min | Recall <math>\ r(x,y)=min(\frac{f(y)}{f(x)},1)</math> where <math> \frac{f(y)}{f(x)} = \frac{e^{\frac{-h(y)}{T}}}{e^{\frac{-h(x)}{T}}} = e^{\frac{h(x)-h(y)}{T}}</math> where <math>\ r(x,y) </math> represents the probability of accepting <math>\ y</math>. | ||

We will now look at a couple cases where <math> \displaystyle h(y) > h(x) </math> or <math> \displaystyle h(y) < h(x) </math>, and explore whether to accept or reject <math>\ y </math>. | We will now look at a couple cases where <math> \displaystyle h(y) > h(x) </math> or <math> \displaystyle h(y) < h(x) </math>, and explore whether to accept or reject <math>\ y </math>. | ||

| Line 1,809: | Line 1,818: | ||

===Gibbs Sampling=== | ===Gibbs Sampling=== | ||

Gibbs sampling is another Markov chain Monte Carlo method, similar to Metropolis-Hastings. There are two main differences between Metropolis-Hastings and Gibbs sampling. First, the candidate state is always accepted as the next state in Gibbs sampling. Second, it is assumed that the full conditional distributions are known, i.e. <math>P(X_i=x|X_j=x_j, \forall j\neq i)</math> for all <math>\displaystyle i</math>. The idea is that it is easier to sample from conditional distributions which are sets of one dimensional distributions than to sample from a joint distribution which is a higher dimensional distribution. Gibbs is a way to turn the joint distribution into multiple conditional | Gibbs sampling is another Markov chain Monte Carlo method, similar to Metropolis-Hastings. There are two main differences between Metropolis-Hastings and Gibbs sampling. First, the candidate state is always accepted as the next state in Gibbs sampling. Second, it is assumed that the full conditional distributions are known, i.e. <math>P(X_i=x|X_j=x_j, \forall j\neq i)</math> for all <math>\displaystyle i</math>. The idea is that it is easier to sample from conditional distributions which are sets of one dimensional distributions than to sample from a joint distribution which is a higher dimensional distribution. Gibbs is a way to turn the joint distribution into multiple conditional distributions. | ||

<b>Advantages:</b><br /> | <b>Advantages:</b><br /> | ||

- | - Sampling from conditional distributions may be easier than sampling from joint distributions | ||

<b>Disadvantages:</b><br /> | <b>Disadvantages:</b><br /> | ||

- | - We do not necessarily know the conditional distributions | ||

For example, if we want to sample from <math>\, f_{X,Y}(x,y)</math>, we need to know how to sample from <math>\, f_{X|Y}(x|y)</math> and <math>\, f_{Y|X}(y|x)</math>. Suppose the chain starts with <math>\,(X_0,Y_0)</math> and <math>(X_1,Y_1), \dots , (X_n,Y_n)</math> have been sampled. Then, | For example, if we want to sample from <math>\, f_{X,Y}(x,y)</math>, we need to know how to sample from <math>\, f_{X|Y}(x|y)</math> and <math>\, f_{Y|X}(y|x)</math>. Suppose the chain starts with <math>\,(X_0,Y_0)</math> and <math>(X_1,Y_1), \dots , (X_n,Y_n)</math> have been sampled. Then, | ||

| Line 1,872: | Line 1,881: | ||

<math>\, f(x_2|x_1)=N(\mu_2 + \rho(x_1-\mu_1), 1-\rho^2)</math> | <math>\, f(x_2|x_1)=N(\mu_2 + \rho(x_1-\mu_1), 1-\rho^2)</math> | ||

In general, if the joint distribution has parameters | |||

<math>\mu = \begin{bmatrix}\mu_1 \\ \mu_2 \end{bmatrix}</math> and <math>\Sigma = \begin{bmatrix} \Sigma _{1,1} && \Sigma _{1,2} \\ \Sigma _{2,1} && \Sigma _{2,2} \end{bmatrix}</math> | <math>\mu = \begin{bmatrix}\mu_1 \\ \mu_2 \end{bmatrix}</math> and <math>\Sigma = \begin{bmatrix} \Sigma _{1,1} && \Sigma _{1,2} \\ \Sigma _{2,1} && \Sigma _{2,2} \end{bmatrix}</math> | ||

then the conditional distribution <math>\, f(x_1|x_2)</math> has mean <math>\, \mu_1 + \Sigma _{1,2}(\Sigma _{ | then the conditional distribution <math>\, f(x_1|x_2)</math> has mean <math>\, \mu_{1|2} = \mu_1 + \Sigma _{1,2}(\Sigma _{2,2})^{-1}(x_2-\mu_2)</math> and variance <math>\, \Sigma _{1|2} = \Sigma _{1,1}-\Sigma _{1,2}(\Sigma _{2,2})^{-1}\Sigma _{2,1}</math>. | ||
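As a quick check, the general formulas reduce to the <math>\rho</math> form when both variances are 1. The Matlab sketch below evaluates both forms for <math>\rho = 0.9</math>; the conditioning value x2 = 2.5 is a hypothetical number chosen only for illustration.

```matlab
mu    = [1; 2];
Sigma = [1 0.9; 0.9 1];      % unit variances, correlation rho = 0.9
x2    = 2.5;                 % hypothetical observed value of X_2

% General formulas for the conditional mean and variance of X_1 given X_2 = x2.
condMean = mu(1) + Sigma(1,2) / Sigma(2,2) * (x2 - mu(2));
condVar  = Sigma(1,1) - Sigma(1,2) / Sigma(2,2) * Sigma(2,1);

% The same quantities written in terms of rho.
rho = Sigma(1,2) / sqrt(Sigma(1,1) * Sigma(2,2));
fprintf('conditional mean %.4f (rho form: %.4f)\n', condMean, mu(1) + rho * (x2 - mu(2)));
fprintf('conditional var  %.4f (rho form: %.4f)\n', condVar, 1 - rho^2);
```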

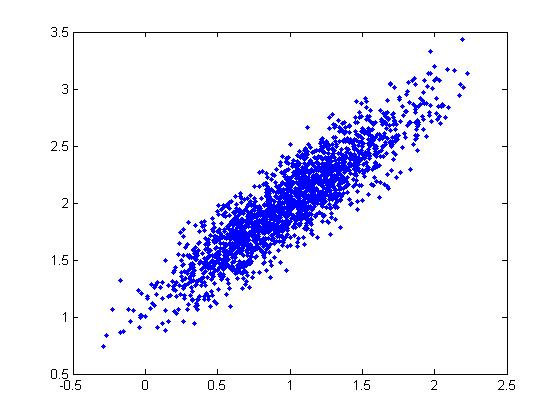

Thus, the algorithm for Gibbs sampling is: | |||

*1) Set i = 1 | |||

*2) Draw <math>X_1^{(i)}</math> ~ <math>N(\mu_1 + \rho(X_2^{(i-1)}-\mu_2), 1-\rho^2)</math> | |||

*3) Draw <math>X_2^{(i)}</math> ~ <math>N(\mu_2 + \rho(X_1^{(i)}-\mu_1), 1-\rho^2)</math> | |||

*4) Set <math>X^{(i)} = [X_1^{(i)}, X_2^{(i)}]^T</math> | |||

*5) Increment i, return to step 2. | |||

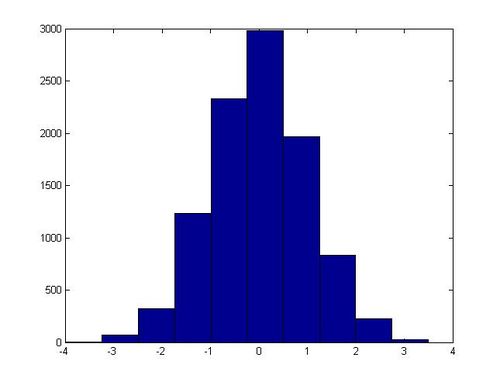

The Matlab code implementation of this algorithm is as follows:<br> | |||

mu = [1; 2]; | |||

sigma = [1 0.9; 0.9 1]; | |||

X(:,1) = [1; 2]; | |||

r = sqrt(1 - 0.9^2); % conditional standard deviation sqrt(1 - rho^2), since randn has unit variance | |||

for i = 2:2000 | |||

X(1,i) = 1 + 0.9*(X(2,i-1) - mu(2)) + r*randn; | |||

X(2,i) = 2 + 0.9*(X(1,i) - mu(1)) + r*randn; | |||

end | |||

plot(X(1,:),X(2,:),'.') | |||

Which gives the following plot: | |||

[[File:Gibbs_Example.jpg]] | |||

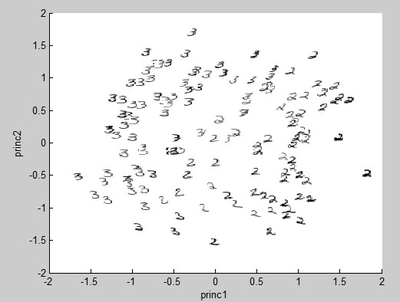

=='''Principal Component Analysis (PCA) - November 8, 2011'''== | =='''Principal Component Analysis (PCA) - November 8, 2011'''== | ||

| Line 2,045: | Line 2,077: | ||

So <math>\displaystyle Q_{ij}=(X_i)(X_j)^T=[X X^T]_{ij} </math>. | So <math>\displaystyle Q_{ij}=(X_i)(X_j)^T=[X X^T]_{ij} </math>. | ||

Now the principal components of <math>\displaystyle X</math> are the eigenvectors of <math>\displaystyle Q</math>. If we do the singular value decomposition, setting [u s v] = svd(Q), then the columns of u are eigenvectors of <math>\displaystyle Q=X X^T</math>. | Now the principal components of <math>\displaystyle X</math> are the eigenvectors of <math>\displaystyle Q</math>. If we do the singular value decomposition, setting <math>\ [u\ s\ v] = svd(Q) </math>, then the columns of <math>\ u </math> are eigenvectors of <math>\displaystyle Q=X X^T</math>. | ||

Let <math>\displaystyle u</math> be a <math>\displaystyle d\times p</math> matrix composed of the | Let <math>\displaystyle u</math> be a <math>\displaystyle d\times p</math> matrix, where <math>\displaystyle p < d</math>, that is composed of the <math>\ p </math> eigenvectors of <math>\displaystyle Q</math> corresponding to the largest eigenvalues. | ||

To map the data in a lower dimensional space we project <math>\displaystyle X</math> onto the p dimensional subspace defined by the columns of <math>\displaystyle u</math>, which are the principal components of <math>\displaystyle X</math>. | To map the data in a lower dimensional space we project <math>\displaystyle X</math> onto the <math>\ p </math> dimensional subspace defined by the columns of <math>\displaystyle u</math>, which are the first <math>\ p </math> principal components of <math>\displaystyle X</math>. | ||

Let <math>\displaystyle Y_{p\times n}={u^T}_{p\times d} X_{d\times n}</math>. So <math>\displaystyle Y_{p\times n}</math> is a lower dimensional approximation of our original data <math>\displaystyle X_{d\times n}</math> | Let <math>\displaystyle Y_{p\times n}={u^T}_{p\times d} X_{d\times n}</math>. So <math>\displaystyle Y_{p\times n}</math> is a lower dimensional approximation of our original data <math>\displaystyle X_{d\times n}</math> | ||

We can also approximately reconstruct the original data using the dimension-reduced data. However, we will lose some information because when we map those points into lower dimension, we throw away the last (d - p) eigenvectors which contain some of the original information. | We can also approximately reconstruct the original data using the dimension-reduced data. However, we will lose some information because when we map those points into lower dimension, we throw away the last <math>\ (d - p) </math> eigenvectors which contain some of the original information. | ||

<math>\displaystyle X'_{d\times n} = {u}_{d\times p} Y_{p\times n}</math>, where <math>\displaystyle X'_{d\times n}</math> is an approximate reconstruction of <math>\displaystyle X_{d\times n}</math>. | <math>\displaystyle X'_{d\times n} = {u}_{d\times p} Y_{p\times n}</math>, where <math>\displaystyle X'_{d\times n}</math> is an approximate reconstruction of <math>\displaystyle X_{d\times n}</math>. | ||
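The mapping and reconstruction steps can be sketched in Matlab as follows. The data here are synthetic, and centering each row of X before forming Q is an assumption made for the example; the sizes d, n and p are arbitrary.

```matlab
d = 5; n = 200; p = 2;                      % illustrative sizes
X = randn(d, n);
X(1,:) = 5 * X(1,:);                        % put most of the variance
X(2,:) = 3 * X(2,:);                        % in two directions (synthetic data)
X = X - repmat(mean(X, 2), 1, n);           % center each row (assumed preprocessing)

Q = X * X';
[U, S, V] = svd(Q);          % columns of U: eigenvectors of Q, eigenvalues in decreasing order
u = U(:, 1:p);               % d x p matrix of the first p principal components

Y    = u' * X;               % p x n lower dimensional representation
Xrec = u * Y;                % d x n approximate reconstruction

relErr = norm(X - Xrec, 'fro') / norm(X, 'fro');
fprintf('relative reconstruction error with p = %d: %.3f\n', p, relErr);
```

The squared reconstruction error equals the sum of the discarded eigenvalues of Q, which is why dropping the last d - p eigenvectors loses the least information.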

| Line 2,100: | Line 2,132: | ||

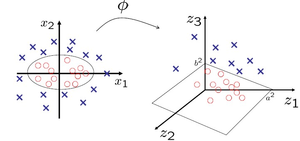

===Introduction to Kernel Function=== | ===Introduction to Kernel Function=== | ||

PCA is useful when those data points spread in or close to a plane. This means that PCA is powerful when dealing with linear problems. But when data points spread in a manifold space, PCA is hard to implement. But there is a solution to this problem - we can use a method to change the nonlinear | PCA is useful when those data points spread in or close to a plane. This means that PCA is powerful when dealing with linear problems. But when data points spread in a manifold space, PCA is hard to implement. But there is a solution to this problem - we can use a method to change the linear algorithm into a nonlinear one. This is called the "Kernel Trick". | ||

'''An intuitive example''' | '''An intuitive example''' | ||

| Line 2,106: | Line 2,138: | ||

[[File:Kernel trick.png|400px|300px]] | [[File:Kernel trick.png|400px|300px]] | ||

From the picture, we can see the red | From the picture, we can see the red circles are in the middle of the blue Xs. However, it is hard to separate those two classes by using any linear function (lines in the two dimensional space). But we can use a Kernel function <math>\ \phi</math> to project the points onto a three dimensional space. Once the blue Xs and red circles have been mapped to a three dimensional space in this way it is easy to separate them using a linear function. | ||

For more details about this trick, please see http://omega.albany.edu:8008/machine-learning-dir/notes-dir/ker1/ker1.pdf | For more details about this trick, please see http://omega.albany.edu:8008/machine-learning-dir/notes-dir/ker1/ker1.pdf | ||

The significance of a Kernel Function, <math>\displaystyle \phi</math>, is that we can implicitly change the data into a high dimension. | |||

Let's look at how this is possible: | Let's look at how this is possible: | ||

| Line 2,166: | Line 2,198: | ||

==''' Kernel PCA - November 15, 2011'''== | ==''' Kernel PCA - November 15, 2011'''== | ||

PCA doesn't work well when the directions of variation in our data | PCA doesn't work well when the directions of variation in our data are nonlinear. Especially in the case where the dataset has very high dimensions, the dataset lies near or on a nonlinear manifold which prevents PCA from determining principal components correctly. To deal with this problem, we apply kernels to PCA. By transforming the original data with a nonlinear mapping, we can obtain much better principal components. | ||

First we look at the algorithm for PCA and see how we can kernelize PCA: | First we look at the algorithm for PCA and see how we can kernelize PCA: | ||

| Line 2,174: | Line 2,206: | ||

Find eigenvectors of <math> \ XX^T </math>, call it <math>\ U </math> | Find eigenvectors of <math> \ XX^T </math>, call it <math>\ U </math> | ||

<math> | to map data points to a lower dimensional space:<br> | ||

<math>\ Y = U^{T}X</math><br> | |||

to reconstruct points:<br> | |||

<math>\ \hat{X} = UY</math><br> | |||

to map a new point:<br> | |||

<math>\ y = U^{T}x</math><br> | |||

to reconstruct point:<br> | |||

</math> | <math>\ \hat{x} = Uy</math><br> | ||

== Dual PCA == | |||

Consider the singular value decomposition of n-by-m matrix X: | |||

<math> | <math> | ||

| Line 2,192: | Line 2,226: | ||

</math> | </math> | ||

The columns of U are the eigenvectors of <math>XX^T</math>. | Where: | ||

* The columns of U are the eigenvectors of <math>XX^T</math> corresponding to the eigenvalues in decreasing order. | |||

The columns of V are the eigenvectors of <math>X^T{X}</math>. | * The columns of V are the eigenvectors of <math>X^T{X}</math>. | ||

* The diagonal matrix <math>\Sigma</math> contains the square roots of the eigenvalues of <math>XX^T</math> in decreasing order. | |||

Now we want to kernelize this classical version of PCA. We would like to express everything based on V which can be kernelized. This is called Dual PCA. | Now we want to kernelize this classical version of PCA. We would like to express everything based on V which can be kernelized. The reason why we want to do this is because if n >> m (i.e. the feature space is much larger than the number of sample points) then the original PCA algorithm would be impractical. We want an algorithm that depends less on n. This is called Dual PCA. | ||

U in terms of V: | U in terms of V: | ||

| Line 2,225: | Line 2,260: | ||

\begin{align} | \begin{align} | ||

\hat{X}&=UY \\ | \hat{X}&=UY \\ | ||

&=XV\Sigma^{-1}\Sigma{V^T} \\ | |||

&= XVV^T \\ | |||

&= X | |||

\end{align} | \end{align} | ||

</math> | </math> | ||
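The dual PCA identities can be checked numerically. The Matlab sketch below uses a random n-by-m matrix with n much larger than m (the sizes and data are illustrative assumptions) and verifies that U can be recovered from V, and that the training data are reconstructed exactly.

```matlab
n = 50; m = 8;                       % n features, m sample points (n >> m)
X = randn(n, m);                     % synthetic data
[U, S, V] = svd(X, 'econ');          % X = U*S*V', with U n x m and S, V m x m

Udual = X * V / S;                   % U = X V Sigma^{-1}
Y     = S * V';                      % Y = U' X = Sigma V'
Xhat  = Udual * Y;                   % X V Sigma^{-1} Sigma V' = X

fprintf('max |U - X V S^-1| = %g\n', max(max(abs(U - Udual))));
fprintf('max |X - Xhat|     = %g\n', max(max(abs(X - Xhat))));
```

Here V and the singular values only require the m-by-m matrix of inner products between sample points, which is the part that can later be replaced by a kernel matrix.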

| Line 2,247: | Line 2,283: | ||

\begin{align} | \begin{align} | ||

\hat{x} &= Uy \\ | \hat{x} &= Uy \\ | ||

& = UU^Tx \\ | |||

& =XV\Sigma^{-1}\Sigma^{-1}V^T{X^T}x \\ | & =XV\Sigma^{-1}\Sigma^{-1}V^T{X^T}x \\ | ||

&= XV\Sigma^{-2}V^T{X^T}x | &= XV\Sigma^{-2}V^T{X^T}x | ||

| Line 2,283: | Line 2,320: | ||

We define: | We define: | ||

:<math>\tilde{\phi}(x) = \phi(x) - E_x[\phi(x)]</math> | :<math>\tilde{\phi}(x) = \phi(x) - E_x[\phi(x)]</math> | ||

And thus, our kernel function becomes: | And thus, our kernel function becomes: | ||

:<math>\tilde{k}(\mathbf{x},\mathbf{y}) = \tilde{\phi}(\mathbf{x})^T\tilde{\phi}(\mathbf{y})</math> | :<math>\tilde{k}(\mathbf{x},\mathbf{y}) = \tilde{\phi}(\mathbf{x})^T\tilde{\phi}(\mathbf{y})</math> | ||

:<math>\ | :::<math>\! = (\phi(x) - E_x[\phi(x)])^T(\phi(y) - E_y[\phi(y)])</math> | ||

:::<math>\! = \phi(x)^T\phi(y) - E_x[\phi(x)^T]\phi(y) - \phi(x)^TE_y[\phi(y)] + E_x[\phi(x)^T]E_y[\phi(y)]</math> | |||

:::<math>\! = k(\mathbf{x},\mathbf{y}) - E_x[k(\mathbf{x},\mathbf{y})] - E_y[k(\mathbf{x},\mathbf{y})] + E_x[E_y[k(\mathbf{x},\mathbf{y})]]</math> | |||

In practice, we would do the following: | In practice, we would do the following: | ||

| Line 2,301: | Line 2,345: | ||

'''Note:''' On the right hand side, the second item is the average of the columns of <math>K</math>, the third item is the average of the rows of <math>K</math>, and the last item is the average of all the entries of <math>K</math>. | '''Note:''' On the right hand side, the second item is the average of the columns of <math>K</math>, the third item is the average of the rows of <math>K</math>, and the last item is the average of all the entries of <math>K</math>. | ||

As a result, they are vectors of dimension | As a result, they are vectors of dimension <math>1\times n</math>, <math>n\times 1</math>, and <math>1\times 1</math> (a scalar) respectively. To do the actual arithmetic, all matrix dimensions must match. In MATLAB, this is accomplished through the repmat() function. | ||

5. Find the eigenvectors of <math>\tilde{K}</math>. Take the first few and combine them in a matrix <math>V</math>, and use that as the <math>V</math> in the dual PCA mapping and reconstruction steps shown above. | 5. Find the eigenvectors of <math>\tilde{K}</math>. Take the first few and combine them in a matrix <math>V</math>, and use that as the <math>V</math> in the dual PCA mapping and reconstruction steps shown above. | ||
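The centering of the kernel matrix with repmat() can be sketched as follows. A linear kernel on synthetic data is assumed here purely so that the result can be compared against explicitly centered features; the variable names are illustrative.

```matlab
d = 3; n = 6;                        % illustrative sizes
X = randn(d, n);                     % columns are data points (synthetic)
K = X' * X;                          % linear kernel, chosen so the result can be checked

colMean = mean(K, 1);                % 1 x n: average of each column of K
rowMean = mean(K, 2);                % n x 1: average of each row of K
allMean = mean(K(:));                % scalar: average of all entries of K

% Expand the averages to n x n so that all matrix dimensions match.
Kc = K - repmat(colMean, n, 1) - repmat(rowMean, 1, n) + allMean;

% For a linear kernel, centering K is the same as centering the data first.
Xc = X - repmat(mean(X, 2), 1, n);
fprintf('max |Kc - Xc''*Xc| = %g\n', max(max(abs(Kc - Xc' * Xc))));
```

For a nonlinear kernel the centered features are not available explicitly, which is why the centering has to be done on K itself.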

=='''Multidimensional Scaling (MDS)'''== | =='''Multidimensional Scaling (MDS)'''== | ||

Most of the common linear and polynomial kernels do not give very good results in | Most of the common linear and polynomial kernels do not give very good results in practice. For real data, there is an alternate approach that can yield much better results but, as we will see later, is equivalent to a kernel PCA process. | ||

Multidimensional Scaling (MDS) is an algorithm, much like PCA, that maps the original high dimensional space to a lower dimensional space. | Multidimensional Scaling (MDS) is an algorithm, much like PCA, that maps the original high dimensional space to a lower dimensional space. | ||

===Introduction to MDS=== | ===Introduction to MDS=== | ||

The main purpose of MDS is to try to | The main purpose of MDS is to try to map high dimensional data to a lower dimensional space while preserving the pairwise distances between points. | ||

i.e. MDS addresses the problem of constructing a configuration of <math> \ n </math> points in Euclidean space by using information about the distance between the <math> \ n </math> patterns. | i.e. MDS addresses the problem of constructing a configuration of <math> \ n </math> points in Euclidean space by using information about the distance between the <math> \ n </math> patterns. | ||

It may not be possible to preserve the exact | It may not be possible to preserve the exact distances between points, so in this case we need to find a representation that is as close as possible. | ||

'''Definition''': A <math> \ n\times n </math> matrix <math> \ D</math> is called the '''distance matrix''' if: | '''Definition''': A <math> \ n\times n </math> matrix <math> \ D</math> is called the '''distance matrix''' if: | ||

| Line 2,325: | Line 2,369: | ||

===Metric MDS=== | ===Metric MDS=== | ||

One of the possible methods to preserve the | One of the possible methods to preserve the pairwise distance is to use Metric MDS, which attempts to minimize: | ||

<math>\min_Y \sum_{i=1}^{n}\sum_{j=1}^{n}(d_{ij}^{(X)} - d_{ij}^{(Y)})^2 </math> | <math>\min_Y \sum_{i=1}^{n}\sum_{j=1}^{n}(d_{ij}^{(X)} - d_{ij}^{(Y)})^2 </math> | ||

where <math>d_{ij}^{(X)} = ||x_i-x_j|| </math> and <math>d_{ij}^{(Y)} = ||y_i-y_j|| </math> | where <math>d_{ij}^{(X)} = ||x_i-x_j||^2 </math> and <math>d_{ij}^{(Y)} = ||y_i-y_j||^2 </math> | ||

==''' Continued with MDS, Isomap and Classification - November 22, 2011'''== | ==''' Continued with MDS, Isomap and Classification - November 22, 2011'''== | ||

<br />The distance matrix can be converted to a kernel matrix of inner products <math>X^ | <br />The distance matrix can be converted to a kernel matrix of inner products <math>\ X^TX</math> by <math>\ X^{T}X=-\frac{1}{2}HD^{(X)}H </math>,<br /> | ||

where <math>H=I-\frac{1}{n}ee^{T}</math> and <math>\ e </math> is a column vector of all ones. | where <math>H=I-\frac{1}{n}ee^{T}</math> and <math>\ e </math> is a column vector of all ones. | ||
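The conversion from distances to inner products can be verified numerically. The Matlab sketch below assumes that the entries of the distance matrix are squared Euclidean distances and that the points are centered (both are assumptions of this example), and uses synthetic data.

```matlab
d = 3; n = 7;                         % illustrative sizes
X = randn(d, n);                      % columns are points (synthetic)
X = X - repmat(mean(X, 2), 1, n);     % center the points (assumed)

G = X' * X;                           % matrix of inner products
g = diag(G);
D = repmat(g, 1, n) + repmat(g', n, 1) - 2 * G;   % squared distances ||x_i - x_j||^2

e = ones(n, 1);
H = eye(n) - (1 / n) * (e * e');      % centering matrix
K = -0.5 * H * D * H;                 % kernel matrix recovered from distances

fprintf('max |K - X''*X| = %g\n', max(max(abs(K - G))));
fprintf('smallest eigenvalue of K: %g\n', min(eig((K + K') / 2)));
```

The recovered K matches the inner product matrix and has no negative eigenvalues beyond rounding error, as expected for Euclidean distances.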

| Line 2,359: | Line 2,403: | ||

</math> | </math> | ||

Now <math>\min_Y \sum_{i=1}^{n}\sum_{j=1}^{n}(d_{ij}^{(X)} - d_{ij}^{(Y)})^2 </math> can be reduced to <math>\min_Y \sum_{i=1}^{n}\sum_{j=1}^{n}(x_{i}^{T}x_{j}-y_{i}^{T}y_{j})^{2}</math> <math>\Leftrightarrow </math><math>\min_Y \ Tr(X^{T}X-Y^{T}Y)^{2}</math>. | |||

So far we have rewritten the objective function, but we must remember that our definition of <math>\ D </math> introduces constraints on its components. We must ensure that these constraints are respected in the way we have expressed the minimization problem with traces. Luckily, we can capture these constraints with a single requirement thanks to the following theorem: | |||

<br />''' Theorem''': Let <math>\ D</math> be a distance matrix and <math>\ K=X^{T}X=-\frac{1}{2}HD^{(X)}H </math>. Then <math>\ D</math> is Euclidean if and only if <math>\ K</math> is a positive semi-definite matrix. | |||

< | Therefore, to complete the rewriting of our original minimization problem from norms to traces, it suffices to impose that <math>\ K</math> be <math>\ p.s.d.</math>, as this guarantees that <math>\ D</math> is a distance matrix and the components of <math>\ X^{T}X </math> and <math>\ Y^{T}Y</math> satisfy the original constraints. | ||

<br /> | <br />Proceeding with singular value decomposition, <math>\ X^{T}X</math> and <math>\ Y^{T}Y</math> can be decomposed as: | ||

<br /><math>\ X^{T}X=V\Lambda V^{T}</math><br /><math>Y^{T}Y=Q\hat{\Lambda Q^{T | <br /><math>\ X^{T}X=V\Lambda V^{T}</math><br /><math>Y^{T}Y=Q\hat{\Lambda} Q^{T}</math> | ||

<br />Since <math>Y^{T}Y</math> is <math>p.s.d.</math>,<math>\hat{\Lambda} </math> has no negative value and therefore: <math> Y=\hat{\Lambda}^{\frac{1}{2}}Q^{T}</math>. | <br />Since <math>\ Y^{T}Y</math> is <math>\ p.s.d.</math> , <math>\hat{\Lambda} </math> has no negative value and therefore: <math> Y=\hat{\Lambda}^{\frac{1}{2}}Q^{T}</math>. | ||

<br />The above definitions help rewrite the cost function as: | <br />The above definitions help rewrite the cost function as: | ||

| Line 2,375: | Line 2,421: | ||

<br /><math>\min_{Q,\hat{\Lambda}}Tr(V\Lambda V^{T}-Q\hat{\Lambda}Q^{T})^{2}</math> | <br /><math>\min_{Q,\hat{\Lambda}}Tr(V\Lambda V^{T}-Q\hat{\Lambda}Q^{T})^{2}</math> | ||

<br />Multiply <math>V^{T}</math> on the left and <math>V</math> on the right, we will get | <br />Multiply <math>\ V^{T}</math> on the left and <math>\ V</math> on the right, we will get | ||

<br /><math>\min_{Q,\hat{\Lambda}}(\Lambda-V^{T}Q\hat{\Lambda Q^{T}V | <br /><math>\min_{Q,\hat{\Lambda}}Tr(\Lambda-V^{T}Q\hat{\Lambda} Q^{T}V)^{2}</math> | ||

Then let <math>\ G=V^{T}Q</math> | Then let <math>\ G=V^{T}Q</math> | ||

| Line 2,384: | Line 2,430: | ||

<br /><math>\min_{Q,\hat{\Lambda}}Tr(\Lambda-G\hat{\Lambda}G^{T})^{2}</math> | <br /><math>\min_{Q,\hat{\Lambda}}Tr(\Lambda-G\hat{\Lambda}G^{T})^{2}</math> | ||

<math>=</math><math>\min_{ | <math>=</math><math>\min_{G,\hat{\Lambda}}Tr(\Lambda^{2}+G\hat{\Lambda}G^{T}G\hat{\Lambda}G^{T}-2\Lambda G\hat{\Lambda}G^{T})</math> | ||

<br />For a fixed <math>\hat{\Lambda}</math> we can minimize for G. The result is that | <br />For a fixed <math>\hat{\Lambda}</math> we can minimize for G. The result is that <math>\ G=I</math>. Then we can simplify the target function: | ||

Then we can simplify target function | |||

| Line 2,398: | Line 2,441: | ||

Obviously, <math>\hat{\Lambda}=\Lambda</math>. | Obviously, <math>\hat{\Lambda}=\Lambda</math>. | ||

If <math>X</math> is <math>d\times n</math> matrix ,<math>(d<n)</math>, the rank of <math>X</math> is no greater | If <math>\ X</math> is a <math>d\times n</math> matrix with <math>\ d<n</math>, the rank of <math>\ X</math> is no greater than <math>\ d</math>. Then the rank of <math>X^{T}X_{(n\times n)}</math> is no greater than <math>\ d</math> since <math>\ rank(X^{T}X) = rank(X) </math>. The dimension of <math>\ Y</math> is smaller than that of <math>\ X</math>, so the rank of <math>\ Y^{T}Y</math> is smaller than <math>\ d</math>. | ||

Since we want to do dimensionality reduction and make <math>\Lambda</math> and <math>\hat{\Lambda}</math> as similar as possible we can let <math>\hat{\Lambda}</math> be the top <math>d</math> diagonal elements of <math>\Lambda</math>. | Since we want to do dimensionality reduction and make <math>\ \Lambda</math> and <math>\hat{\Lambda}</math> as similar as possible, we can let <math>\hat{\Lambda}</math> be the top <math>\ d</math> diagonal elements of <math>\ \Lambda</math>. | ||

We also have <math>G=V^{T}Q</math>, obviously <math>Q=V</math>. | We also have <math>\ G = V^{T}Q </math>; since <math>\ G = I</math> and <math>\ V</math> is orthogonal, <math>\ Q=V</math>. | ||

The solution is: | The solution is: | ||

| Line 2,408: | Line 2,451: | ||

<br /><math>Y=\Lambda^{\frac{1}{2}}V^{T}</math> | <br /><math>Y=\Lambda^{\frac{1}{2}}V^{T}</math> | ||

<br />where V is the eigenvector of <math>X^{T}X</math> corresponding to the top <math>d</math> | <br />where the columns of <math>\ V</math> are the eigenvectors of <math>\ X^{T}X</math> corresponding to the top <math>\ d</math> | ||

eigenvalues, and <math>\Lambda</math> is the top <math>d</math> eigenvalues of <math>X^{T}X</math>. | eigenvalues, and <math>\Lambda</math> is the diagonal matrix of the top <math>\ d</math> eigenvalues of <math>\ X^{T}X</math>. | ||

<br />Compare this with dual PCA. | <br />Compare this with dual PCA. | ||

| Line 2,417: | Line 2,460: | ||

<br /><math>\ Y=\Sigma V^{T}</math>. | <br /><math>\ Y=\Sigma V^{T}</math>. | ||

<br />Clearly, the result of dual PCA is the same with MDS. Actually, one property of PCA is to preserve the pairwise distances between data points in both high | <br />Clearly, the result of dual PCA is the same as that of MDS. In fact, one property of PCA is that it aims to preserve the pairwise distances between data points when mapping from the high-dimensional space to the low-dimensional space. | ||
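
The solution above can be illustrated with a minimal Python sketch. It assumes NumPy, a <math>d\times n</math> data matrix whose columns are the points, and a matrix of squared Euclidean distances as input; the function names <code>classical_mds</code> and <code>squared_distances</code> are chosen here for illustration and do not come from the notes.

```python
import numpy as np

def squared_distances(X):
    """Squared Euclidean distances between the columns of a d x n matrix X."""
    sq = np.sum(X**2, axis=0)
    return sq[:, None] + sq[None, :] - 2 * X.T @ X

def classical_mds(D_sq, d):
    """Embed n points in d dimensions from an n x n matrix of squared distances."""
    n = D_sq.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n       # centering matrix H
    K = -0.5 * H @ D_sq @ H                   # K = -1/2 H D H
    eigvals, eigvecs = np.linalg.eigh(K)      # eigenvalues in ascending order
    idx = np.argsort(eigvals)[::-1][:d]       # indices of the top d eigenvalues
    lam = np.clip(eigvals[idx], 0.0, None)    # guard against tiny negative round-off
    V = eigvecs[:, idx]
    return np.sqrt(lam)[:, None] * V.T        # Y = Lambda^{1/2} V^T, shape d x n

# Illustrative data: 10 random points in 3 dimensions.
rng = np.random.default_rng(0)
X = rng.normal(size=(3, 10))
D_sq = squared_distances(X)
Y = classical_mds(D_sq, 3)
```

When the target dimension equals the rank of the centered data, as in this example, the embedding should reproduce the original pairwise distances up to rounding; with a smaller target dimension the distances are only approximated.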

Now as an appendix, we provide a short proof of the first equation <math>\ X^{T}X=-\frac{1}{2}HD^{(X)}H </math>. | |||

| Line 2,430: | Line 2,473: | ||

===Isomap (As per Handout - Section 1.6)=== | ===Isomap (As per Handout - Section 1.6)=== | ||

Isomap is a nonlinear generalization of classical MDS with the main idea being that MDS is performed on the geodesic space of the non-linear data manifold as opposed to being performed on the input space. Isomap applies MDS | The Isomap algorithm is a nonlinear generalization of classical MDS. The main idea is that MDS is performed on the geodesic space of the non-linear data manifold rather than on the input space. Isomap uses geodesic distances in the distance matrix for MDS, rather than the straight-line distances between pairs of points, in order to find the low-dimensional mapping that preserves the pairwise distances. This geodesic distance is approximated by building a k-neighbourhood graph of all the points on the manifold and finding the shortest path between each pair of points. This gives a much better distance measurement on a manifold such as the 'Swiss Roll' than Euclidean distances do. | ||

<br> | <br> | ||

| Line 2,445: | Line 2,488: | ||

<br>Process:<br> | <br>'''Process:'''<br> | ||

1. Identify the k nearest neighbours or choose points from a fixed radius | 1. Identify the k nearest neighbours or choose points from a fixed radius | ||

| Line 2,452: | Line 2,495: | ||

3. The geodesic distances, <math> \ d_{M}(i,j)</math> between all pair points on the manifold, M, are then estimated | 3. The geodesic distances, <math> \ d_{M}(i,j)</math> between all pair points on the manifold, M, are then estimated | ||

'''Note''': Isomap approximates <math> \ d_{M}(i,j)</math> as the shortest path distance <math> \ d_{G}(i,j)</math> in Graph G | '''Note''': Isomap approximates <math> \ d_{M}(i,j)</math> as the shortest path distance <math> \ d_{G}(i,j)</math> in Graph G. The k in the algorithm must be chosen carefully: if k is too small, the graph will not be connected, but if k is too large, the graph distances will be close to the Euclidean distances. | ||

<br> | <br> | ||
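
The graph-distance approximation described in the note can be sketched as follows. This is only an illustration under stated assumptions: it uses NumPy, stores points as rows (a layout chosen for this sketch), assumes no duplicate points, and computes shortest paths with the Floyd-Warshall algorithm. The function name <code>knn_graph_distances</code> is hypothetical.

```python
import numpy as np

def knn_graph_distances(X, k):
    """Shortest-path distances d_G(i, j) over a k-nearest-neighbour graph.

    X is n x p with one point per row (an assumption of this sketch).
    """
    n = X.shape[0]
    diff = X[:, None, :] - X[None, :, :]
    dist = np.sqrt(np.sum(diff**2, axis=2))          # Euclidean distances
    G = np.full((n, n), np.inf)                      # inf means "no edge"
    np.fill_diagonal(G, 0.0)
    nearest = np.argsort(dist, axis=1)[:, 1:k + 1]   # skip column 0 (the point itself)
    for i in range(n):
        G[i, nearest[i]] = dist[i, nearest[i]]
        G[nearest[i], i] = dist[i, nearest[i]]       # keep the graph symmetric
    for m in range(n):                               # Floyd-Warshall relaxation
        G = np.minimum(G, G[:, m][:, None] + G[m, :][None, :])
    return G

# Illustrative data: 50 points along a unit semicircle.
t = np.linspace(0.0, np.pi, 50)
X = np.column_stack([np.cos(t), np.sin(t)])
G = knn_graph_distances(X, k=2)
```

For the two endpoints of the semicircle, the graph distance is close to the arc length <math>\pi</math>, while the straight-line distance is 2. Squaring the entries of <code>G</code> gives a matrix that can be passed to classical MDS in place of the squared Euclidean distances.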

| Line 2,460: | Line 2,503: | ||

===Classification === | ===Classification === | ||

Classification is a technique | Classification is a technique in pattern recognition. It can yield very complex decision boundaries and is suitable for [http://en.wikipedia.org/wiki/Data_synchronization#Ordered_data, ordered data], [http://en.wikipedia.org/wiki/Categorical_data, categorical data] or a mixture of the two types. A decision or classification represents a multi-stage decision process where a binary decision is made at each stage. | ||

'''E.g''': A hand-written object can be scanned and recognized by the '''classification''' technique. The model recognizes the class it belongs to and pairs it with the corresponding object in its library. | '''E.g''': A hand-written object can be scanned and recognized by the '''classification''' technique. The model recognizes the class it belongs to and pairs it with the corresponding object in its library.<br> | ||

< | Mathematically, each object has a set of features <math>\ X</math> and a corresponding label <math>\ Y</math> which is the class it belongs to:<br> | ||

==''' Classification - November 24,2011'''== | <math>\ \{(x_1,y_1),(x_2,y_2),...,(x_n,y_n)\}</math> | ||

Since the training set is labelled with the correct answers, classification is called a "'''supervised learning'''" method. | |||

In contrast, the '''Clustering''' technique is used to explore a data set whereby the main objective is to separate the sample into groups or to provide an understanding about the underlying structure or nature of the data. Clustering is an "'''unsupervised classification'''" method, because we do not know the groups that are in the data or any group characteristics of any unit observation. There are no labels to classify the data points; all we have is the feature set <math>\ X</math>:<br> | |||

<math>\ \{(x_1),(x_2),...,(x_n)\}</math> | |||

==''' Classification - November 24, 2011'''== | |||

===Classification === | ===Classification === | ||

Classification is predicting a discrete random variable Y from another random variable X. It is analogous to regression, but the difference between them is that regression | Classification is predicting a discrete random variable Y (the label) from another random variable X. It is analogous to regression, but the difference between them is that regression uses continuous values, while classification uses discrete values (labels). | ||

Consider iid data <math>\displaystyle (X_1,Y_1),(X_2,Y_2),...,(X_n,Y_n) </math> where <br> | |||

<math> X_i = (X_{i1},...,X_{id}) \in \mathbb{R}^{d} </math>, representing an object, <br> | |||

<math>\ Y_i \in Y</math> is the label of the i-th object, and <math>\ Y</math> is some finite set. | |||

We wish to determine a function <math>\ h : \mathbb{R}^{d} \rightarrow Y</math> that can predict the value of <math>\ Y_i</math> given the value <math>\ X_i</math>. When we observe a new <math> \displaystyle X</math>, we predict <math>\displaystyle Y</math> to be <math> \displaystyle h(X)</math>. (We use h(x) in the following discussion.) | |||

<math> X_i | |||

< | The difference between classification and clustering is that clustering does not have the labels <math>\ Y_i</math>; it only puts the <math>\ X_i</math> into different groups. | ||

==== | ====Examples==== | ||

'''Object:''' An image of a pepper | '''Object:''' An image of a pepper | ||

<br>The features are defined to be colour, length, diameter, and weight. | <br>The features are defined to be colour, length, diameter, and weight. | ||

| Line 2,486: | Line 2,539: | ||

<br> '''Labels(Y):''' Red Pepper | <br> '''Labels(Y):''' Red Pepper | ||

<br> The objective of classification is to use the classification function for unseen data. Given the features, we want to classify the object, or in other words find the label. This is the typical classification problem. | <br> The objective of classification is to use the classification function for unseen data - data for which we do not know the classification. Given the features, we want to classify the object, or in other words find the label. This is the typical classification problem. | ||

<br> '''Real Life Examples''': Face Recognition - | <br> '''Real Life Examples''': Face Recognition - Pictures are just points in a high-dimensional space; we can represent them as vectors. Sound waves can also be classified in a similar manner using Fourier Transforms. Classification is also used to find drugs which cure disease. Molecules are classified as either a good fit into the cavity of a protein, or not. | ||

<br> In machine learning, classification is also known as supervised learning. Often we separate the data set into two parts. One is called a training set and the other, a testing set. We use the training set to establish a classifier rule and use the testing set to test its effectiveness. | <br> In machine learning, classification is also known as supervised learning. Often we separate the data set into two parts. One is called a training set and the other, a testing set. We use the training set to establish a classifier rule and use the testing set to test its effectiveness. | ||

| Line 2,497: | Line 2,550: | ||

<br><math> \hat{L}_h = \frac{1}{n} \sum_{i=1}^{n}I(h(X_i)\neq Y_i) </math> | <br><math> \hat{L}_h = \frac{1}{n} \sum_{i=1}^{n}I(h(X_i)\neq Y_i) </math> | ||

<br> The empirical error rate the the proportion of points that have not been classified correctly. It can be shown that this estimation always underestimates the true error rate, although we do not cover it in this course. | Where <math>\!I</math> is the indicator function. That is, <math>\!I( ) = 1</math> if the statement inside the bracket is true, and <math>\!I( ) = 0</math> otherwise. | ||

<br> The empirical error rate is the proportion of points that have not been classified correctly. It can be shown that this estimate always underestimates the true error rate, although we do not cover it in this course. | |||

A way to get a better estimate of the error is to construct the classifier with half of the given data and use the other half to calculate the error rate. | |||
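
As a small illustration of the empirical error rate <math>\hat{L}_h</math> defined above, the following Python sketch counts the proportion of misclassified points. The classifier <code>threshold_rule</code> and the four data points are made up for this example and are not from the course.

```python
import numpy as np

def empirical_error_rate(h, X, y):
    """Proportion of points (X_i, Y_i) for which h(X_i) != Y_i."""
    predictions = np.array([h(x) for x in X])
    return float(np.mean(predictions != np.asarray(y)))

def threshold_rule(x):
    # Hypothetical classifier for illustration: label 1 when the feature exceeds 0.5.
    return 1 if x > 0.5 else 0

# Made-up data: four one-dimensional objects with their labels.
X = np.array([0.1, 0.4, 0.6, 0.9])
y = np.array([0, 1, 1, 1])
error = empirical_error_rate(threshold_rule, X, y)
```

Here only the second point is misclassified, so the empirical error rate is 1/4. To follow the suggestion of using half of the data for fitting, the same function would be evaluated on the held-out half only.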

===''' Bayesians vs Frequentists'''=== | ===''' Bayesians vs Frequentists'''=== | ||

Bayesians view probability as the measure of confidence that a person holds in a proposition given certain information. They state a "prior probability" exist, which represents the possibility of a event's occurrence given no information. As we add new information regarding this event, our belief | Bayesians view probability as the measure of confidence that a person holds in a proposition given certain information. They hold that a "prior probability" exists, which represents the probability of an event's occurrence given no information. As we add new information regarding this event, our belief adjusts according to the new information, which gives us a posterior probability. Frequentists interpret probability as a "propensity" of some event. | ||

===''' Bayes Classifier '''=== | ===''' Bayes Classifier '''=== | ||

| Line 2,509: | Line 2,565: | ||

<br> <math> r(x) = P(Y=1|X=x) = \frac{P(X=x|Y=1)P(Y=1)}{P(X=x)} = \frac{P(X=x|Y=1)P(Y=1)}{P(X=x|Y=1)P(Y=1)+P(X=x|Y=0)P(Y=0)} </math> | <br> <math> r(x) = P(Y=1|X=x) = \frac{P(X=x|Y=1)P(Y=1)}{P(X=x)} = \frac{P(X=x|Y=1)P(Y=1)}{P(X=x|Y=1)P(Y=1)+P(X=x|Y=0)P(Y=0)} </math> | ||

<br> Definition: The Bayes classification rule h* is: | <br> '''Definition''': The Bayes classification rule h* is: | ||

<br> <math>h^*(x)=\left\{\begin{matrix}1 & \mathrm{if}\ r(x) > 0.5 \\ 0 & \mathrm{otherwise}\end{matrix}\right.</math> | <br> <math>h^*(x)=\left\{\begin{matrix}1 & \mathrm{if}\ r(x) > 0.5 \\ 0 & \mathrm{otherwise}\end{matrix}\right.</math> | ||

<br> The set <math>\displaystyle D(h) = \{x: P(Y=1|X=x)=P(Y=0|X=x)\} </math | <br> The set <math>\displaystyle D(h) = \{x: P(Y=1|X=x)=P(Y=0|X=x)\} </math> is called the decision boundary. | ||

<br> <math>h^*(x)=\left\{\begin{matrix}1 & \mathrm{if}\ P(Y=1|X=x) > P(Y=0|X=x) \\ 0 & otherwise\end{matrix}\right.</math> | <br> <math>h^*(x)=\left\{\begin{matrix}1 & \mathrm{if}\ P(Y=1|X=x) > P(Y=0|X=x) \\ 0 & otherwise\end{matrix}\right.</math> | ||
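
The Bayes classification rule can be sketched in Python for a simple one-dimensional case. The sketch assumes that the class-conditional distributions are Gaussian densities with known means and a common standard deviation, so the densities play the role of <math>P(X=x|Y=y)</math>; these modelling choices and all function names are assumptions made for illustration only.

```python
import math

def gaussian_pdf(x, mean, std):
    """Density of a normal distribution, used here as the class-conditional model."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))

def posterior_class1(x, prior1, mean0, mean1, std):
    """r(x) = P(Y=1 | X=x), computed with Bayes' formula."""
    num = gaussian_pdf(x, mean1, std) * prior1
    den = num + gaussian_pdf(x, mean0, std) * (1 - prior1)
    return num / den

def bayes_rule(x, prior1=0.5, mean0=0.0, mean1=2.0, std=1.0):
    """h*(x): predict 1 when r(x) > 0.5, otherwise 0."""
    return 1 if posterior_class1(x, prior1, mean0, mean1, std) > 0.5 else 0

label_high = bayes_rule(1.5)   # closer to the class-1 mean
label_low = bayes_rule(0.5)    # closer to the class-0 mean
```

With equal priors and equal standard deviations, the decision boundary in this example is the midpoint of the two means, <math>\ x=1</math>, where the two posterior probabilities are equal.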

<br> '''Theorem:''' The Bayes rule is optimal, that is, if h is any other classification rule, then <math> L(h^*) \leq L(h) </math> | <br> '''Theorem:''' The Bayes rule is optimal, that is, if <math>\ h</math> is any other classification rule, then <math> L(h^*) \leq L(h) </math> | ||