Modular Multitask Reinforcement Learning with Policy Sketches: Difference between revisions

Revision as of 21:12, 12 November 2017

Introduction


This paper describes a framework for learning composable deep subpolicies in a multitask setting, guided only by abstract sketches of high-level behavior. General reinforcement learning algorithms allow agents to solve tasks in complex environments. But tasks featuring extremely delayed rewards or other long-term structure are often difficult to solve with flat, monolithic policies, and a long line of prior work has studied methods for learning hierarchical policy representations (Sutton et al., 1999; Dietterich, 2000; Konidaris & Barto, 2007; Hauser et al., 2008). While unsupervised discovery of these hierarchies is possible (Daniel et al., 2012; Bacon & Precup, 2015), practical approaches often require detailed supervision in the form of explicitly specified high-level actions, subgoals, or behavioral primitives (Precup, 2000). These depend on state representations simple or structured enough that suitable reward signals can be effectively engineered by hand.

But is such fine-grained supervision actually necessary to achieve the full benefits of hierarchy? Specifically, is it necessary to explicitly ground high-level actions into the representation of the environment? Or is it sufficient to simply inform the learner about the abstract structure of policies, without ever specifying how high-level behaviors should make use of primitive percepts or actions?

To answer these questions, we explore a multitask reinforcement learning setting where the learner is presented with policy sketches. Policy sketches are short, ungrounded, symbolic representations of a task that describe its component parts, as illustrated in Figure 1. While symbols might be shared across tasks (get wood appears in sketches for both the make planks and make sticks tasks), the learner is told nothing about what these symbols mean, in terms of either observations or intermediate rewards.

We present an agent architecture that learns from policy sketches by associating each high-level action with a parameterization of a low-level subpolicy, and jointly optimizes over concatenated task-specific policies by tying parameters across shared subpolicies. We find that this architecture can use the high-level guidance provided by sketches, without any grounding or concrete definition, to dramatically accelerate learning of complex multi-stage behaviors. Our experiments indicate that many of the benefits to learning that come from highly detailed low-level supervision (e.g. from subgoal rewards) can also be obtained from fairly coarse high-level supervision (i.e. from policy sketches). Crucially, sketches are much easier to produce: they require no modifications to the environment dynamics or reward function, and can be easily provided by non-experts. This makes it possible to extend the benefits of hierarchical RL to challenging environments where it may not be possible to specify by hand the details of relevant subtasks. We show that our approach substantially outperforms purely unsupervised methods that do not provide the learner with any task-specific guidance about how hierarchies should be deployed, and further that the specific use of sketches to parameterize modular subpolicies makes better use of sketches than conditioning on them directly.

The present work may be viewed as an extension of recent approaches for learning compositional deep architectures from structured program descriptors (Andreas et al., 2016; Reed & de Freitas, 2016). Here we focus on learning in interactive environments. This extension presents a variety of technical challenges, requiring analogues of these methods that can be trained from sparse, non-differentiable reward signals without demonstrations of desired system behavior.

Our contributions are:

A general paradigm for multitask, hierarchical, deep reinforcement learning guided by abstract sketches of task-specific policies.

A concrete recipe for learning from these sketches, built on a general family of modular deep policy representations and a multitask actor–critic training objective.

The modular structure of our approach, which associates every high-level action symbol with a discrete subpolicy, naturally induces a library of interpretable policy fragments that are easily recombined. This makes it possible to evaluate our approach under a variety of different data conditions: (1) learning the full collection of tasks jointly via reinforcement, (2) in a zero-shot setting where a policy sketch is available for a held-out task, and (3) in an adaptation setting, where sketches are hidden and the agent must learn to adapt a pretrained policy to reuse high-level actions in a new task. In all cases, our approach substantially outperforms previous approaches based on explicit decomposition of the Q function along subtasks (Parr & Russell, 1998; Vogel & Jurafsky, 2010), unsupervised option discovery (Bacon & Precup, 2015), and several standard policy gradient baselines.

We consider three families of tasks: a 2-D Minecraft-inspired crafting game (Figure 3a), in which the agent must acquire particular resources by finding raw ingredients, combining them together in the proper order, and in some cases building intermediate tools that enable the agent to alter the environment itself; a 2-D maze navigation task that requires the agent to collect keys and open doors; and a 3-D locomotion task (Figure 3b) in which a quadrupedal robot must actuate its joints to traverse a narrow winding cliff.

In all tasks, the agent receives a reward only after the final goal is accomplished. For the most challenging tasks, involving sequences of four or five high-level actions, a task-specific agent initially following a random policy essentially never discovers the reward signal, so these tasks cannot be solved without considering their hierarchical structure. We have released code at http://github.com/jacobandreas/psketch.

Related Work

The agent representation we describe in this paper belongs to the broader family of hierarchical reinforcement learners. As detailed in Section 3, our approach may be viewed as an instantiation of the options framework first described by Sutton et al. (1999). A large body of work describes techniques for learning options and related abstract actions, in both single- and multitask settings. Most techniques for learning options rely on intermediate supervisory signals, e.g. to encourage exploration (Kearns & Singh, 2002) or completion of pre-defined subtasks (Kulkarni et al., 2016). An alternative family of approaches employs post-hoc analysis of demonstrations or pretrained policies to extract reusable sub-components (Stolle & Precup, 2002; Konidaris et al., 2011; Niekum et al., 2015). Techniques for learning options with less guidance than the present work include Bacon & Precup (2015) and Vezhnevets et al. (2016), and other general hierarchical policy learners include Daniel et al. (2012), Bakker & Schmidhuber (2004) and Menache et al. (2002). We will see that the minimal supervision provided by policy sketches results in (sometimes dramatic) improvements over fully unsupervised approaches, while being substantially less onerous for humans to provide compared to the grounded supervision (such as explicit subgoals or feature abstraction hierarchies) used in previous work.

Once a collection of high-level actions exists, agents are faced with the problem of learning meta-level (typically semi-Markov) policies that invoke appropriate high-level actions in sequence (Precup, 2000). The learning problem we describe in this paper is in some sense the direct dual to the problem of learning these meta-level policies: there, the agent begins with an inventory of complex primitives and must learn to model their behavior and select among them; here we begin knowing the names of appropriate high-level actions but nothing about how they are implemented, and must infer implementations (but not, initially, abstract plans) from context. Our model can be combined with these approaches to support a “mixed” supervision condition where sketches are available for some tasks but not others (Section 4.5).

Another closely related line of work is the Hierarchical Abstract Machines (HAM) framework introduced by Parr & Russell (1998). Like our approach, HAMs begin with a representation of a high-level policy as an automaton (or a more general computer program; Andre & Russell, 2001; Marthi et al., 2004) and use reinforcement learning to fill in low-level details. Because these approaches attempt to learn a single representation of the Q function for all subtasks and contexts, they require extremely strong formal assumptions about the form of the reward function and state representation (Andre & Russell, 2002) that the present work avoids by decoupling the policy representation from the value function. They perform less effectively when applied to arbitrary state representations where these assumptions do not hold (Section 4.3). We are additionally unaware of past work showing that HAM automata can be automatically inferred for new tasks given a pre-trained model, while here we show that it is easy to solve the corresponding problem for sketch followers (Section 4.5).

Our approach is also inspired by a number of recent efforts toward compositional reasoning and interaction with structured deep models. Such models have been previously used for tasks involving question answering (Iyyer et al., 2014; Andreas et al., 2016) and relational reasoning (Socher et al., 2012), and more recently for multi-task, multi-robot transfer problems (Devin et al., 2016). In the present work, as in existing approaches employing dynamically assembled modular networks, task-specific training signals are propagated through a collection of composed discrete structures with tied weights. Here the composed structures specify time-varying policies rather than feedforward computations, and their parameters must be learned via interaction rather than direct supervision. Another closely related family of models includes neural programmers (Neelakantan et al., 2015) and programmer–interpreters (Reed & de Freitas, 2016), which generate discrete computational structures but require supervision in the form of output actions or full execution traces.

We view the problem of learning from policy sketches as complementary to the instruction following problem studied in the natural language processing literature. Existing work on instruction following focuses on mapping from natural language strings to symbolic action sequences that are then executed by a hard-coded interpreter (Branavan et al., 2009; Chen & Mooney, 2011; Artzi & Zettlemoyer, 2013; Tellex et al., 2011). Here, by contrast, we focus on learning to execute complex actions given symbolic representations as a starting point. Instruction following models may be viewed as joint policies over instructions and environment observations (so their behavior is not defined in the absence of instructions), while the model described in this paper naturally supports adaptation to tasks where no sketches are available. We expect that future work might combine the two lines of research, bootstrapping policy learning directly from natural language hints rather than the semi-structured sketches used here.

Learning Modular Policies from Sketches

We consider a multitask reinforcement learning problem arising from a family of infinite-horizon discounted Markov decision processes in a shared environment. This environment is specified by a tuple $(S, A, P, \gamma)$, with $S$ a set of states, $A$ a set of low-level actions, $P : S \times A \times S \to \mathbb{R}$ a transition probability distribution, and $\gamma$ a discount factor. Each task $t \in T$ is then specified by a pair $(R_t, \rho_t)$, with $R_t : S \to \mathbb{R}$ a task-specific reward function and $\rho_t : S \to \mathbb{R}$ an initial distribution over states. For a fixed sequence $\{(s_i, a_i)\}$ of states and actions obtained from a rollout of a given policy, we will denote the empirical return starting in state $s_i$ as $q_i = \sum_{j=i+1}^\infty \gamma^{j-i-1}R(s_j)$. In addition to the components of a standard multitask RL problem, we assume that tasks are annotated with sketches $K_t$, each consisting of a sequence $(b_{t1}, b_{t2}, \ldots)$ of high-level symbolic labels drawn from a fixed vocabulary $B$.
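The empirical return $q_i$ defined above can be computed for every step of a rollout with a single backward pass. The following is a minimal sketch, assuming the rollout is truncated to a finite list of rewards (the definition itself is infinite-horizon); the function name `empirical_returns` and the list-based representation are illustrative assumptions, not taken from the paper or its released code.

```python
def empirical_returns(rewards, gamma):
    """Compute q_i = sum_{j=i+1}^{n-1} gamma^(j-i-1) * R(s_j) for each i.

    `rewards[j]` is assumed to hold R(s_j) for a finite rollout s_0..s_{n-1}.
    Rewards after the end of the rollout are treated as zero (an assumption).
    """
    n = len(rewards)
    q = [0.0] * n
    running = 0.0  # equals R(s_{i+1}) + gamma * q_{i+1} when index i is visited
    for i in range(n - 1, -1, -1):
        q[i] = running
        running = rewards[i] + gamma * running
    return q


# A reward of 1 arrives only in the final state, as in the sparse-reward tasks.
example = empirical_returns([0.0, 0.0, 1.0], gamma=0.5)
```

Note that $q_i$ excludes the reward of $s_i$ itself, since the sum starts at $j = i+1$; the last state of a truncated rollout therefore has return zero.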

Model

We exploit the structural information provided by sketches by constructing for each symbol b a corresponding subpolicy $\pi_b$. By sharing each subpolicy across all tasks annotated with the corresponding symbol, our approach naturally learns the shared abstraction for the corresponding subtask, without requiring any information about the grounding of that task to be explicitly specified by annotation.
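The sharing of subpolicies across tasks can be pictured as a lookup table keyed by symbol. The snippet below is an illustrative sketch only: `build_subpolicy_library`, `task_policy`, and the `make_subpolicy` factory are hypothetical names, and the sketches shown are assumed examples (only "get wood" being shared between "make planks" and "make sticks" comes from the text).

```python
def build_subpolicy_library(sketches, make_subpolicy):
    """Create exactly one subpolicy object per distinct symbol b.

    `sketches` maps a task name to its sequence of symbols.
    `make_subpolicy` is a hypothetical factory, e.g. one that builds a
    small neural network; here it can be any callable taking the symbol.
    """
    library = {}
    for symbols in sketches.values():
        for b in symbols:
            if b not in library:
                library[b] = make_subpolicy(b)
    return library


def task_policy(sketch, library):
    """Concatenate the shared subpolicies named by one task's sketch."""
    return [library[b] for b in sketch]


# Assumed example sketches; the second symbol of each is made up.
sketches = {
    "make planks": ["get wood", "use toolshed"],
    "make sticks": ["get wood", "use workbench"],
}
library = build_subpolicy_library(sketches, lambda b: {"symbol": b})
planks = task_policy(sketches["make planks"], library)
sticks = task_policy(sketches["make sticks"], library)
```

Because both task policies hold a reference to the same "get wood" object, any update made while training one task is immediately visible to the other, which is what tying parameters amounts to in this toy setting.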

At each timestep, a subpolicy may select either a low-level action $a \in A$ or a special STOP action. We denote the augmented action space $A^+ := A \cup \{\text{STOP}\}$. At a high level, this framework is agnostic to the implementation of subpolicies: any function that maps a representation of the current state onto a distribution over $A^+$ will do. In this paper, we focus on the case where each $\pi_b$ is represented as a neural network. These subpolicies may be viewed as options of the kind described by Sutton et al. (1999), with the key distinction that they have no initiation semantics, but are instead invokable everywhere, and have no explicit representation as a function from an initial state to a distribution over final states (instead implicitly using the STOP action to terminate).

Given a fixed sketch $(b_1, b_2, \ldots)$, a task-specific policy $\Pi_t$ is formed by concatenating its associated subpolicies in sequence. In particular, the high-level policy maintains a subpolicy index $i$ (initially 0), and executes actions from $\pi_{b_i}$ until the STOP symbol is emitted, at which point control is passed to $\pi_{b_{i+1}}$. We may thus think of $\Pi_t$ as inducing a Markov chain over the state space $S \times B$, with transitions:

$(s, b_i) \to (s', b_i)$ with pr. $\sum_{a \in A} \pi_{b_i}(a|s) \cdot P(s'|s, a)$

$(s, b_i) \to (s, b_{i+1})$ with pr. $\pi_{b_i}(\text{STOP}|s)$
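The control flow of a sketch-following policy can be sketched in a few lines. This is a toy illustration under stated assumptions: subpolicies are deterministic functions of the state (in the paper they are distributions over actions plus STOP), the environment is a 1-D corridor with integer positions, and the names `run_sketch`, `go_right_to_3`, and `go_left_to_1` are hypothetical.

```python
STOP = "STOP"


def run_sketch(subpolicies, step, state, max_steps=100):
    """Run subpolicies in order, handing control to the next one on STOP.

    Returns the visited (state, subpolicy index) pairs, i.e. a path through
    the augmented space of environment states and sketch positions.
    """
    i = 0
    trajectory = [(state, i)]
    for _ in range(max_steps):
        if i >= len(subpolicies):
            break  # every subpolicy in the sketch has emitted STOP
        action = subpolicies[i](state)
        if action == STOP:
            i += 1  # index advances, environment state is unchanged
        else:
            state = step(state, action)  # index unchanged, state moves
        trajectory.append((state, i))
    return trajectory


# Toy deterministic subpolicies on integer positions (assumed for illustration).
def go_right_to_3(s):
    return 1 if s < 3 else STOP


def go_left_to_1(s):
    return -1 if s > 1 else STOP


trajectory = run_sketch([go_right_to_3, go_left_to_1], lambda s, a: s + a, 0)
```

The two branches of the loop mirror the two transition types: a low-level action changes the environment state while the index stays fixed, and STOP advances the index while the state stays fixed.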

Note that $\Pi_t$ is semi-Markov with respect to projection of the augmented state space $S \times B$ onto the underlying state space $S$. We denote the complete family of task-specific policies $\Pi := \bigcup_t \{\Pi_t\}$, and let each $\pi_b$ be an arbitrary function of the current environment state parameterized by some weight vector $\theta_b$. The learning problem is to optimize over all $\theta_b$ to maximize expected discounted reward

$J(\Pi) := \sum_t J(\Pi_t) := \sum_t E_{s_i \sim \Pi_t} \left[ \sum_i \gamma^i R_t(s_i) \right]$

across all tasks $t \in T$.

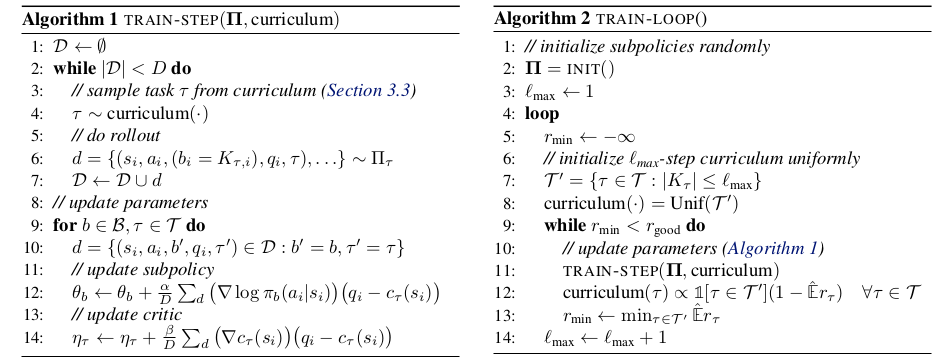

Policy Optimization

Here that optimization is accomplished via a simple decoupled actor–critic method. In a standard policy gradient approach, with a single policy $\pi$ with parameters $\theta$, we compute gradient steps of the form (Williams, 1992):

$\nabla_\theta J(\pi) = \sum_i \left( \nabla_\theta \log \pi(a_i|s_i) \right) \left( q_i - c(s_i) \right)$, (1)

where the baseline or “critic” $c$ can be chosen independently of the future without introducing bias into the gradient. Recalling our previous definition of $q_i$ as the empirical return starting from $s_i$, this form of the gradient corresponds to a generalized advantage estimator (Schulman et al., 2015a) with $\lambda = 1$. Here $c$ achieves close to the optimal variance (Greensmith et al., 2004) when it is set