SuperGLUE

No edit summary |

|||

| (8 intermediate revisions by 4 users not shown) | |||

| Line 9: | Line 9: | ||

There have been several benchmarks attempting to standardize the field of language understanding tasks. SentEval [6] evaluated fixed-size sentence embeddings for tasks. DecaNLP [7] converts tasks into a general question-answering format. GLUE offers a much more flexible and extensible benchmark since it imposes no restrictions on model architectures or parameter sharing. | There have been several benchmarks attempting to standardize the field of language understanding tasks. SentEval [6] evaluated fixed-size sentence embeddings for tasks. DecaNLP [7] converts tasks into a general question-answering format. GLUE offers a much more flexible and extensible benchmark since it imposes no restrictions on model architectures or parameter sharing. | ||

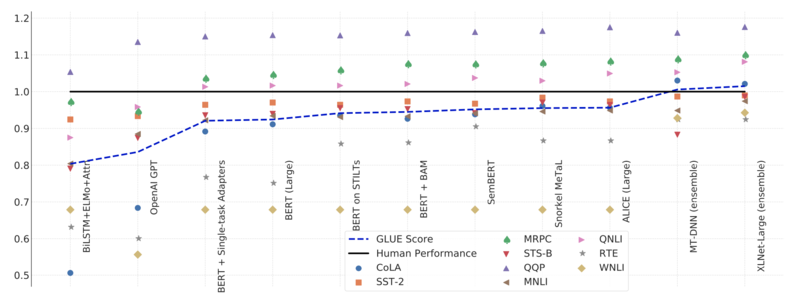

GLUE has been the gold standard for language understanding tests since its release. In fact, the benchmark has promoted growth in language models with all the transformer-based models started with attempting to achieve high scores on GLUE. Original GPT and BERT models scored 72.8 and 80.2 on GLUE. | GLUE has been the gold standard for language understanding tests since its release. In fact, the benchmark has promoted growth in language models with all the transformer-based models started with attempting to achieve high scores on GLUE. Original GPT and BERT models scored 72.8 and 80.2 on GLUE. However, the latest GPT and BERT models far outperform these benchmarks and strike a need for a more robust and difficult benchmark. | ||

Limits to current approaches are also apparent via the GLUE suite. Performance on the GLUE diagnostic entailment dataset falls far below the average human performance of 0.80 R3 reported in the original GLUE publication, with models performing near, or even below, chance on some linguistic phenomena. | |||

== Motivation == | == Motivation == | ||

| Line 27: | Line 29: | ||

'''Comprehensive human baselines:''' Human performance estimates are provided for all benchmark tasks. | '''Comprehensive human baselines:''' Human performance estimates are provided for all benchmark tasks. | ||

'''Improved code support:''' SuperGLUE is | '''Improved code support:''' SuperGLUE is built around the widely used tools including PyTorch and AllenNLP. | ||

'''Refined usage rules:''' SuperGLUE leaderboard ensures fair competition and full credit to creators. | '''Refined usage rules:''' SuperGLUE leaderboard ensures fair competition and full credit to creators. | ||

| Line 33: | Line 35: | ||

== Design Process == | == Design Process == | ||

SuperGLUE is designed to be widely applicable to many different NLP tasks. That being said, in designing SuperGLUE, certain criteria needed to be established to determine whether | SuperGLUE is designed to be widely applicable to many different NLP tasks. That being said, in designing SuperGLUE, certain criteria needed to be established to determine whether an NLP task can be completed. The authors specified six such requirements, which are listed below. | ||

#'''Task substance:''' Tasks should test a system's reasoning and understanding of English text. | #'''Task substance:''' Tasks should test a system's reasoning and understanding of English text. | ||

#'''Task difficulty:''' Tasks should be solvable by those who graduated from an English postsecondary institution. | #'''Task difficulty:''' Tasks should be solvable by those who graduated from an English postsecondary institution. | ||

#'''Evaluability:''' Tasks are required to have an automated performance metric that aligns to human | #'''Evaluability:''' Tasks are required to have an automated performance metric that aligns to human judgments of the output quality. | ||

#'''Public data:''' Tasks need to have existing public data for training with a preference for an additional private test set. | #'''Public data:''' Tasks need to have existing public data for training with a preference for an additional private test set. | ||

#'''Task format:''' Preference for tasks with simpler input and output formats to steer users of the benchmark away from tasks specific architectures. | #'''Task format:''' Preference for tasks with simpler input and output formats to steer users of the benchmark away from tasks specific architectures. | ||

#'''License:''' Task data must be under a license that allows the redistribution and use for research. | #'''License:''' Task data must be under a license that allows the redistribution and use for research. | ||

To select tasks | To select tasks included in the benchmarks, the authors put a public request for NLP tasks and received many. From this, they filtered the tasks according to the criteria above and eliminated any tasks that could not be used due to licensing issues or other problems. | ||

== SuperGLUE Tasks == | == SuperGLUE Tasks == | ||

SuperGLUE has 8 language understanding tasks. They test a model’s understanding of texts in English. The tasks are built to be equivalent to | SuperGLUE has 8 language understanding tasks. They test a model’s understanding of texts in English. The tasks are built to be equivalent to most college-educated English speakers' capabilities and are beyond the capabilities of most state-of-the-art systems today. | ||

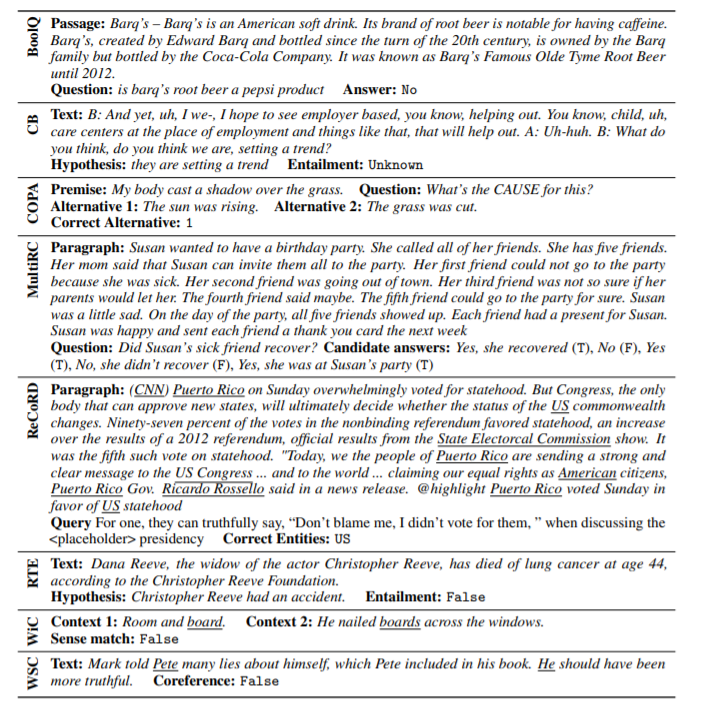

'''BoolQ''' (Boolean Questions [9]): QA task consisting of short passage and related questions to the passage as either a yes or a no answer. | '''BoolQ''' (Boolean Questions [9]): QA task consisting of short passage and related questions to the passage as either a yes or a no answer. | ||

'''CB''' (CommitmentBank [10]): Corpus of text where sentences have embedded clauses and sentences are written | '''CB''' (CommitmentBank [10]): Corpus of text where sentences have embedded clauses and sentences are written to keep the clause accurate. | ||

'''COPA''' (Choice of plausible Alternatives [11]): Reasoning tasks in which given a sentence the system must be able to choose the cause or effect of the sentence from two potential choices. | '''COPA''' (Choice of plausible Alternatives [11]): Reasoning tasks in which given a sentence the system must be able to choose the cause or effect of the sentence from two potential choices. | ||

| Line 56: | Line 58: | ||

'''MultiRC''' (Multi-Sentence Reading Comprehension [12]): QA task in which given a passage and potential answers, the model should label the answers as true or false. The Passages are taken from seven domains including news, fiction, and historical text etc. | '''MultiRC''' (Multi-Sentence Reading Comprehension [12]): QA task in which given a passage and potential answers, the model should label the answers as true or false. The Passages are taken from seven domains including news, fiction, and historical text etc. | ||

'''ReCoRD''' (Reading Comprehension with Commonsense Reasoning Dataset [13]): A multiple-choice, question answering task, | '''ReCoRD''' (Reading Comprehension with Commonsense Reasoning Dataset [13]): A multiple-choice, question answering task, were given a passage with a masked entity, the model should be able to predict the masked out entity from the available choices. The articles are extracted from CNN and Daily Mail. | ||

'''RTE''' (Recognizing Textual Entailment [14]): Classifying whether a text can be plausibly inferred from a given passage. | '''RTE''' (Recognizing Textual Entailment [14]): Classifying whether a text can be plausibly inferred from a given passage. | ||

'''WiC''' (Word in Context [15]): Identifying whether a polysemous word used in multiple sentences is being used with the same sense across sentences or not. | '''WiC''' (Word in Context [15]): Identifying whether a polysemous word used in multiple sentences is being used with the same sense across sentences or not. | ||

technologies | |||

'''WSC''' (Winograd Schema Challenge, [16]): A conference resolution task where sentences include pronouns and noun phrases from the sentence. The goal is to identify the correct reference to a noun phrase corresponding to the pronoun. | '''WSC''' (Winograd Schema Challenge, [16]): A conference resolution task where sentences include pronouns and noun phrases from the sentence. The goal is to identify the correct reference to a noun phrase corresponding to the pronoun. | ||

| Line 67: | Line 69: | ||

[[File: supergluetasks.png]] | [[File: supergluetasks.png]] | ||

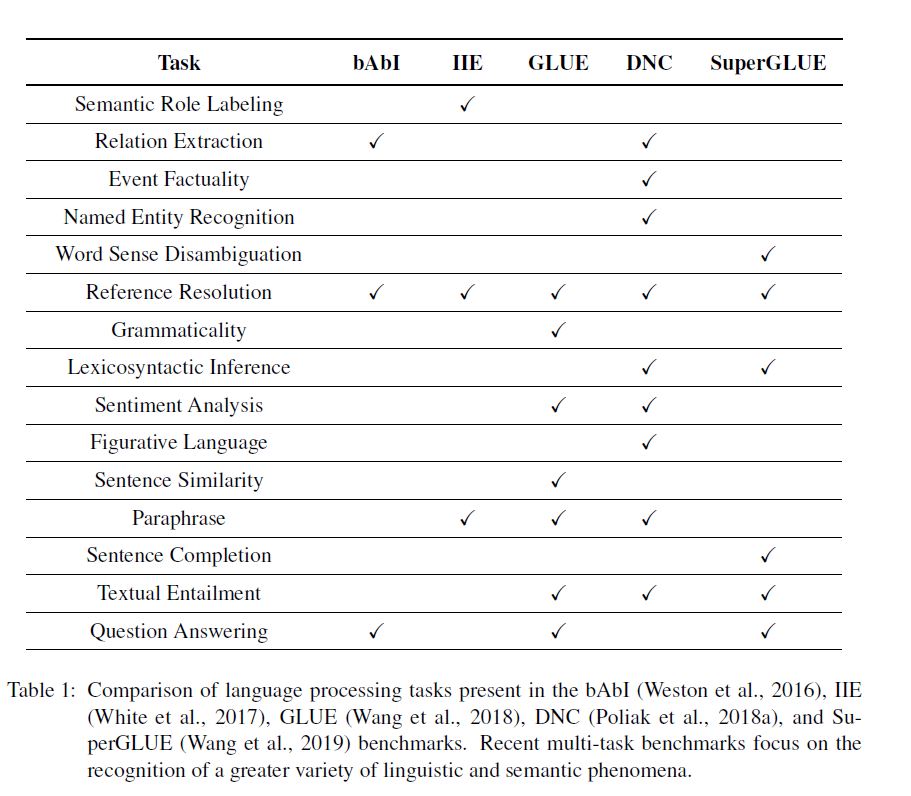

In the following chart[18], you can see the differences between the different benchmarks. | |||

[[File: superglue.JPG]] | |||

Development set examples from the tasks in SuperGLUE are shown below | |||

[[File: CaptureGlue.PNG]] | |||

===Scoring=== | ===Scoring=== | ||

With GLUE, they seek to give a sense of aggregate system performance overall tasks by averaging | With GLUE, they seek to give a sense of aggregate system performance overall tasks by averaging all tasks scores. Lacking a fair criterion to weigh the contributions of each task to the overall score, they opt for the simple approach of weighing each task equally and for tasks with multiple metrics, first averaging those metrics to get a task score. | ||

== Model Analysis == | == Model Analysis == | ||

| Line 78: | Line 88: | ||

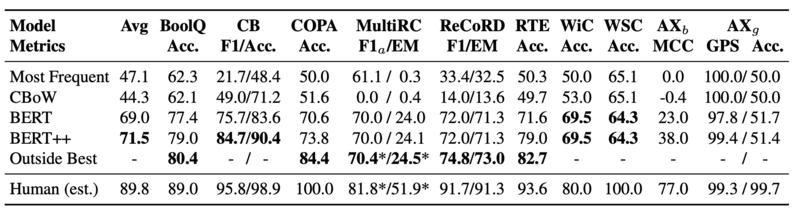

Table 1 offers a summary of the results from SuperGLUE across different models. CBOW baselines are generally close to roughly chance performance. BERT, on the other hand, increased the SuperGLUE score by 25 points and had the highest improvement on most tasks, especially MultiRCC, ReCoRD, and RTE. WSC is trickier for BERT, potentially owing to the small dataset size. | Table 1 offers a summary of the results from SuperGLUE across different models. CBOW baselines are generally close to roughly chance performance. BERT, on the other hand, increased the SuperGLUE score by 25 points and had the highest improvement on most tasks, especially MultiRCC, ReCoRD, and RTE. WSC is trickier for BERT, potentially owing to the small dataset size. | ||

BERT++[8] increases BERT’s performance even further. However, achieving the goal of the benchmark, the best model/score still lags behind compared to human performance. The human results for WiC, MltiRC, RTE, and ReCoRD were already available on [15], [12], [17], and [13] respectively. However, for the remaining tasks, the authors employed | BERT++[8] increases BERT’s performance even further. However, achieving the goal of the benchmark, the best model/score still lags behind compared to human performance. The human results for WiC, MltiRC, RTE, and ReCoRD were already available on [15], [12], [17], and [13] respectively. However, for the remaining tasks, the authors employed crowd workers to reannotate a sample of each test set according to the methods used in [17]. The large gaps should be relatively tricky for models to close in on. The biggest margin is for WSC with 35 points and CV, RTE, BoolQ, WiC all have 10 point margins. | ||

| Line 84: | Line 94: | ||

Table 1: Baseline performance on SuperGLUE tasks. | Table 1: Baseline performance on SuperGLUE tasks. | ||

== Source Code == | |||

The source code is available at https://github.com/nyu-mll/jiant . | |||

== Conclusion == | == Conclusion == | ||

| Line 116: | Line 130: | ||

[11] Melissa Roemmele, Cosmin Adrian Bejan, and Andrew S. Gordon. Choice of plausible alternatives: An evaluation of commonsense causal reasoning. In 2011 AAAI Spring Symposium Series, 2011. | [11] Melissa Roemmele, Cosmin Adrian Bejan, and Andrew S. Gordon. Choice of plausible alternatives: An evaluation of commonsense causal reasoning. In 2011 AAAI Spring Symposium Series, 2011. | ||

[12] Daniel Khashabi, Snigdha Chaturvedi, Michael Roth, Shyam Upadhyay, and Dan Roth. Looking beyond the surface: A challenge set for reading comprehension over multiple sentences. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language | [12] Daniel Khashabi, Snigdha Chaturvedi, Michael Roth, Shyam Upadhyay, and Dan Roth. Looking beyond the surface: A challenge set for reading comprehension over multiple sentences. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT). Association for Computational Linguistics, 2018. URL https://www.aclweb.org/anthology/papers/N/N18/N18-1023/. | ||

[13] Sheng Zhang, Xiaodong Liu, Jingjing Liu, Jianfeng Gao, Kevin Duh, and Benjamin Van Durme. ReCoRD: Bridging the gap between human and machine commonsense reading comprehension. arXiv preprint 1810.12885, 2018. | [13] Sheng Zhang, Xiaodong Liu, Jingjing Liu, Jianfeng Gao, Kevin Duh, and Benjamin Van Durme. ReCoRD: Bridging the gap between human and machine commonsense reading comprehension. arXiv preprint 1810.12885, 2018. | ||

| Line 127: | Line 141: | ||

[17] Nikita Nangia and Samuel R. Bowman. Human vs. Muppet: A conservative estimate of human performance on the GLUE benchmark. In Proceedings of the Association of Computational Linguistics (ACL). Association for Computational Linguistics, 2019. URL https://woollysocks.github.io/assets/GLUE_Human_Baseline.pdf. | [17] Nikita Nangia and Samuel R. Bowman. Human vs. Muppet: A conservative estimate of human performance on the GLUE benchmark. In Proceedings of the Association of Computational Linguistics (ACL). Association for Computational Linguistics, 2019. URL https://woollysocks.github.io/assets/GLUE_Human_Baseline.pdf. | ||

[18] Storks, Shane, Qiaozi Gao, and Joyce Y. Chai. "Recent advances in natural language inference: A survey of benchmarks, resources, and approaches." arXiv preprint arXiv:1904.01172 (2019). | |||

Latest revision as of 16:58, 6 December 2020

Presented by

Shikhar Sakhuja

Introduction

Natural Language Processing (NLP) has seen immense improvements over the past two years. RNN-based models such as ELMo [2] and Transformer-based [1] models such as OpenAI GPT [3] and BERT [4] have revolutionized the field. These models render GLUE [5], the standard benchmark for NLP tasks, ineffective. The GLUE benchmark was released over a year ago and assesses NLP models using a single-number metric that summarizes performance over a diverse set of tasks. However, transformer-based models now outperform non-expert humans on several of these tasks. With such models achieving near-perfect scores on almost all GLUE tasks, there is a need for a new benchmark that involves harder and even more diverse language tasks. The authors release SuperGLUE as a new benchmark with a more rigorous set of language understanding tasks.

Related Work

There have been several benchmarks attempting to standardize the field of language understanding tasks. SentEval [6] evaluated fixed-size sentence embeddings for tasks. DecaNLP [7] converts tasks into a general question-answering format. GLUE offers a much more flexible and extensible benchmark since it imposes no restrictions on model architectures or parameter sharing.

GLUE has been the gold standard for language understanding tests since its release. In fact, the benchmark has driven progress in language models, with the transformer-based models all starting out by attempting to achieve high scores on GLUE. The original GPT and BERT models scored 72.8 and 80.2 on GLUE, respectively. However, the latest GPT and BERT models far exceed these scores, creating a need for a more robust and difficult benchmark.

Limits to current approaches are also apparent via the GLUE suite. Performance on the GLUE diagnostic entailment dataset falls far below the average human performance of 0.80 R3 reported in the original GLUE publication, with models performing near, or even below, chance on some linguistic phenomena.

Motivation

Transformer-based models allow NLP systems to be trained using transfer learning, which was previously seen mainly in Computer Vision tasks and was notoriously difficult for language because of the discrete nature of words. Transfer learning in NLP allows models to be trained over terabytes of language data in a self-supervised fashion. These models can then be fine-tuned for downstream tasks such as sentiment classification and fake news detection. The fine-tuned models beat many human labelers who are not experts in the domain. This creates a need for a newer, more robust benchmark that can stay relevant given the rapid improvements in the field of NLP.

Figure 1: Transformer-based models outperforming humans in GLUE tasks.

Improvements to GLUE

SuperGLUE follows the design principles of GLUE but seeks to improve on its predecessor in many ways:

More challenging tasks: SuperGLUE retains the two hardest tasks in GLUE and adds tasks, gathered through an open call, that are difficult for current NLP approaches.

More diverse task formats: SuperGLUE expands GLUE task formats to include coreference resolution and question answering.

Comprehensive human baselines: Human performance estimates are provided for all benchmark tasks.

Improved code support: SuperGLUE is built around widely used tools, including PyTorch and AllenNLP.

Refined usage rules: SuperGLUE leaderboard ensures fair competition and full credit to creators.

Design Process

SuperGLUE is designed to be widely applicable to many different NLP tasks. That said, in designing SuperGLUE, certain criteria needed to be established to determine whether an NLP task can be included. The authors specified six such requirements, which are listed below.

- Task substance: Tasks should test a system's reasoning and understanding of English text.

- Task difficulty: Tasks should be solvable by those who graduated from an English postsecondary institution.

- Evaluability: Tasks are required to have an automated performance metric that aligns to human judgments of the output quality.

- Public data: Tasks need to have existing public data for training with a preference for an additional private test set.

- Task format: Tasks with simpler input and output formats are preferred, to steer users of the benchmark away from task-specific architectures.

- License: Task data must be under a license that allows the redistribution and use for research.

To select the tasks included in the benchmark, the authors put out a public request for NLP tasks and received many proposals. They then filtered the proposed tasks according to the criteria above and eliminated any that could not be used due to licensing issues or other problems.

SuperGLUE Tasks

SuperGLUE has 8 language understanding tasks. They test a model’s understanding of texts in English. The tasks are designed to be solvable by most college-educated English speakers while remaining beyond the capabilities of most state-of-the-art systems today.

BoolQ (Boolean Questions [9]): A QA task consisting of a short passage and a question about the passage that must be answered with either yes or no.

CB (CommitmentBank [10]): A corpus of short texts in which at least one sentence contains an embedded clause. Each embedded clause is annotated with the degree to which the writer appears committed to the truth of the clause.

COPA (Choice of Plausible Alternatives [11]): A reasoning task in which, given a sentence, the system must choose the cause or effect of the sentence from two potential choices.

MultiRC (Multi-Sentence Reading Comprehension [12]): A QA task in which, given a passage, a question, and potential answers, the model should label each answer as true or false. The passages are taken from seven domains, including news, fiction, and historical text.

ReCoRD (Reading Comprehension with Commonsense Reasoning Dataset [13]): A multiple-choice question answering task where, given a passage and a query with a masked entity, the model should predict the masked-out entity from the available choices. The articles are extracted from CNN and Daily Mail.

RTE (Recognizing Textual Entailment [14]): Classifying whether a text can be plausibly inferred from a given passage.

WiC (Word in Context [15]): Identifying whether a polysemous word that appears in two sentences is used with the same sense in both.

WSC (Winograd Schema Challenge [16]): A coreference resolution task in which each sentence contains a pronoun and a list of noun phrases from the sentence. The goal is to identify which noun phrase the pronoun refers to.

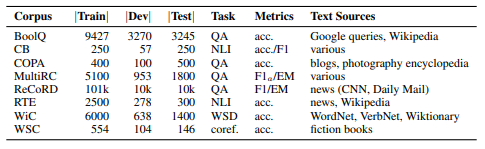

The table below summarizes the tasks included in SuperGLUE, along with the task type and the size of each dataset. In the table, WSD stands for word sense disambiguation, NLI for natural language inference, coref. for coreference resolution, and QA for question answering.

The following chart [18] shows the differences between the different benchmarks.

Development set examples from the tasks in SuperGLUE are shown below.

Scoring

As with GLUE, the authors seek to give a sense of aggregate system performance over all tasks by averaging the scores of all tasks. Lacking a fair criterion for weighting the contribution of each task to the overall score, they opt for the simple approach of weighting each task equally. For tasks with multiple metrics, those metrics are first averaged to get a single task score.
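The aggregation described above can be sketched in a few lines of Python. This is only an illustration of the equal-weight averaging scheme, not the official leaderboard code; the function names and all metric values below are hypothetical placeholders rather than reported results.

```python
from statistics import mean


def task_score(metrics):
    """Average all metrics reported for a single task into one task score."""
    return mean(metrics.values())


def benchmark_score(results):
    """Weight every task equally: the overall score is the mean of task scores."""
    return mean(task_score(metrics) for metrics in results.values())


# Hypothetical per-task metrics (placeholder numbers, not real results).
results = {
    "BoolQ": {"accuracy": 80.0},               # single metric
    "CB": {"accuracy": 90.0, "f1": 80.0},      # two metrics, averaged first
    "MultiRC": {"f1a": 70.0, "em": 30.0},      # two metrics, averaged first
}

overall = benchmark_score(results)
print(f"Overall score: {overall:.1f}")
```

Note that averaging within a task first means a task with two metrics does not count twice in the overall score.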

Model Analysis

SuperGLUE includes two diagnostic tasks for analyzing linguistic knowledge and gender bias in models. To analyze linguistic and world knowledge, submissions to SuperGLUE are required to include predictions of the sentence-pair relation (entailment, not_entailment) on the diagnostic set, produced by the model used for the RTE task. As for gender bias, SuperGLUE includes the diagnostic dataset Winogender, which measures gender bias in coreference resolution systems. A poor bias score indicates gender bias; however, a good score does not necessarily mean that a model is unbiased. This is one limitation of the dataset.

Results

Table 1 offers a summary of the results from SuperGLUE across different models. CBOW baselines are generally close to chance performance. BERT, on the other hand, increases the SuperGLUE score by 25 points and shows the largest improvement on most tasks, especially MultiRC, ReCoRD, and RTE. WSC is trickier for BERT, potentially owing to the small dataset size.

BERT++ [8] increases BERT’s performance even further. However, in keeping with the goal of the benchmark, the best model score still lags behind human performance. The human results for WiC, MultiRC, RTE, and ReCoRD were already available from [15], [12], [17], and [13], respectively. For the remaining tasks, the authors employed crowd workers to reannotate a sample of each test set according to the methods used in [17]. The large gaps should be relatively tricky for models to close. The biggest margin is for WSC, at 35 points, while CB, RTE, BoolQ, and WiC all have margins of about 10 points.

Table 1: Baseline performance on SuperGLUE tasks.

Source Code

The source code is available at https://github.com/nyu-mll/jiant .

Conclusion

SuperGLUE fills the gap left by GLUE's inability to keep up with the state of the art in NLP. The new language tasks that the benchmark offers are built to be more robust and more difficult for NLP models to solve. With the gap between model and human performance being around 10-35 points on the tasks highlighted above, SuperGLUE is likely to be around for some time before models catch up to it as well. Overall, this is a significant contribution to improving general-purpose natural language understanding.

Critique

This is quite a fascinating read where the authors of the gold-standard benchmark have essentially conceded to the progress in NLP. Bowman’s team resorting to creating a new benchmark altogether to keep up with the rapid pace of increase in NLP makes me wonder if these benchmarks are inherently flawed. Applying the idea of Wittgenstein’s Ruler, are we measuring the performance of models using the benchmark, or the quality of benchmarks using the models?

I’m curious how long SuperGLUE would stay relevant owing to advances in NLP. GPT-3, released in June 2020, has outperformed GPT-2 and BERT by a huge margin, given the 100x increase in parameters (175B parameters over ~600GB for GPT-3, compared to 1.5B parameters over 40GB for GPT-2). In October 2020, a new deep learning technique (Pattern Exploiting Training) managed to train a Transformer NLP model with 223M parameters (roughly 0.1% of the parameters of GPT-3) that outperformed GPT-3 by 3 points on SuperGLUE. With the field improving so rapidly, I think SuperGLUE is nothing but a band-aid for benchmarking tasks and will turn obsolete in no time.
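As a quick sanity check on the scale comparison, the ratios can be computed directly from the parameter counts quoted in this critique. The snippet is only back-of-the-envelope arithmetic; the variable names are illustrative.

```python
# Parameter counts as quoted in the critique above.
gpt3_params = 175e9   # GPT-3: 175B parameters
gpt2_params = 1.5e9   # GPT-2: 1.5B parameters
pet_params = 223e6    # Pattern Exploiting Training model: 223M parameters

# How many times larger GPT-3 is than GPT-2.
growth = gpt3_params / gpt2_params

# Size of the PET model as a percentage of GPT-3's parameter count.
pet_share_percent = 100 * pet_params / gpt3_params

print(f"GPT-3 is about {growth:.0f}x the size of GPT-2")
print(f"The PET model has about {pet_share_percent:.2f}% of GPT-3's parameters")
```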

References

[1] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Lukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in Neural Information Processing Systems, pages 6000–6010.

[2] Matthew Peters, Mark Neumann, Mohit Iyyer, Matt Gardner, Christopher Clark, Kenton Lee, and Luke Zettlemoyer. Deep contextualized word representations. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT). Association for Computational Linguistics, 2018. doi: 10.18653/v1/N18-1202. URL https://www.aclweb.org/anthology/N18-1202

[3] Alec Radford, Karthik Narasimhan, Tim Salimans, and Ilya Sutskever. Improving language understanding by generative pre-training, 2018. Unpublished ms. available through a link at https://blog.openai.com/language-unsupervised/.

[4] Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT). Association for Computational Linguistics, 2019. URL https://arxiv.org/abs/1810.04805.

[5] Alex Wang, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel R. Bowman. GLUE: A multi-task benchmark and analysis platform for natural language understanding. In International Conference on Learning Representations, 2019. URL https://openreview.net/forum?id=rJ4km2R5t7.

[6] Alexis Conneau and Douwe Kiela. SentEval: An evaluation toolkit for universal sentence representations. In Proceedings of the 11th Language Resources and Evaluation Conference. European Language Resource Association, 2018. URL https://www.aclweb.org/anthology/L18-1269.

[7] Bryan McCann, James Bradbury, Caiming Xiong, and Richard Socher. Learned in translation: Contextualized word vectors. In Advances in Neural Information processing Systems (NeurIPS). Curran Associates, Inc., 2017. URL http://papers.nips.cc/paper/7209-learned-in-translation-contextualized-word-vectors.pdf.

[8] Jason Phang, Thibault Févry, and Samuel R Bowman. Sentence encoders on STILTs: Supplementary training on intermediate labeled-data tasks. arXiv preprint 1811.01088, 2018. URL https://arxiv.org/abs/1811.01088.

[9] Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. BoolQ: Exploring the surprising difficulty of natural yes/no questions. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 2924–2936, 2019.

[10] Marie-Catherine de Marneffe, Mandy Simons, and Judith Tonhauser. The CommitmentBank: Investigating projection in naturally occurring discourse. 2019. To appear in Proceedings of Sinn und Bedeutung 23. Data can be found at https://github.com/mcdm/CommitmentBank/.

[11] Melissa Roemmele, Cosmin Adrian Bejan, and Andrew S. Gordon. Choice of plausible alternatives: An evaluation of commonsense causal reasoning. In 2011 AAAI Spring Symposium Series, 2011.

[12] Daniel Khashabi, Snigdha Chaturvedi, Michael Roth, Shyam Upadhyay, and Dan Roth. Looking beyond the surface: A challenge set for reading comprehension over multiple sentences. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT). Association for Computational Linguistics, 2018. URL https://www.aclweb.org/anthology/papers/N/N18/N18-1023/.

[13] Sheng Zhang, Xiaodong Liu, Jingjing Liu, Jianfeng Gao, Kevin Duh, and Benjamin Van Durme. ReCoRD: Bridging the gap between human and machine commonsense reading comprehension. arXiv preprint 1810.12885, 2018.

[14] Ido Dagan, Oren Glickman, and Bernardo Magnini. The PASCAL recognising textual entailment challenge. In Machine Learning Challenges. Evaluating Predictive Uncertainty, Visual Object Classification, and Recognising Textual Entailment. Springer, 2006. URL https://link.springer.com/chapter/10.1007/11736790_9.

[15] Mohammad Taher Pilehvar and Jose Camacho-Collados. WiC: The word-in-context dataset for evaluating context-sensitive meaning representations. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT). Association for Computational Linguistics, 2019. URL https://arxiv.org/abs/1808.09121.

[16] Hector Levesque, Ernest Davis, and Leora Morgenstern. The Winograd schema challenge. In Thirteenth International Conference on the Principles of Knowledge Representation and Reasoning, 2012. URL http://dl.acm.org/citation.cfm?id=3031843.3031909.

[17] Nikita Nangia and Samuel R. Bowman. Human vs. Muppet: A conservative estimate of human performance on the GLUE benchmark. In Proceedings of the Association of Computational Linguistics (ACL). Association for Computational Linguistics, 2019. URL https://woollysocks.github.io/assets/GLUE_Human_Baseline.pdf.

[18] Storks, Shane, Qiaozi Gao, and Joyce Y. Chai. "Recent advances in natural language inference: A survey of benchmarks, resources, and approaches." arXiv preprint arXiv:1904.01172 (2019).